Soul's Open-Source Digital Humans Now React in the Blink of an Eye

Digital Humans Get Instant Reactions with Soul's New Open-Source Tech

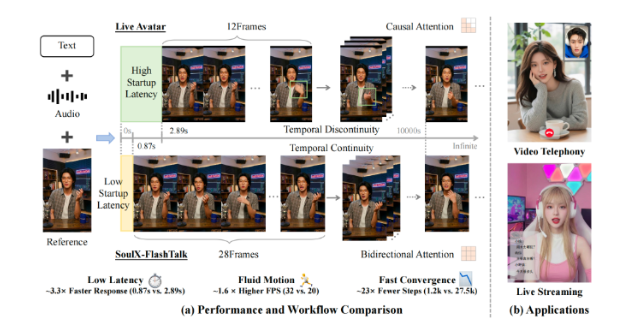

Imagine a virtual assistant that doesn't just understand you but also reacts naturally, with facial expressions and gestures flowing as smoothly as in a face-to-face conversation. That future just got closer with Soul AI Lab's release of SoulXFlashTalk, billed as the first open-source digital human model capable of truly real-time interaction.

Blazing Fast Virtual Responses

The numbers tell an impressive story:

- 1.4 billion parameters powering the model

- Less than one second of latency from input to response

- Animation at 32 frames per second for fluid movement
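To put those figures in perspective, here is a rough back-of-the-envelope calculation. It is illustrative arithmetic only, not taken from Soul's code, and the function names are hypothetical. It shows how much time a 32-frames-per-second stream leaves for each frame and how many frames fit inside a one-second window.

```python
# Illustrative arithmetic only. The two figures come from the article;
# the function names are hypothetical and not part of SoulXFlashTalk.
FPS = 32                # reported animation rate
LATENCY_BOUND_S = 1.0   # upper bound implied by "under one second"


def frame_budget_ms(fps: float) -> float:
    """Average time available to produce one frame at the given frame rate."""
    return 1000.0 / fps


def frames_within(seconds: float, fps: float) -> int:
    """Number of whole frames displayed during the given time span."""
    return int(seconds * fps)


print(frame_budget_ms(FPS))                 # 31.25
print(frames_within(LATENCY_BOUND_S, FPS))  # 32
```

In other words, sustaining 32 frames per second means delivering a new frame roughly every 31 milliseconds on average, however the model schedules that work internally.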

"We're not just building talking heads," explains a Soul spokesperson. "These digital humans can engage in natural back-and-forth conversations without that uncanny valley delay."

Open Doors for Developers

In a move that's shaking up the AI community, Soul has made everything publicly available:

- Complete project documentation

- Technical white papers

- Full source code access

- Pretrained model weights

This follows the company's October release of SoulXPodcast. Together, the two projects form what industry watchers are calling a "voice + vision" open-source powerhouse. For indie developers and startups lacking massive R&D budgets, this could level the playing field dramatically.

More Than Just Code Sharing

The company sees this as part of a larger mission. "Open-source isn't just about posting code online," says the Soul team. "It's about creating an ecosystem where everyone can build on each other's work to push boundaries faster."

Early adopters are already imagining applications far beyond customer service avatars:

- Education: Historical figures debating students in real time

- Social Media: Personalized digital influencers

- Therapy: Always-available counseling avatars

- Gaming: NPCs with genuinely human reactions

What Comes Next?

With its multi-modal AI strategy gaining momentum, Soul hints that more open-source releases are coming soon. Industry analysts predict this could trigger a wave of innovation comparable to the one that followed OpenAI's first public release of its GPT models.

The implications are staggering - we might be looking at the foundation for entirely new forms of digital interaction that feel less like talking to a machine and more like connecting with another being.

Key Points:

- Lightning-fast reactions: SoulXFlashTalk delivers digital humans that respond naturally without awkward pauses

- Full transparency: Everything from technical docs to model weights is now available to all developers

- Ecosystem play: Part of Soul's broader strategy to accelerate AI innovation through open collaboration

- Multi-industry impact: Potential applications span education, mental health, entertainment and beyond