Shanghai AI Lab Unveils Lumina-DiMOO for Multimodal AI

Shanghai AI Lab Launches Lumina-DiMOO

The Shanghai Artificial Intelligence Laboratory, in collaboration with leading universities, has unveiled Lumina-DiMOO, a single model that handles both multimodal generation and multimodal understanding. Dubbed the 'Comprehensive Diffusion Large Language Model,' it is designed to process diverse data types within one framework.

Innovative Architecture

Lumina-DiMOO employs what its developers call a 'Fully Discrete Diffusion Architecture,' which is intended to overcome limitations of earlier approaches to text and image processing. The approach treats all data as objects that can be incrementally 'denoised' and 'generated,' which simplifies the model structure and improves efficiency.
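To make the idea of incremental 'denoising' concrete, here is a minimal sketch in Python. It assumes a masked-token formulation of discrete diffusion, which the announcement does not spell out, and it is not Lumina-DiMOO's actual code. The names `MASK`, `toy_predictor`, and `iterative_unmask` are hypothetical, and the predictor is a random stand-in for a trained network.

```python
import math
import random

MASK = -1  # hypothetical id for the special "masked" token


def toy_predictor(tokens, rng, vocab_size):
    """Random stand-in for a trained network (an assumption for illustration).

    Returns one (predicted_token, confidence) pair per position.
    """
    return [(rng.randrange(vocab_size), rng.random()) for _ in tokens]


def iterative_unmask(length, steps, vocab_size=16, seed=0):
    """Start from a fully masked sequence and reveal tokens over a few steps."""
    rng = random.Random(seed)
    tokens = [MASK] * length
    history = []  # number of still-masked positions after each step
    for step in range(steps):
        masked = [i for i, t in enumerate(tokens) if t == MASK]
        if not masked:
            break
        preds = toy_predictor(tokens, rng, vocab_size)
        # Spread the remaining masked positions evenly over the remaining steps.
        n_reveal = math.ceil(len(masked) / (steps - step))
        # Commit only the most confident predictions; the rest stay masked.
        best = sorted(masked, key=lambda i: preds[i][1], reverse=True)[:n_reveal]
        for i in best:
            tokens[i] = preds[i][0]
        history.append(sum(t == MASK for t in tokens))
    return tokens, history


tokens, history = iterative_unmask(length=12, steps=4)
print(history)
```

Because several positions are committed in each pass, the whole sequence is filled in after a fixed number of steps rather than one token at a time, which is one plausible reading of the efficiency claim.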

Multimodal Integration

The model maps text, images, and audio into a shared high-dimensional semantic space, leveraging contrastive learning to align relationships between different data types. This enables seamless understanding and generation across modalities.
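As an illustration of contrastive alignment in a shared space, the sketch below computes a symmetric InfoNCE-style loss over paired text and image embeddings. The announcement does not say which loss Lumina-DiMOO uses, so the loss form, the `temperature` value, and the function names here are assumptions chosen for illustration.

```python
import math


def normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine_matrix(a, b):
    """Pairwise cosine similarity between every row of a and every row of b."""
    a = [normalize(v) for v in a]
    b = [normalize(v) for v in b]
    return [[sum(x * y for x, y in zip(u, v)) for v in b] for u in a]


def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)


def info_nce(text_emb, image_emb, temperature=0.07):
    """Symmetric contrastive loss: matched pairs share the same row index."""
    sims = cosine_matrix(text_emb, image_emb)
    logits = [[s / temperature for s in row] for row in sims]
    logits_t = [list(col) for col in zip(*logits)]  # image-to-text direction
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))


text = [[1.0, 0.0], [0.0, 1.0]]
aligned_images = [[1.0, 0.0], [0.0, 1.0]]
swapped_images = [[0.0, 1.0], [1.0, 0.0]]
print(info_nce(text, aligned_images) < info_nce(text, swapped_images))
```

The loss is low when each text embedding is closest to its own paired image embedding and high when the pairs are mismatched, which is the pressure that pulls related items from different modalities together in the shared space.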

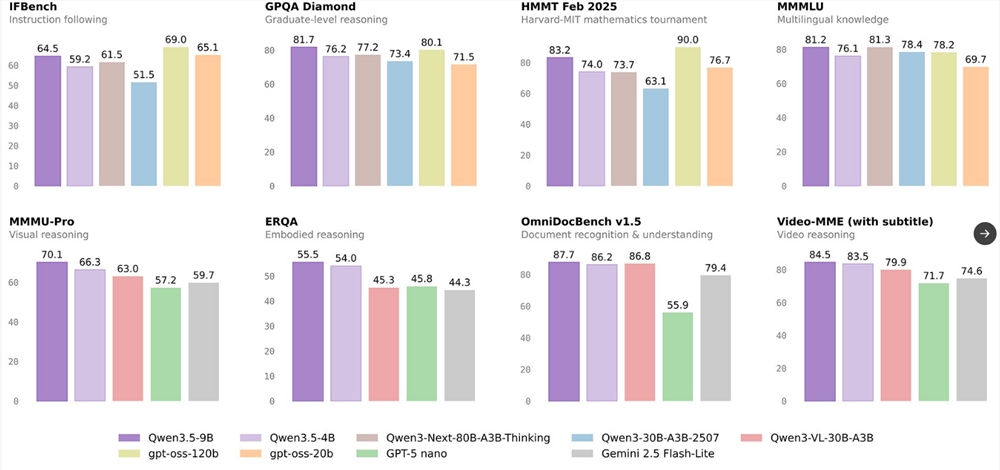

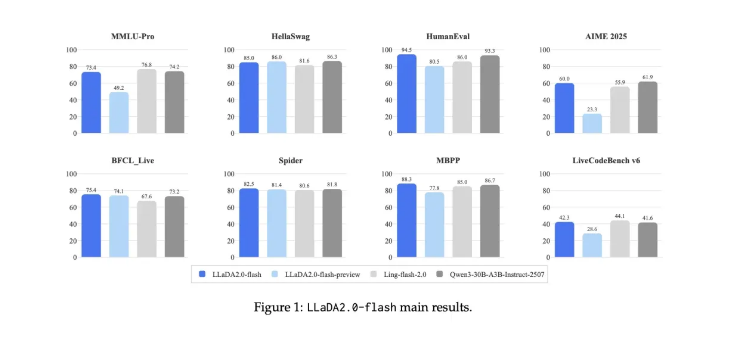

Performance Highlights

- Speed & Accuracy: Lumina-DiMOO produces high-quality images in fewer generation steps than its predecessors.

- Versatility: Excels in tasks like text-to-image generation, image analysis, and theme-driven content creation.

- Detail Recognition: Identifies nuanced elements of an image, such as its atmosphere and fine details.

Future Prospects

The release of Lumina-DiMOO marks a significant step forward for multimodal AI. Its adaptability suggests potential applications across industries, from creative arts to technical diagnostics.

Project Link: GitHub

Key Points:

- 🌟 Fully Discrete Diffusion Architecture enhances efficiency in multimodal data processing.

- 🛠️ Contrastive learning aligns diverse data types for unified understanding.

- 🚀 Exceptional performance in image generation and analysis, with wide-ranging applications.