SenseTime's Seko2.0 Brings Characters to Life Across AI-Generated Episodes

SenseTime Breaks New Ground with Character-Consistent AI Video Generation

Imagine watching a short drama where the protagonist maintains perfect continuity across episodes: the same facial features, consistent outfits, even matching micro-expressions. This isn't Hollywood magic but SenseTime's new Seko2.0 system, which the company says could transform AI-generated video content.

The Multi-Episode Breakthrough

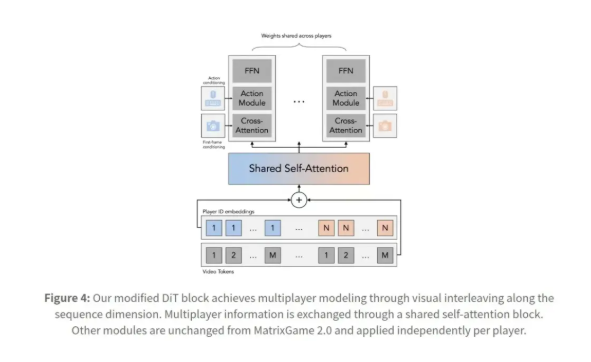

Traditional AI video tools struggle to keep characters consistent beyond a single clip. Characters may change appearance between scenes for no apparent reason, and plots can lose coherence across episodes. Seko2.0 addresses these issues through:

- Cross-frame attention mechanisms that track character details

- Memory modules preserving appearance and personality traits

- Integrated voice-to-lip synchronization for natural dialogue
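The article does not describe how Seko2.0 implements these mechanisms. As a rough illustration of the general idea only, the sketch below pairs a per-character memory with cross-frame attention: features saved from earlier frames serve as keys and values, and the current frame's query attends over them. All names here (`CharacterMemory`, `cross_frame_attend`, the toy 2-D vectors) are hypothetical and far simpler than a production video model.

```python
import math
from typing import Dict, List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max score before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class CharacterMemory:
    """Hypothetical per-character store of reference features (keys and
    values) collected from earlier frames or episodes."""

    def __init__(self) -> None:
        self.keys: Dict[str, List[Vector]] = {}
        self.values: Dict[str, List[Vector]] = {}

    def write(self, character: str, key: Vector, value: Vector) -> None:
        # Remember one more reference view of this character.
        self.keys.setdefault(character, []).append(key)
        self.values.setdefault(character, []).append(value)

    def cross_frame_attend(self, character: str, query: Vector) -> Vector:
        """Scaled dot-product attention: the current frame's query attends
        over the stored reference keys and returns a weighted mix of the
        corresponding values."""
        keys = self.keys[character]
        values = self.values[character]
        scale = 1.0 / math.sqrt(len(query))
        weights = softmax([dot(query, k) * scale for k in keys])
        dim = len(values[0])
        return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


# Usage: two stored views of the same (hypothetical) character.
memory = CharacterMemory()
memory.write("heroine", key=[1.0, 0.0], value=[0.9, 0.1])
memory.write("heroine", key=[0.0, 1.0], value=[0.2, 0.8])
out = memory.cross_frame_attend("heroine", query=[1.0, 0.0])
# out leans toward the first stored value, whose key matches the query.
```

Scaling scores by 1/sqrt(d) follows the standard transformer formulation. Keeping several reference features per character lets the current frame draw most heavily on whichever earlier view best matches it.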

The system combines two of SenseTime's proprietary models, SekoIDX (for image generation) and SekoTalk (for voice-driven animation), into a single pipeline. According to SenseTime, early tests showed characters maintaining 98% visual consistency across ten consecutive episodes, which the company describes as an industry first.
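The article does not say how the 98% figure is measured. One common way to quantify cross-episode identity consistency is to embed the character's appearance in each episode and average the cosine similarity against a reference embedding. The sketch below assumes those embeddings already exist; `consistency_score` is a hypothetical helper, not SenseTime's evaluation method.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero vectors")
    return dot / (norm_a * norm_b)


def consistency_score(reference: Sequence[float],
                      episodes: List[Sequence[float]]) -> float:
    """Mean cosine similarity between a reference appearance embedding and
    the same character's embedding in each episode (1.0 = same direction)."""
    if not episodes:
        raise ValueError("need at least one episode embedding")
    sims = [cosine_similarity(reference, e) for e in episodes]
    return sum(sims) / len(sims)


# Usage: nine episodes match the reference exactly, one drifts completely.
reference = [1.0, 0.0, 0.0]
episodes = [[1.0, 0.0, 0.0]] * 9 + [[0.0, 1.0, 0.0]]
print(consistency_score(reference, episodes))  # 0.9
```

In practice the score depends heavily on which appearance encoder produces the embeddings, so figures from different evaluations are not directly comparable.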

Domestic Tech Stack Comes Together

Perhaps more significant than the creative capabilities is the complete Chinese technological stack supporting Seko2.0:

Cambricon chips → SenseTime models → Seko2.0 application

According to the companies, the collaboration with Cambricon is China's first fully domestic solution covering:

- Hardware (AI chips)

- Foundational models

- End-user applications

This is designed to remove the dependency on foreign GPUs while meeting the strict data sovereignty requirements of the government and financial sectors.

Practical Applications Emerge

Content creators can now:

- Input a story outline and receive complete episodic videos

- Maintain brand characters across marketing campaigns

- Develop educational series with reliable instructor avatars

The technology shines brightest in scenarios that demand both quality and scale, such as generating hundreds of personalized training videos or regional advertising variants overnight.

As one beta tester remarked: "It's like having a digital film crew that never forgets an actor's costume changes."

Key Points:

- Character Memory: Seko2.0 maintains unprecedented visual consistency across episodes

- Complete Ecosystem: Combines domestic chips with SenseTime's multimodal models

- Production Pilots: Currently deployed in pilot projects across media, education, and advertising

- Data Sovereignty: Offers a secure alternative to foreign-based AIGC solutions