Runlayer Emerges from Stealth with $11M Boost to Secure AI Protocols

Runlayer Secures $11M Seed Round to Protect AI Operations

Runlayer announced today that it has emerged from stealth with an $11 million seed round. The round was co-led by venture firms Khosla Ventures and Felicis.

Addressing Critical Security Gaps

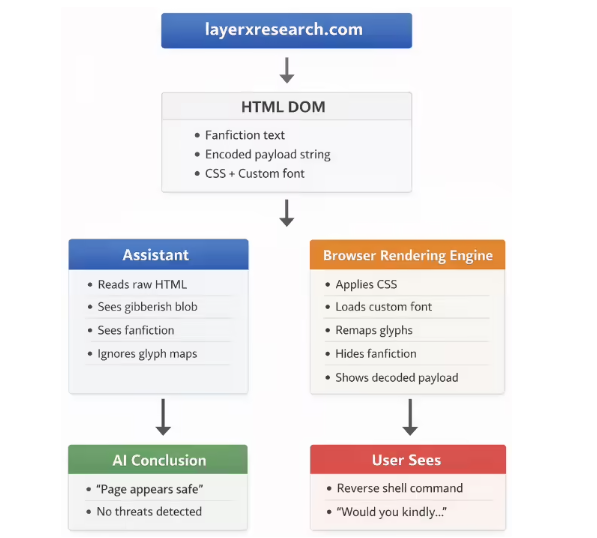

The startup specializes in securing the Model Context Protocol (MCP), an open protocol for connecting AI agents to external tools and data that has been adopted by tech giants including OpenAI, Microsoft, AWS, and Google. While MCP enables AI agents to autonomously handle data and business operations, its rapid adoption has exposed security vulnerabilities.

"We've seen firsthand how prompt injection attacks and unauthorized data access can compromise systems," said founder Andrew Berman, former Director of AI at Zapier. "Our platform creates essential guardrails for this powerful technology."

Impressive Early Traction

Despite operating quietly for just four months, Runlayer has already onboarded eight major clients, including unicorns Gusto, dbt Labs, Instacart, and Opendoor. The company has also brought on David Soria Parra, principal author of the MCP specification, as a consultant.

The security platform combines multiple critical functions:

- Gateway protection against malicious prompts

- Real-time threat detection

- Comprehensive audit logging

- Fine-grained permission controls

How It Works

The system uses an "Okta-style" directory that lets IT teams pre-authorize MCP servers and link them directly to employee identities. This creates a clear chain of accountability in which an AI agent's permissions mirror those of the user it acts for.
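To make the directory idea concrete, here is a minimal sketch of how such a check could work. It is an illustration only and not Runlayer's implementation or API: the `User` and `Directory` classes, the `approve` and `authorize` methods, and the `crm-mcp` server name are all hypothetical. It assumes a request is allowed only when the MCP server has been pre-authorized and the requested action is one the user already holds, and that every decision is recorded for auditing.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class User:
    """Hypothetical employee identity, e.g. synced from an identity provider."""
    email: str
    privileges: frozenset


@dataclass
class Directory:
    """Hypothetical directory of MCP servers pre-authorized by IT."""
    approved_servers: set = field(default_factory=set)
    audit_log: list = field(default_factory=list)

    def approve(self, server: str) -> None:
        # IT pre-authorizes an MCP server by name.
        self.approved_servers.add(server)

    def authorize(self, user: User, server: str, action: str) -> bool:
        # The agent inherits the user's privileges: it may act only on an
        # approved server, and only with an action the user already holds.
        allowed = server in self.approved_servers and action in user.privileges
        # Record every decision, allowed or denied, so it can be traced later.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user.email,
            "server": server,
            "action": action,
            "allowed": allowed,
        })
        return allowed


# Example usage with made-up names.
directory = Directory()
directory.approve("crm-mcp")
alice = User("alice@example.com", frozenset({"crm:read"}))

can_read = directory.authorize(alice, "crm-mcp", "crm:read")
can_write = directory.authorize(alice, "crm-mcp", "crm:write")
```

In this sketch, denials are logged alongside approvals, which is one simple way to tie each agent action back to a named employee.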

"What excites me most is solving the observability blind spots," Berman explained. "When something goes wrong with an AI operation today, companies often struggle to trace why or how."

The platform integrates seamlessly with existing identity providers like Okta and Microsoft Entra while generating compliance-ready audit trails.

Rapid Development Timeline

Berman's team moved fast, conceiving the idea in August after recognizing security gaps while building early MCP implementations at Zapier. Within four months, they had developed a product prototype and secured paying customers.

The fresh capital will fund engineering team expansion ahead of the company's general availability launch later this year. Future plans include support for private on-premises deployments and multi-cloud environments.

Key Points:

- Runlayer raises $11M seed round co-led by Khosla Ventures & Felicis

- Targets security gaps in the rapidly adopted Model Context Protocol

- Already serves eight major clients including Instacart & Opendoor

- Founder brings deep experience from Zapier's AI division

- Platform combines gateway protection with identity-based controls