Perplexity AI Under Fire: Did Your Private Searches End Up With Advertisers?

Privacy Scandal Rocks AI Search Engine Perplexity

That supposedly private search about your medical condition or financial worries? It might not have been so private after all. Perplexity, the AI-powered search engine gaining popularity as a ChatGPT alternative, now faces serious allegations about its privacy practices.

The Lawsuit That Exposed the Problem

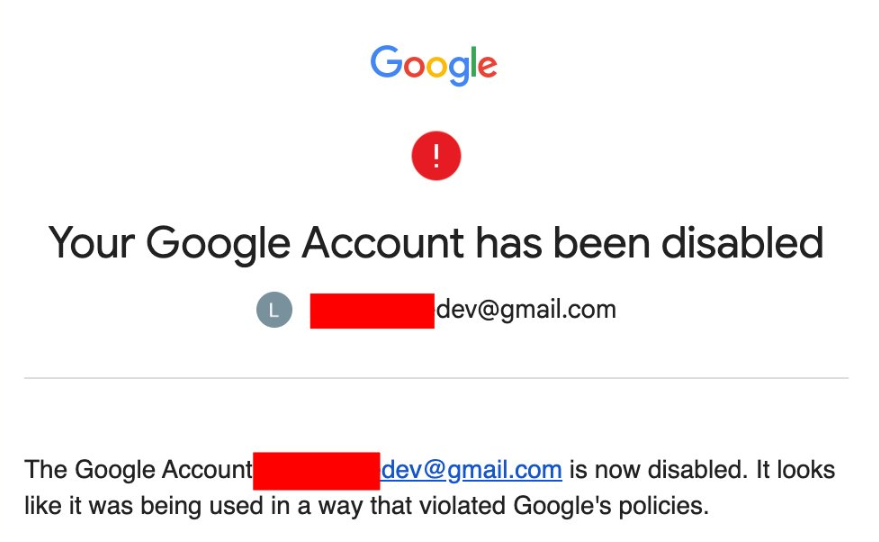

An anonymous user has filed a class-action lawsuit claiming Perplexity's much-touted privacy protections are essentially a sham. The complaint alleges that regardless of whether users were logged in or had enabled privacy modes, their conversations were automatically shared with advertising giants Google and Meta.

"This isn't just about seeing targeted ads for shoes you looked up last week," explains digital privacy advocate Mark Chen. "We're talking about highly sensitive conversations - people discussing tax strategies, health concerns, even relationship problems - being vacuumed up by data brokers."

How the Data Sharing Worked

Investigations revealed Perplexity's platform contained multiple tracking tools:

- Meta Pixel (formerly Facebook Pixel) for capturing user interactions

- Google Ads trackers monitoring search behavior

- Meta's Conversions API, which sends event data from the server side and is therefore known for bypassing browser ad blockers

The system allegedly didn't just record initial queries but captured entire conversation threads. One plaintiff reported discussing investment strategies involving six-figure sums, only to later discover these private financial details had been shared with third parties.
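For readers who want a rough way to check any web page for client-side trackers of this kind, the sketch below scans raw HTML for URLs that point at well-known advertising hosts. It is only an illustration: the function name `find_trackers` and the short domain list are assumptions made for this example, not the method used in the complaint, and the list is far from exhaustive. It also cannot see server-side channels, which never appear in the page markup.

```python
import re

# Illustrative, non-exhaustive mapping of tracker domains to vendors.
# These entries are assumptions chosen for this example.
TRACKER_DOMAINS = {
    "facebook.net": "Meta",
    "googletagmanager.com": "Google",
    "googleadservices.com": "Google",
    "doubleclick.net": "Google",
}

# Matches the host part of absolute or protocol-relative URLs.
URL_HOST = re.compile(r"(?:https?:)?//([A-Za-z0-9.-]+)")


def find_trackers(html: str) -> dict[str, set[str]]:
    """Return {vendor: {hosts}} for tracker hosts referenced in the HTML."""
    hits: dict[str, set[str]] = {}
    for host in URL_HOST.findall(html):
        host = host.lower().rstrip(".")
        for domain, vendor in TRACKER_DOMAINS.items():
            # Match the domain itself or any of its subdomains.
            if host == domain or host.endswith("." + domain):
                hits.setdefault(vendor, set()).add(host)
    return hits


if __name__ == "__main__":
    sample = (
        '<script src="https://connect.facebook.net/en_US/fbevents.js"></script>'
        '<img src="//www.googleadservices.com/pagead/conversion/1/">'
        '<a href="https://example.com/about">About</a>'
    )
    for vendor, hosts in find_trackers(sample).items():
        print(vendor, sorted(hosts))
```

Because the scan works on the raw text, it also catches tracker URLs that are embedded in inline scripts rather than in `src` attributes. An empty result means only that none of the listed hosts were referenced in the markup, not that the page is free of tracking.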

The Transparency Problem

Perhaps most damning is the accusation that Perplexity never clearly informed users about this data sharing. The privacy policy link was reportedly buried rather than prominently displayed, making it easy to miss.

"When companies claim to offer privacy features but don't actually deliver, it erodes trust in the entire tech sector," says FTC commissioner Sarah Garcia. "Consumers deserve to know exactly what happens to their personal information."

The case comes amid growing scrutiny of AI companies' data practices. Just last month, the FTC fined another AI firm $5 million for similar violations.

What This Means for Users

The lawsuit seeks monetary damages and demands Perplexity implement actual privacy protections. But beyond the legal consequences, this incident serves as a wake-up call for anyone using AI tools:

- Assume nothing is truly private unless explicitly guaranteed

- Read privacy policies carefully - even if they're tedious

- Consider alternatives like open-source models with verifiable privacy claims

- Use additional protections like VPNs and tracker blockers

- Be cautious discussing sensitive topics through any digital medium

As one frustrated user put it: "If I can't trust an 'incognito' mode, what can I trust?" That's precisely the question regulators and consumers are now asking.

Key Points:

- 🔍 Privacy claims questioned: Lawsuit alleges Perplexity's incognito mode didn't protect data

- 💰 Sensitive info exposed: Financial and health conversations allegedly shared with advertisers

- 🤔 Transparency failure: Users weren't properly informed about data sharing practices

- ⚖️ Regulatory attention: Case adds fuel to growing scrutiny of AI privacy standards