NVIDIA Bets Big on Groq Tech for New AI Chip, Wins Back OpenAI

NVIDIA Doubles Down on AI Inference With Groq-Powered Chip

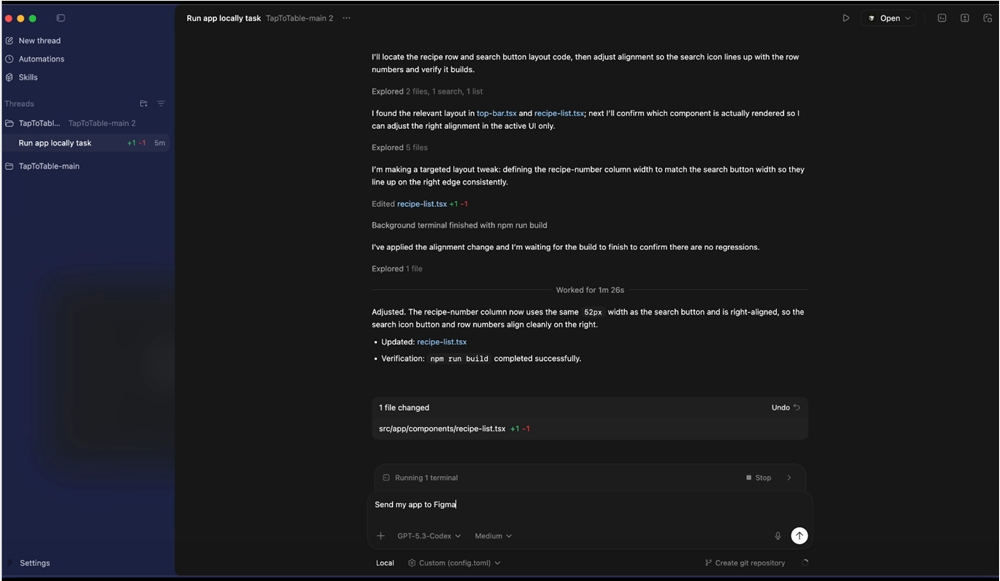

The AI hardware landscape is about to get shaken up. NVIDIA, long the undisputed leader in AI training chips, is making its boldest play yet for the lucrative inference market. Sources say the company will use its upcoming GTC developer conference to debut a specialized processor that incorporates technology from Silicon Valley darling Groq.

Solving AI's Speed Problem

At the heart of this deal lies Groq's language processing unit (LPU) architecture. NVIDIA's GPUs excel at training massive models. Groq's design instead targets inference, the stage where a trained model generates responses, and is built to do that work quickly and efficiently. That addresses what industry insiders call "the latency bottleneck."

The deal didn't come cheap. NVIDIA reportedly paid $2 billion for Groq's IP and top engineering talent. Analysts say it could pay off by addressing growing customer frustration with sluggish chatbot responses and high operating costs.

OpenAI Comes Full Circle

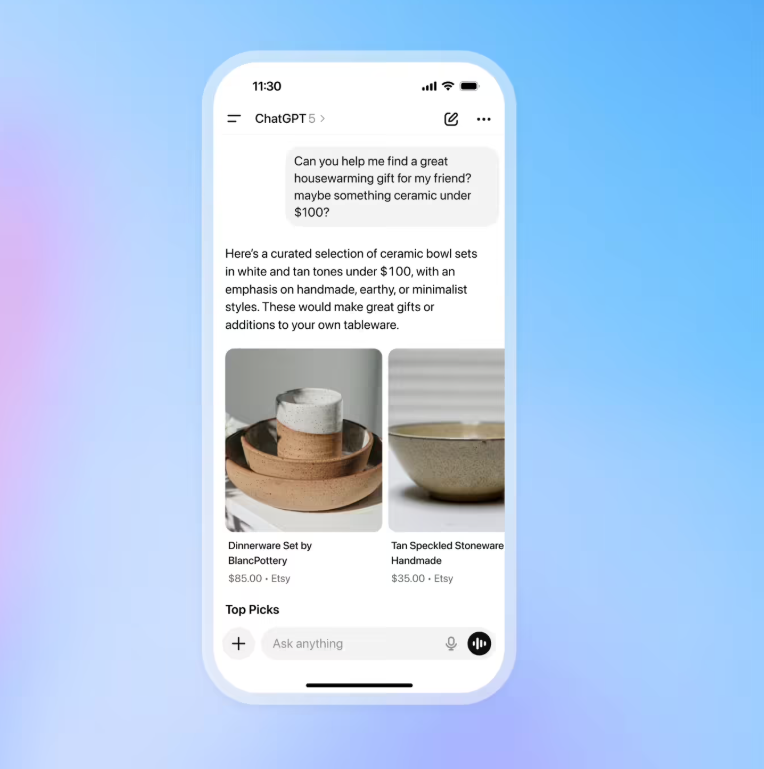

Perhaps the most telling endorsement comes from OpenAI, which had begun exploring alternatives amid concerns about GPU costs. The ChatGPT maker had signed deals with startups such as Cerebras before reversing course to become one of NVIDIA's first major customers for the new platform.

Insiders suggest OpenAI plans to use these chips to supercharge its Codex programming assistant, putting pressure on rivals like Anthropic. The move highlights how critical hardware performance has become in the race to deliver seamless AI experiences.

The Inference Arms Race Heats Up

The stakes couldn't be higher as cloud giants like Google and Amazon develop their own specialized chips. What began as NVIDIA's market to lose has become a free-for-all, with each player betting big on different architectural approaches.

With inference workloads projected to eclipse training demand, this NVIDIA-Groq collaboration could quickly reshape competitive dynamics. One thing is certain: the battle for AI supremacy will be won not just in algorithms, but in silicon.

Key Points:

- NVIDIA integrating Groq's LPU technology into new inference-focused chip

- $2 billion deal brings critical IP and engineering talent

- OpenAI commits as launch customer after flirting with competitors

- Move targets growing frustration with slow response times

- Intensifies competition with cloud providers' custom silicon efforts