Mistral AI's Small4: A Versatile Powerhouse for Developers

Mistral AI Breaks New Ground with Small4 Release

In the competitive world of open-source AI models, European contender Mistral AI has taken another step forward. Its newly launched Small4 model marks a significant milestone: it is the company's first general-purpose large language model to combine several advanced capabilities in a single package.

The All-in-One Solution

What makes Small4 stand out? For the first time, developers get flagship-level reasoning, multimodal understanding, and robust programming capabilities without switching between specialized models. "It's like having your cake and eating it too," remarked one early tester. That versatility could streamline workflows for teams working across different AI applications.

Under the Hood: Technical Breakthroughs

The secret to Small4's efficiency lies in its advanced Mixture of Experts (MoE) architecture:

- Smart parameter usage: With 119 billion total parameters but only 6 billion activated at any time, it achieves impressive performance without unnecessary computational overhead

- Expanded memory: A 256k-token context window means it can take in entire technical manuals or large codebases in a single prompt

- Flexible operation modes: Users can toggle between quick responses for simple queries and deep reasoning for complex problems

- Open-source advantage: Released under the permissive Apache 2.0 license, making it accessible to a wide range of developers
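
To make the "smart parameter usage" point concrete, here is a minimal sketch of top-k expert routing, the general idea behind Mixture of Experts layers. It is a toy illustration, not Mistral's implementation: the number of experts, the value of `k`, the scalar "experts", and the router scores are all hypothetical, since the article does not describe Small4's internal layout. Only the 119 billion and 6 billion figures come from the text above.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    top = ranked[:k]
    # Weights are renormalized over the selected experts only.
    weights = softmax([router_scores[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, router_scores, k):
    """Weighted sum of the outputs of the k selected experts; the rest never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))

# Hypothetical toy setup: 8 scalar "experts", 2 active per token.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
router_scores = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

selected = route_top_k(router_scores, k=2)
y = moe_forward(1.0, experts, router_scores, k=2)
print("selected experts:", [i for i, _ in selected])

# Figures from the article: 119B total parameters, 6B active at a time.
TOTAL_PARAMS = 119e9
ACTIVE_PARAMS = 6e9
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active share of parameters: {active_fraction:.1%}")
```

With the article's numbers, roughly 5% of the parameters do the work for any given token, which is where the compute savings come from. All of the weights still have to be loaded, though, so the memory footprint follows the total count rather than the active one.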

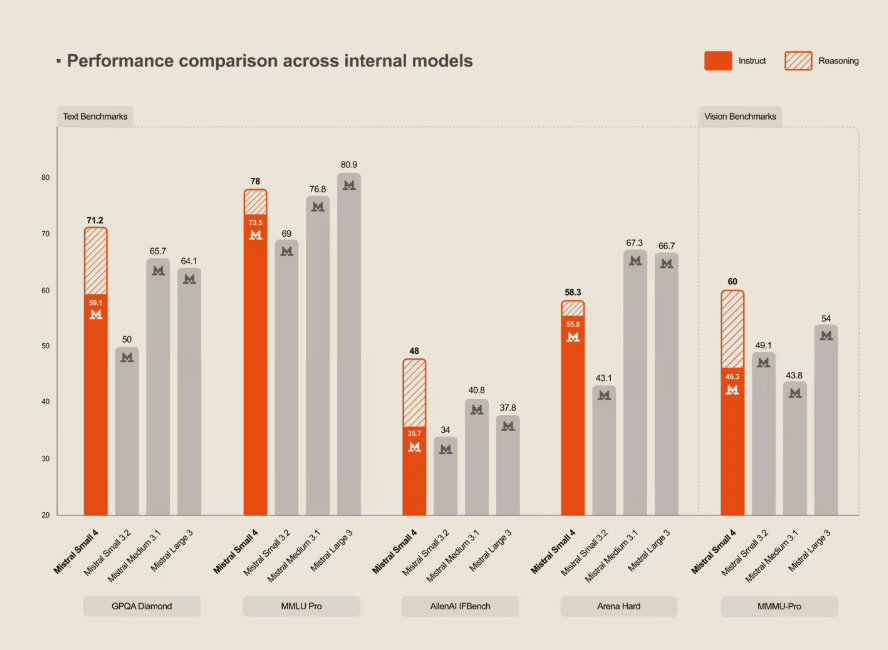

Performance That Turns Heads

Benchmark tests show Small4 isn't just versatile; it's also fast. Compared to its predecessor:

- Response times dropped by 40% in latency-optimized mode

- Throughput tripled in high-demand scenarios

The model holds its own against competitors too, matching OpenAI's gpt-oss-120b in key performance metrics.
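
For a sense of what those two figures mean in practice, the arithmetic can be sketched as follows. The baseline latency and request rate are hypothetical placeholders, as the article gives only the relative changes (40% lower latency, 3x throughput), not absolute numbers.

```python
def latency_after_reduction(baseline_ms, reduction):
    """Latency after a fractional reduction (0.40 means 40% lower)."""
    return baseline_ms * (1.0 - reduction)

def speedup_from_reduction(reduction):
    """How many times faster a single response is after the reduction."""
    return 1.0 / (1.0 - reduction)

def throughput_after_gain(baseline_rps, multiplier):
    """Requests per second after a multiplicative throughput gain."""
    return baseline_rps * multiplier

# Hypothetical baselines for the predecessor; not taken from any benchmark.
BASELINE_LATENCY_MS = 500.0
BASELINE_RPS = 10.0

new_latency_ms = latency_after_reduction(BASELINE_LATENCY_MS, 0.40)
speedup = speedup_from_reduction(0.40)
new_rps = throughput_after_gain(BASELINE_RPS, 3.0)

print(f"latency: {BASELINE_LATENCY_MS:.0f} ms -> {new_latency_ms:.0f} ms "
      f"({speedup:.2f}x faster per response)")
print(f"throughput: {BASELINE_RPS:.0f} req/s -> {new_rps:.0f} req/s")
```

Note that a 40% drop in latency works out to about a 1.67x per-response speedup, not 1.4x, and that the latency and throughput gains are quoted for different operating modes, so they should not be multiplied together.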

Hardware Considerations

To get the most from Small4, Mistral recommends:

- Minimum setup: 4× HGX H100 or 1× DGX B200 systems

- Optimal configuration: 4× HGX H200 or 2× DGX B200 clusters

These requirements reflect the model's power while keeping it accessible to serious development teams.
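
A back-of-the-envelope estimate helps explain why multi-GPU systems are on the list. The sketch below computes the memory needed for the weights alone at a few common precisions. The precisions and the 80 GB device size are assumptions made for illustration; the article does not say which weight format Mistral's hardware guidance targets, and the estimate ignores the KV cache, activations, and runtime overhead, all of which add to the total.

```python
import math

TOTAL_PARAMS = 119e9  # total parameter count, from the article

# Bytes per parameter for common weight formats (assumed, not from the article).
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}

def weight_memory_gb(total_params, bytes_per_param):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return total_params * bytes_per_param / 1e9

def min_devices_for_weights(weight_gb, device_gb):
    """Lower bound on device count; ignores KV cache and activations."""
    return math.ceil(weight_gb / device_gb)

footprints = {name: weight_memory_gb(TOTAL_PARAMS, nbytes)
              for name, nbytes in BYTES_PER_PARAM.items()}
for name, gb in footprints.items():
    print(f"{name}: {gb:.1f} GB of weights")

# Hypothetical accelerator with 80 GB of memory.
devices_bf16 = min_devices_for_weights(footprints["bf16"], device_gb=80.0)
print(f"at least {devices_bf16} such devices for bf16 weights alone")
```

Because every expert must be resident in memory even though only a fraction runs per token, the footprint scales with the full 119 billion parameters. A long context window adds further memory on top of that, so real deployments need headroom well beyond this lower bound.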

The release positions Mistral as a serious player in the open-source AI space, offering developers an attractive alternative to proprietary solutions. As one industry analyst put it: "This could change how teams approach multi-disciplinary AI projects."