Mistral AI's New Small4 Model: A Versatile Powerhouse for Developers

Mistral AI Breaks New Ground with Small4 Release

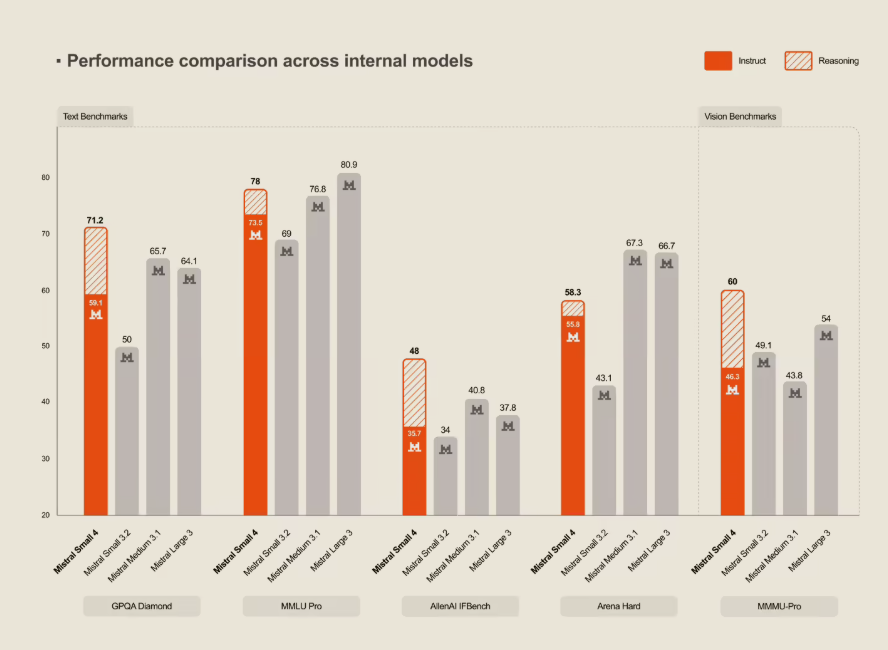

European AI research lab Mistral continues to make waves in the open-source community with its latest release, the highly anticipated Small4 model. This isn't just another incremental update; it represents a significant leap forward in multifunctional AI capabilities.

One Model to Rule Them All

What sets Small4 apart is its versatility. For the first time in a Mistral release, developers get flagship-level reasoning, multimodal understanding, and robust programming capabilities in a single model.

"We've eliminated the need to choose between specialized models," explains a Mistral spokesperson. "Small4 delivers comprehensive performance across multiple domains without compromise."

Under the Hood

The model's impressive capabilities stem from its advanced architecture:

- MoE Design: A Mixture of Experts (MoE) architecture with 119B total parameters, of which only 6B are active for any given token

- Expanded Memory: A generous 256k-token context window handles long technical documents and large codebases

- Dual Modes: Switch between fast response and deep reasoning depending on your needs

- Open Access: Released under the Apache 2.0 license for maximum community accessibility
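To make the MoE bullet concrete, here is a minimal sketch of top-k expert routing in plain Python. It only illustrates the general technique: the expert count, the value of k, the toy experts, and the function names are all hypothetical and are not taken from Small4's actual configuration. The 119B and 6B figures are the only numbers drawn from the article.

```python
import math
import random

TOTAL_PARAMS_B = 119  # total parameters, from the article
ACTIVE_PARAMS_B = 6   # active parameters, from the article


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([router_scores[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, router_scores, k):
    """Weighted sum over the selected experts only; the rest never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))


# Hypothetical toy setup: 8 experts, 2 active per token (NOT Small4's real config).
NUM_EXPERTS, TOP_K = 8, 2
experts = [lambda x, s=s: s * x for s in range(1, NUM_EXPERTS + 1)]
rng = random.Random(0)
scores = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]

selected = route_top_k(scores, TOP_K)
output = moe_forward(1.0, experts, scores, TOP_K)
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B

print(f"experts used: {[i for i, _ in selected]} of {NUM_EXPERTS}")
print(f"active parameter share: {active_fraction:.1%}")
```

The point of the design is that compute per token scales with the active parameters (roughly 5% of the total here), while the full set of experts still has to be stored.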

Performance metrics show dramatic improvements over the previous version. In latency-optimized mode, completion times drop by 40%, while throughput-optimized mode triples request capacity compared to Small3. Independent benchmarks place it on par with OpenAI's GPT-OSS 120B across key tests.
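A 40% drop in completion time is not the same as "40% faster", so it helps to work through the arithmetic. The sketch below uses the two percentages from the article; the baseline figures for Small3 are hypothetical placeholders chosen only to make the numbers easy to read.

```python
def speedup_from_time_reduction(reduction):
    """Convert a fractional drop in completion time into a speedup factor."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - reduction)


LATENCY_REDUCTION = 0.40      # from the article
THROUGHPUT_MULTIPLIER = 3.0   # from the article

# Hypothetical Small3 baseline, for illustration only.
baseline_seconds = 10.0
baseline_requests_per_min = 100.0

new_seconds = baseline_seconds * (1.0 - LATENCY_REDUCTION)
speedup = speedup_from_time_reduction(LATENCY_REDUCTION)
new_requests_per_min = baseline_requests_per_min * THROUGHPUT_MULTIPLIER

print(f"completion time: {baseline_seconds:.1f}s -> {new_seconds:.1f}s "
      f"({speedup:.2f}x speedup)")
print(f"throughput: {baseline_requests_per_min:.0f} -> "
      f"{new_requests_per_min:.0f} requests/min")
```

Under these assumptions, a 40% shorter completion time corresponds to roughly a 1.67x speedup, and the two modes optimize different things: one shortens each request, the other serves more requests at once.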

Hardware Considerations

To run Small4 effectively, Mistral recommends:

- Minimum: 4× HGX H100 or 1× DGX B200

- Optimal: 4× HGX H200 or 2× DGX B200 configuration

The hardware requirements reflect the model's sophistication but remain accessible to serious developers and organizations.
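A rough back-of-the-envelope estimate shows why multi-GPU systems are recommended. The sketch below computes the memory needed for the weights alone at a few storage precisions. The precisions and the 80 GB per-device figure are assumptions for illustration; the article does not say which precision the recommendations are based on, and the estimate ignores the KV cache, activations, and runtime overhead.

```python
import math

TOTAL_PARAMS = 119e9  # total parameter count, from the article

# Assumed storage precisions in bytes per parameter (not stated in the article).
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}

# Hypothetical per-device memory, in decimal gigabytes.
DEVICE_MEMORY_GB = 80.0


def weight_footprint_gb(num_params, bytes_per_param):
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_devices_for_weights(footprint_gb, device_memory_gb):
    """Lower bound on device count: enough memory to hold the weights, nothing else."""
    return math.ceil(footprint_gb / device_memory_gb)


for name, nbytes in BYTES_PER_PARAM.items():
    gb = weight_footprint_gb(TOTAL_PARAMS, nbytes)
    devices = min_devices_for_weights(gb, DEVICE_MEMORY_GB)
    print(f"{name}: {gb:.1f} GB of weights, at least {devices} x "
          f"{DEVICE_MEMORY_GB:.0f} GB devices")
```

Note that although only 6B parameters are active per token, all 119B generally have to be resident in memory, since any expert may be selected for the next token. A 256k context adds a substantial KV cache on top of these figures.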

What This Means for AI Development

The release strengthens Mistral's position as Europe's leading open-source AI lab while challenging the dominance of larger tech companies in the space. By combining multiple capabilities in one efficient package, Small4 could simplify development workflows and accelerate innovation.

Key Points:

- First Mistral model to combine reasoning, multimodal understanding, and programming capabilities in one package

- MoE architecture balances performance with efficiency (119B total/6B active parameters)

- Significant performance gains over Small3 (40% shorter completion times in latency-optimized mode, triple the request capacity in throughput mode)

- Released under the Apache 2.0 license for community access and development