Mistral AI's New Small4 Model: A Swiss Army Knife for Developers

Mistral AI Raises the Bar with Small4 Release

In the fast-moving world of open-source AI, Paris-based Mistral AI has taken a significant step forward. Its newly launched Small4 model isn't just another incremental update. It's what developers have been waiting for: a truly general-purpose tool that doesn't force painful compromises.

Breaking the Specialization Trade-off

For years, developers faced a frustrating choice: pick a model excelling at one task (like coding) but struggling with others, or settle for mediocre all-around performance. Small4 changes this equation by delivering:

- Flagship-level reasoning comparable to proprietary models

- Multimodal understanding that handles both text and image inputs

- Programming prowess that can navigate complex codebases

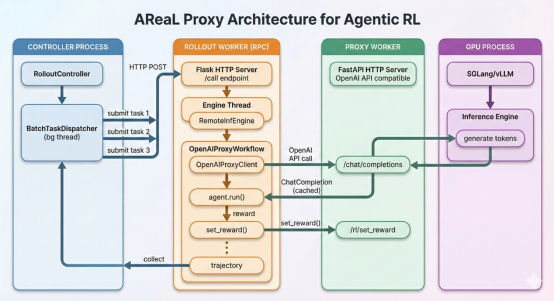

The secret sauce? A Mixture of Experts (MoE) architecture that activates only about 6B of its 119B total parameters for each token it processes. Compute per token scales with the active parameters rather than the full model, so you get top-tier performance without paying for the whole network on every step.
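To make the sparse-activation idea concrete, here is a minimal sketch of top-k expert routing in plain Python. The expert count (8), the number of active experts (2), the random gate scores, and the toy expert functions are all hypothetical illustrations, not Small4's actual configuration. Only the 6B and 119B figures come from the article.

```python
import math
import random


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and normalize their weights."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    # Softmax over the chosen logits only, so the k weights sum to 1.
    peak = max(gate_logits[i] for i in chosen)
    exps = [math.exp(gate_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(x, experts, gate_logits, k):
    """Run only the routed experts and blend their outputs."""
    routes = top_k_route(gate_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routes)
    return output, routes


# Hypothetical toy setup: 8 experts, 2 active per token.
NUM_EXPERTS = 8
TOP_K = 2
experts = [lambda x, scale=n + 1: x * scale for n in range(NUM_EXPERTS)]

# In a real model a learned router computes these scores from the token itself;
# random values stand in for that here.
rng = random.Random(0)
gate_logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

output, routes = moe_forward(1.0, experts, gate_logits, TOP_K)
print("experts used:", [i for i, _ in routes])
print("experts skipped:", NUM_EXPERTS - len(routes))

# Share of parameters active per token, using the article's figures.
active_share = 6 / 119
print(f"active parameter share: {active_share:.1%}")
```

With the article's numbers, roughly 5% of the parameters do work on any single token. The other experts still have to be loaded, which is why memory requirements follow the total parameter count rather than the active one.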

Practical Advantages That Matter

What does this mean in real-world terms? Imagine working with:

- Technical documentation spanning hundreds of pages (thanks to the 256k-token context window)

- Complex programming tasks requiring deep code understanding

- Multimodal projects blending text and visual elements

All without switching between different specialized models. The efficiency gains are tangible too: Small4 completes tasks 40% faster than its predecessor in latency-optimized mode and handles three times as many requests per second in throughput-optimized mode.
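As a rough way to judge whether a document set fits in a single request, the sketch below estimates token counts from character counts. It assumes "256k" means 256,000 tokens and uses about 4 characters per token, a common rule of thumb for English prose rather than the model's real tokenizer. The function names and the output reserve are hypothetical.

```python
CONTEXT_WINDOW_TOKENS = 256_000  # assumption: "256k" read as 256,000 tokens
CHARS_PER_TOKEN = 4              # assumption: rough heuristic for English prose


def estimate_tokens(text):
    """Rough token estimate from character count; not a real tokenizer."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(documents, reserved_for_output=4_000):
    """Check whether all documents plus an output budget fit in one request."""
    used = sum(estimate_tokens(doc) for doc in documents)
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_output
    return used <= budget, used, budget


# Hypothetical input: a 300-page manual at about 3,000 characters per page.
manual = "x" * (300 * 3_000)
ok, used, budget = fits_in_context([manual])
print(f"fits: {ok}, estimated tokens: {used:,}, budget: {budget:,}")
```

Under these assumptions a 300-page manual comes to about 225,000 tokens, which fits with some room left for the response. Code and non-English text often tokenize less efficiently, so treat the estimate as an upper-level sanity check and count with the actual tokenizer before relying on it.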

Hardware Considerations for Optimal Performance

To get the most from Small4, Mistral recommends:

- Minimum: 4× HGX H100 or 1× DGX B200

- Recommended: 4× HGX H200 or 2× DGX B200

The choice between these setups depends on whether you prioritize cost-efficiency or peak performance.
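For a sense of why multi-GPU hardware is needed at all, here is a back-of-the-envelope estimate of the memory that the weights alone would occupy. It is a sketch under stated assumptions: the bytes-per-parameter values are the usual sizes for each numeric format, the per-GPU memory figures (80 GB for an H100, 141 GB for an H200) are taken from the standard SXM parts, and the helper names are hypothetical. It ignores KV cache, activations, and runtime overhead, so it does not reproduce Mistral's recommendations.

```python
import math

TOTAL_PARAMS = 119_000_000_000  # from the article: 119B total parameters

# Assumption: typical storage size per parameter for each format.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}

# Assumption: memory per single GPU, in GB.
GPU_MEMORY_GB = {"H100": 80, "H200": 141}


def weight_footprint_gb(params, bytes_per_param):
    """Memory needed to hold the weights only, in gigabytes."""
    return params * bytes_per_param / 1e9


def min_gpus_for_weights(footprint_gb, gpu_memory_gb):
    """Smallest GPU count whose combined memory holds the weights."""
    return math.ceil(footprint_gb / gpu_memory_gb)


for precision, nbytes in BYTES_PER_PARAM.items():
    footprint = weight_footprint_gb(TOTAL_PARAMS, nbytes)
    counts = {
        gpu: min_gpus_for_weights(footprint, mem)
        for gpu, mem in GPU_MEMORY_GB.items()
    }
    print(f"{precision}: {footprint:.1f} GB of weights, minimum GPUs: {counts}")
```

All 119B parameters have to be resident even though only about 6B are used per token, so the footprint is driven by the total count. Real deployments need headroom beyond these minimums, especially with long contexts where the KV cache grows large.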

Why This Release Matters

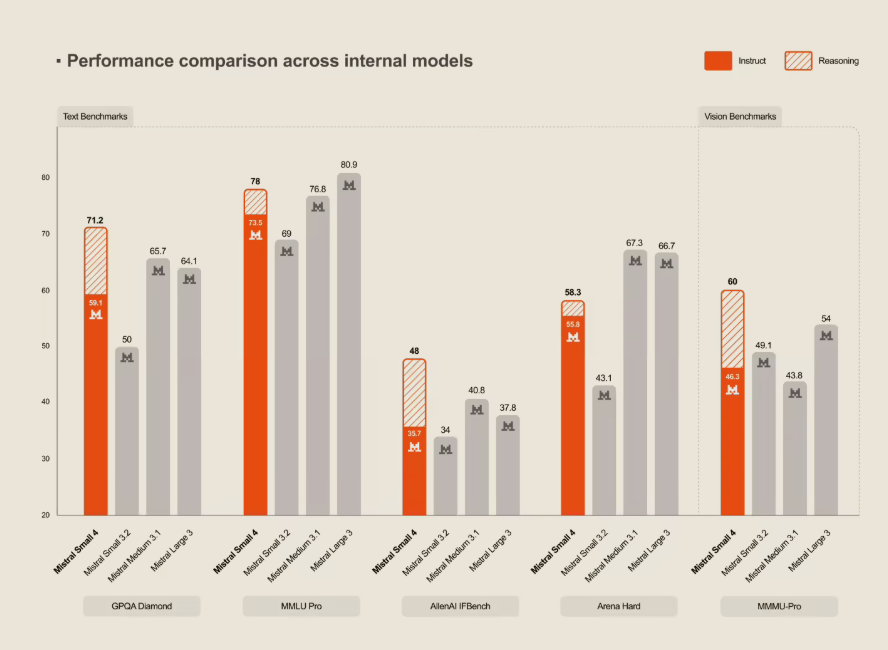

The tech community has responded enthusiastically to Mistral's commitment to open-source ideals (Apache 2.0 license) combined with cutting-edge capabilities. In benchmark tests against other leading models including OpenAI's offerings, Small4 holds its own while remaining fully accessible to developers everywhere.

As AI applications grow more complex and interconnected, tools like Small4 that eliminate specialization trade-offs will become increasingly valuable. It's not just another model release; it's a glimpse of how versatile our AI tools might soon become.

Key Points:

- First true general-purpose model from Mistral combining reasoning, multimodal, and programming capabilities

- MoE architecture (119B total params, 6B active per token) balances power with efficiency

- 256k context window handles large documents and codebases

- 40% faster than its predecessor in latency-optimized mode, with three times the requests per second in throughput-optimized mode

- Open-source under Apache 2.0 license