Microsoft Unveils Phi-4: A Nimble AI That Sees and Thinks Like Humans

Microsoft's New Phi-4 AI Blends Vision with Reasoning

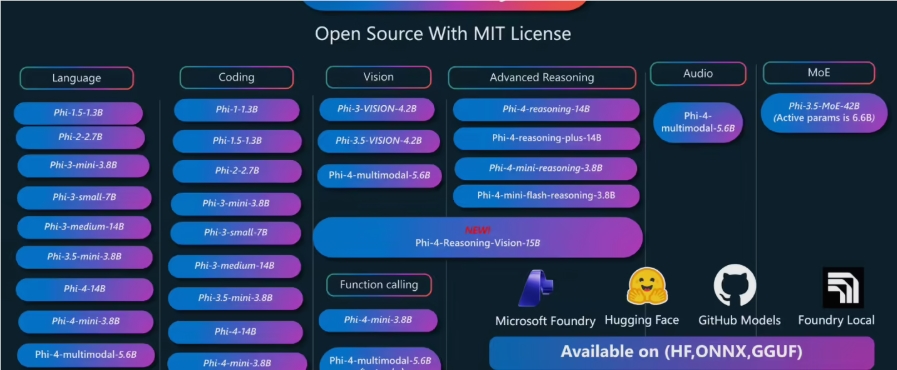

In a significant leap for artificial intelligence, Microsoft has released Phi-4-Reasoning-Vision-15B, an open-source model that combines high-resolution visual processing with multi-step reasoning. The "15B" in the name refers to its roughly 15 billion parameters, which makes it compact by current standards. The model is the latest addition to the company's Phi series.

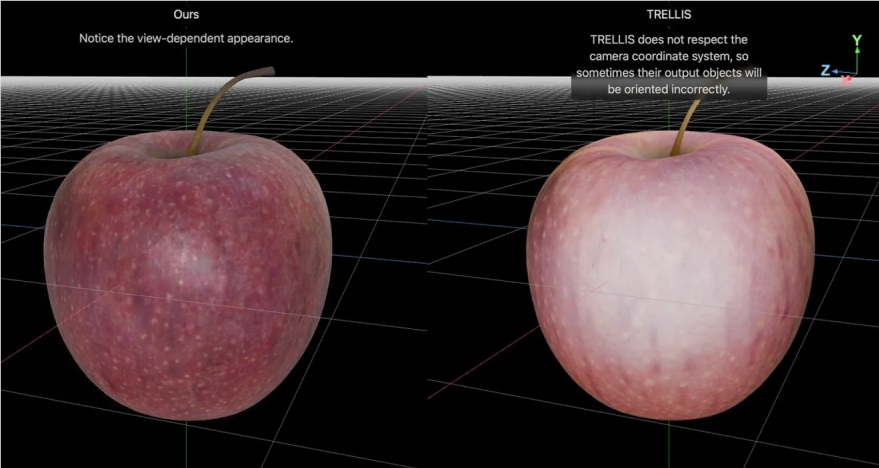

Beyond Simple Image Recognition

What sets Phi-4 apart isn't just how clearly it sees images but how it interprets them. A traditional computer vision system might identify the objects in a photo; Phi-4 goes further, analyzing the relationships between elements and drawing logical conclusions. Imagine an AI that doesn't just spot a chart in a document but understands what the data means.

"This isn't your grandfather's image recognition software," explains Dr. Lisa Chen, an AI researcher at Stanford. "Phi-4 approaches visual information the way humans do: noticing patterns, making connections, and applying context."

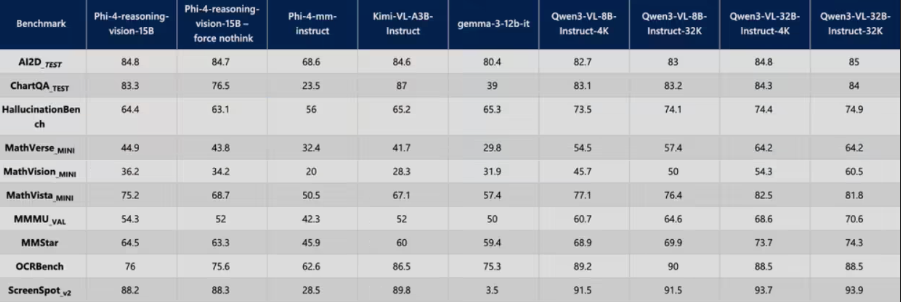

Caption: Non-reasoning mode enables quick responses for tasks like OCR

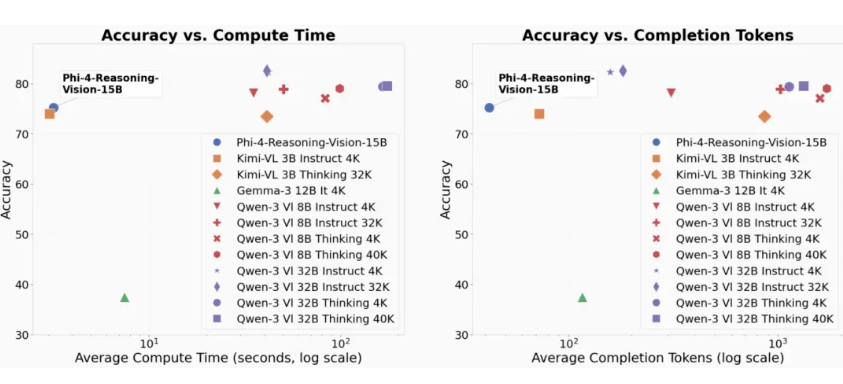

Two Brains Are Better Than One

The model's secret weapon lies in its adaptive thinking modes:

- Quick Draw Mode: For straightforward tasks like reading text or locating interface elements, Phi-4 delivers lightning-fast results.

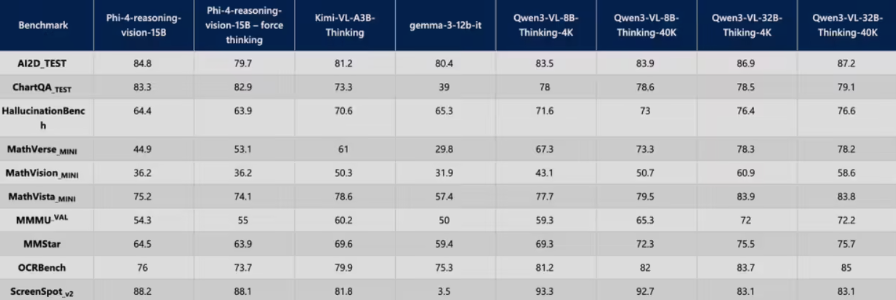

- Deep Think Mode: When faced with complex problems requiring step-by-step analysis (think math proofs or logical puzzles), the AI shifts gears to methodical reasoning.
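The article doesn't describe how a given request ends up in one mode or the other. As a minimal, hypothetical sketch, assume the calling application makes that choice from a task label. `Mode`, `select_mode`, and the task names below are illustrative only and are not part of any Microsoft API; the fast and deep groupings simply follow the examples above.

```python
from enum import Enum


class Mode(Enum):
    NON_REASONING = "non-reasoning"  # quick, direct answer (e.g. OCR)
    REASONING = "reasoning"          # multi-step analysis chain


# Hypothetical task labels, grouped according to the article's examples.
FAST_TASKS = {"ocr", "ui_grounding"}
DEEP_TASKS = {"math", "logic_puzzle"}


def select_mode(task: str, default: Mode = Mode.REASONING) -> Mode:
    """Pick a mode for a task label; unrecognized labels fall back to `default`."""
    key = task.strip().lower()
    if key in FAST_TASKS:
        return Mode.NON_REASONING
    if key in DEEP_TASKS:
        return Mode.REASONING
    return default


print(select_mode("OCR").value)   # non-reasoning
print(select_mode("math").value)  # reasoning
```

Defaulting unrecognized tasks to reasoning mode trades speed for thoroughness; an application that cares more about latency could pass `default=Mode.NON_REASONING` instead.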

This flexibility makes Phi-4 particularly valuable for:

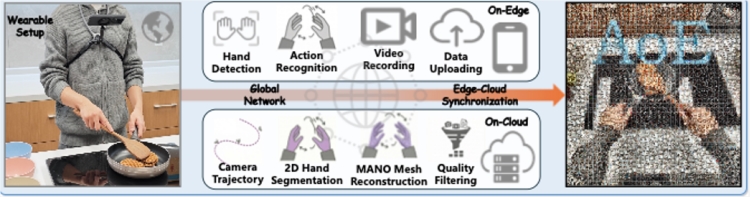

- Automated data analysis from charts and graphs

- Intelligent UI testing and interaction

- Educational tools that explain visual concepts

- Accessibility applications that describe complex images

Caption: Reasoning mode activates multi-step analysis chains

Practical Magic

The implications extend beyond technical demonstrations. Consider these real-world scenarios:

- A designer uploads a website mockup with the instruction "Make all clickable elements blue," and Phi-4 identifies the buttons and links that need to change.

- Researchers feed scientific charts into the system, and it extracts trends and relationships without manual data entry.

- Educators create interactive lessons where students can ask questions about diagrams and get intelligent responses.

The model outputs standardized coordinates for UI elements, which lets other systems act on an interface on a user's behalf: clicking buttons, scrolling pages, or filling in forms based on simple instructions.
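The article doesn't spell out the coordinate format. The sketch below assumes, as an illustration rather than a documented detail of Phi-4, that each element comes back as a bounding box whose values are fractions of the image width and height (0 to 1). Under that assumption, an automation tool would scale the box to screen pixels and, for a click, aim at its center. `BBox`, `to_pixels`, and `click_point` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BBox:
    """Bounding box with each value in [0, 1] (assumed normalized format)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def to_pixels(box: BBox, width: int, height: int) -> tuple[int, int, int, int]:
    """Scale a normalized box to integer pixel coordinates on a width x height screen."""
    for v in (box.x_min, box.y_min, box.x_max, box.y_max):
        if not 0.0 <= v <= 1.0:
            raise ValueError(f"coordinate {v} is outside [0, 1]")
    return (
        round(box.x_min * width),
        round(box.y_min * height),
        round(box.x_max * width),
        round(box.y_max * height),
    )


def click_point(box: BBox, width: int, height: int) -> tuple[int, int]:
    """Return the pixel at the center of the box, a typical click target."""
    x0, y0, x1, y1 = to_pixels(box, width, height)
    return ((x0 + x1) // 2, (y0 + y1) // 2)


# Hypothetical model output for a "Submit" button on a 1920x1080 screen.
submit = BBox(0.40, 0.80, 0.60, 0.86)
print(to_pixels(submit, 1920, 1080))    # (768, 864, 1152, 929)
print(click_point(submit, 1920, 1080))  # (960, 896)
```

Keeping the model's output resolution-independent and converting only at the last step means the same answer works on any screen size; the range check catches malformed output before it turns into a stray click.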

Key Points:

✅ Combines visual processing with contextual reasoning – a rare pairing in AI models

✅ Open-source availability lowers barriers for developer experimentation

✅ Dual-mode operation balances speed with depth as needed

✅ Particularly suited for automating interface interactions and data analysis

✅ Potential applications span education, accessibility, and design automation