Meituan's LongCat-Next Blurs the Lines Between Seeing, Hearing and Understanding

Meituan's New AI Sees the World Like We Do

Imagine an artificial intelligence that doesn't just process text, but sees images and hears sounds with the same natural fluency. That's the promise of LongCat-Next, Meituan's newly unveiled multimodal model that breaks down the artificial barriers between different types of information.

The Tech Behind the Breakthrough

At its core lies the DiNA (Discrete Native Autoregressive) architecture - think of it as giving AI a universal translator for sensory input. Here's what makes it special:

- One Brain for All Tasks: Whether analyzing a photo, transcribing speech or reading text, LongCat-Next uses identical neural pathways rather than switching between specialized modules.

- Understanding = Creating: The same mechanism that helps it comprehend a financial chart also generates new images - a symmetry that surprised even its developers.

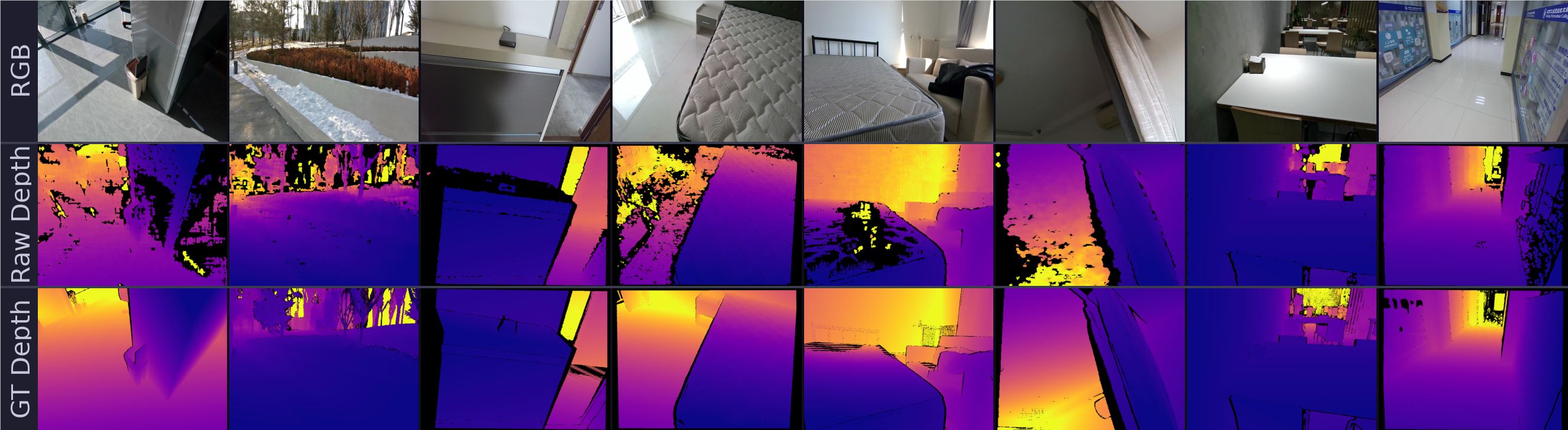

- Pixel Perfect Compression: Through an innovative technique called dNaViT, the model can shrink visual data 28-fold without losing crucial details like fine print or spreadsheet figures.
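To make the "one brain" idea concrete, here is a minimal sketch of how discrete tokens from different modalities could share a single vocabulary and a single next-token objective. It is an illustration only, not Meituan's implementation: the codebook sizes, the offset scheme and every function name below are hypothetical, and the article does not describe how DiNA actually lays out its vocabulary.

```python
# Hypothetical codebook sizes; the article gives no real vocabulary figures.
TEXT_VOCAB = 1000
IMAGE_CODES = 512
AUDIO_CODES = 256

# Assumption: each modality owns a contiguous ID range in one shared vocabulary.
SIZES = {"text": TEXT_VOCAB, "image": IMAGE_CODES, "audio": AUDIO_CODES}
OFFSETS = {
    "text": 0,
    "image": TEXT_VOCAB,
    "audio": TEXT_VOCAB + IMAGE_CODES,
}


def to_shared(modality, local_ids):
    """Map modality-local discrete codes into the shared ID space."""
    size, offset = SIZES[modality], OFFSETS[modality]
    for code in local_ids:
        if not 0 <= code < size:
            raise ValueError(f"{modality} code {code} outside [0, {size})")
    return [offset + code for code in local_ids]


def modality_of(shared_id):
    """Recover which modality a shared ID belongs to."""
    for name, offset in OFFSETS.items():
        if offset <= shared_id < offset + SIZES[name]:
            return name
    raise ValueError(f"ID {shared_id} is outside the shared vocabulary")


def build_sequence(segments):
    """Flatten (modality, codes) segments into one interleaved token stream."""
    sequence = []
    for modality, local_ids in segments:
        sequence.extend(to_shared(modality, local_ids))
    return sequence


def next_token_pairs(sequence):
    """Build (context, target) pairs for next-token prediction.

    The pairs are formed the same way whether the target is a text,
    image or audio token, which is the point of a unified objective.
    """
    return [(sequence[:i], sequence[i]) for i in range(1, len(sequence))]
```

Calling `build_sequence([("text", [5, 17]), ("image", [3, 400]), ("audio", [9])])` yields one flat list of IDs, and `next_token_pairs` treats every position identically. In this toy setup, understanding and generation differ only in which part of the stream is given and which part is predicted.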

Real-World Performance That Turns Heads

Early benchmarks suggest this isn't just theoretical:

- Outperforms specialized document analysis tools on dense financial reports

- Scores 83.1 on MathVista, a benchmark of mathematical reasoning over visual inputs

- Maintains top-tier language abilities while adding real-time speech generation

"We're moving beyond language-centric AI," explains a Meituan researcher. "When an algorithm treats vision and hearing as native capabilities rather than add-ons, everything changes."

Why This Matters Beyond the Lab

The implications stretch far beyond technical benchmarks. A unified way for AI to process reality, much like the one humans rely on, brings us closer to assistants that can:

- Instantly explain complex diagrams during video calls

- Generate reports combining verbal explanations with supporting visuals

- Develop true situational awareness in robotics

Meituan has open-sourced both the model and its visual tokenizer, inviting developers to experiment with this compact but powerful architecture. As one early tester remarked: "It's not perfect yet, but it finally feels like we're teaching machines to experience the world rather than just process it."

Key Points:

- Native Multimodality: Processes images, speech and text as equal inputs

- DiNA Architecture: Unified neural framework eliminates modality switching

- Surprising Versatility: Excels at both understanding and generation tasks

- Open Access: Model and tools available for community development