Meituan's LongCat-Next AI Now Sees and Hears Like Humans Do

Meituan Breaks New Ground With Multimodal AI That Thinks Like Humans

Meituan has launched LongCat-Next, a model that processes vision, sound and text within a single framework, much as humans process language. Released on April 3, it departs from most current AI systems, which typically handle each type of information with separate components.

The Brain Behind the Breakthrough

At the heart of LongCat-Next lies the innovative DiNA (Discrete Native Autoregressive) architecture. Think of it as giving AI a universal translator for all its senses:

- One brain for all tasks: Whether reading text, analyzing images or understanding speech, the model uses identical neural pathways instead of separate specialized modules.

- Understanding equals creating: The same process that lets it comprehend a paragraph also enables it to generate realistic images - a symmetry that boosts learning efficiency.

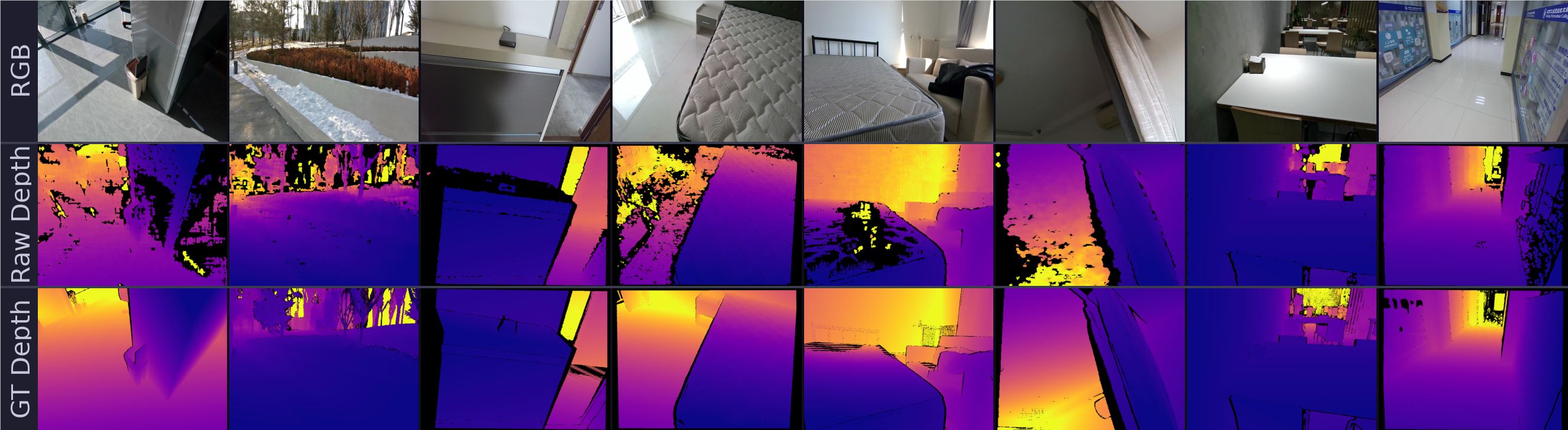

- High-ratio compression: Its dNaViT visual tokenizer compresses high-resolution images by a factor of 28 while preserving crucial details such as the text in financial reports.
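
To make the "one brain for all tasks" idea concrete, here is a minimal sketch of how a discrete autoregressive model could place tokens from every modality in one shared vocabulary and train on a single next-token objective. It is an illustration only, not Meituan's implementation: the codebook sizes, the offset scheme and every function name below are assumptions made for this example.

```python
# Hypothetical codebook sizes; the article gives no real figures.
VOCAB_SIZES = {"text": 1000, "image": 4096, "audio": 2048}


def build_offsets(sizes):
    """Assign each modality a contiguous ID range in one shared vocabulary."""
    offsets, start = {}, 0
    for name, size in sizes.items():
        offsets[name] = start
        start += size
    return offsets, start


OFFSETS, TOTAL_VOCAB = build_offsets(VOCAB_SIZES)


def to_shared_ids(modality, local_ids):
    """Map modality-local token IDs into the shared vocabulary."""
    size = VOCAB_SIZES[modality]
    for i in local_ids:
        if not 0 <= i < size:
            raise ValueError(f"{modality} token {i} out of range")
    return [OFFSETS[modality] + i for i in local_ids]


def pack_sequence(segments):
    """Concatenate (modality, local_ids) segments into one token stream."""
    stream = []
    for modality, local_ids in segments:
        stream.extend(to_shared_ids(modality, local_ids))
    return stream


def next_token_pairs(stream):
    """Build (context, target) pairs for next-token prediction."""
    return [(stream[:i], stream[i]) for i in range(1, len(stream))]


# Example: a short caption, two image tokens and one audio token.
segments = [("text", [5, 7]), ("image", [0, 4095]), ("audio", [10])]
stream = pack_sequence(segments)
print(stream)  # [5, 7, 1000, 5095, 5106]
pairs = next_token_pairs(stream)
```

Because every token lives in the same ID space, one model with one output layer can be trained to predict the next token whether that token is a word, an image patch code or an audio code. In this sketch, that is what lets understanding and generation share the same process.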

"This isn't just another incremental improvement," explains Dr. Wei Zhang, lead researcher on the project. "We're fundamentally changing how AI perceives reality by giving it something akin to human intuition."

Putting Performance to the Test

Early benchmarks suggest LongCat-Next isn't just theoretically impressive - it delivers where it counts:

- Outperformed specialized document analysis models on dense text comprehension

- Scored 83.1 on MathVista, a visual math problem-solving benchmark

- Maintained strong language capabilities (C-Eval 86.80) while adding real-time speech generation

The results challenge long-held assumptions in AI development. "We've proven that breaking information into discrete units doesn't mean losing richness," notes Zhang. "If anything, it helps different modalities enhance each other."

Why This Changes Everything

Most current AI systems are essentially language models with sensory add-ons. LongCat-Next instead aims to build perception directly into the model's foundation, which could enable:

- More natural interactions with robots and virtual assistants

- Better understanding of complex visual data like medical scans or engineering diagrams

- Potential for truly unified AI systems rather than collections of specialized tools

The team has open-sourced both the model and its visual tokenizer, inviting developers to explore applications from education to industrial automation.

Key Points:

- Native multimodality: Processes all input types through a unified architecture

- Compact yet detailed: 28x visual compression preserves fine detail such as small text

- Open-source availability: Lowers the barrier to real-world implementation

- Strong benchmark results: Outperforms specialized document analysis models on dense text and scores 83.1 on MathVista