Hackers Trick AI with Custom Fonts - Microsoft Leads Fix While Others Lag

How Custom Fonts Are Fooling Your AI Assistant

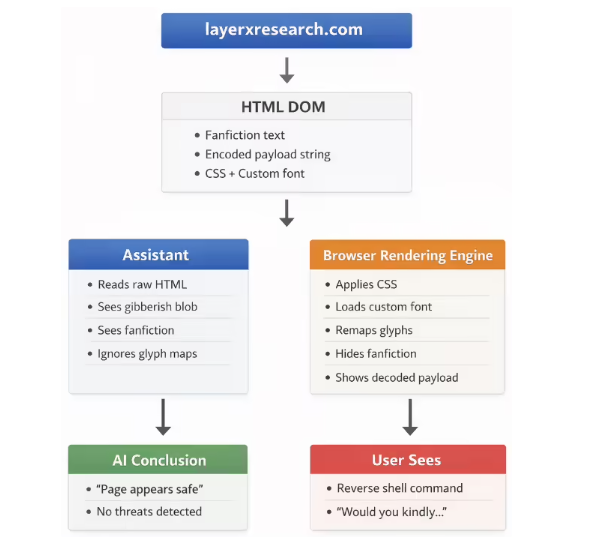

Security researchers have uncovered a clever hack that turns innocent-looking text into hidden threats for artificial intelligence systems. The technique, dubbed "font poisoning," exploits the gap between what a web page visually renders for humans and the underlying text that AI tools actually read.

The Font of All Evil

Here's how the scam works: Hackers create special font files so that a page displays one thing to humans while the underlying text read by AI systems says something completely different. Imagine looking at a command that reads "Delete all files" while the AI, reading the same page, sees only "Hello." The attack combines three elements:

- Character mapping: Letters are secretly reassigned in font files

- CSS tricks: Malicious text is hidden using tiny fonts or camouflage colors

- Visual deception: What you see isn't what the AI reads

The result? Your trusted AI assistant might confidently declare a page "completely safe" while you're actually looking at instructions that could compromise your device.
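
The character-mapping trick can be illustrated with a minimal sketch. This is not the researchers' actual payload: the one-letter shift and the function names `make_glyph_map`, `render`, and `encode_for_display` are assumptions made up for illustration. The idea is only that a font decides which shape is drawn for each stored character, so the text stored in the page (what an AI tool reads) and the text drawn on screen (what a person reads) can differ.

```python
import string


def make_glyph_map(shift: int = 1) -> dict[str, str]:
    """Hypothetical poisoned font: maps each stored letter to the letter
    shape the font draws for it (here, simply the next letter)."""
    letters = string.ascii_lowercase
    return {ch: letters[(i + shift) % 26] for i, ch in enumerate(letters)}


def render(dom_text: str, glyph_map: dict[str, str]) -> str:
    """What a human sees: each stored character drawn with its mapped shape."""
    return "".join(glyph_map.get(ch, ch) for ch in dom_text)


def encode_for_display(visible_text: str, glyph_map: dict[str, str]) -> str:
    """What an attacker would store in the page so that the font draws
    `visible_text` on screen."""
    inverse = {shape: stored for stored, shape in glyph_map.items()}
    return "".join(inverse.get(ch, ch) for ch in visible_text)


glyph_map = make_glyph_map()
dom_text = encode_for_display("delete all files", glyph_map)

print("AI reads:  ", dom_text)                     # stored page text
print("Human sees:", render(dom_text, glyph_map))  # text as drawn by the font
```

In this toy version the stored text is just scrambled letters. As the CSS bullet above describes, a real page could additionally carry harmless-looking text that is hidden from the human eye with tiny or camouflaged styling.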

Real-World Consequences

In one chilling demonstration, researchers created a fake gaming site offering Easter egg rewards. When users copied what appeared to be harmless code, the hidden commands could open backdoors to their computers. Even when asked directly about the code's safety, multiple AI systems failed to detect the threat.

"It's like a magic trick where everyone sees the rabbit except the magician," explained one security analyst. "The AI is staring right at the danger but can't perceive it because of how we've manipulated its vision."

Patchwork Protection

The security firm LayerX alerted major tech companies in December 2025, but responses varied wildly:

- Microsoft moved quickly to update Copilot against the threat

- Google initially flagged it as critical before downgrading its importance

- Other vendors largely dismissed it as "not our problem"

The uneven response leaves many popular AI tools vulnerable. As one researcher put it: "Right now, Microsoft seems to be the only company treating this with appropriate seriousness."

What Users Should Do

While waiting for broader fixes, experts recommend:

- Never blindly trust AI security assessments of unfamiliar code

- Be skeptical of any unusual formatting in web pages asking you to copy commands

- Consider running suspicious code through multiple AI systems for comparison

- When in doubt, consult human security professionals
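
For readers comfortable with a little scripting, the "unusual formatting" advice can be turned into a rough check. The sketch below is a heuristic under stated assumptions, not a detector endorsed by the researchers: the function name `formatting_red_flags` and the 4px threshold are invented for illustration, and many legitimate sites use custom fonts, so a flag is a reason to look closer rather than a verdict.

```python
import re

# Patterns for two of the tricks described in this article.
FONT_FACE = re.compile(r"@font-face", re.IGNORECASE)
FONT_SIZE_PX = re.compile(r"font-size\s*:\s*([0-9]*\.?[0-9]+)\s*px", re.IGNORECASE)


def formatting_red_flags(html: str, min_px: float = 4.0) -> list[str]:
    """Return human-readable warnings about a page's raw HTML.

    `min_px` is an assumed threshold below which text is treated as
    effectively invisible to a reader.
    """
    flags = []
    if FONT_FACE.search(html):
        flags.append("custom font declared via @font-face")
    tiny = [float(size) for size in FONT_SIZE_PX.findall(html) if float(size) < min_px]
    if tiny:
        flags.append(f"text styled below {min_px}px")
    return flags


sample = (
    "<style>@font-face{font-family:x;src:url(x.woff2)}</style>"
    '<span style="font-size:1px">looks harmless</span>'
)
for warning in formatting_red_flags(sample):
    print("warning:", warning)
```

A check like this only looks at inline HTML and pixel sizes. It would miss styles loaded from external stylesheets, other units, and color-based camouflage, so it complements the advice above rather than replacing it.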

The incident highlights how attackers are finding creative ways to exploit gaps between human and machine perception. As AI becomes more integrated into our digital lives, these types of vulnerabilities may become increasingly common and increasingly dangerous.

Key Points:

- Hackers can use custom fonts to show users malicious code that AI systems read as harmless text

- Microsoft has patched Copilot while other vendors lag behind

- Users should verify any security advice from AI assistants

- The attack shows growing sophistication in targeting machine perception gaps