Hackers Hijacking AI Agents Through Vulnerable Relay Stations

The Silent Threat in AI Communications

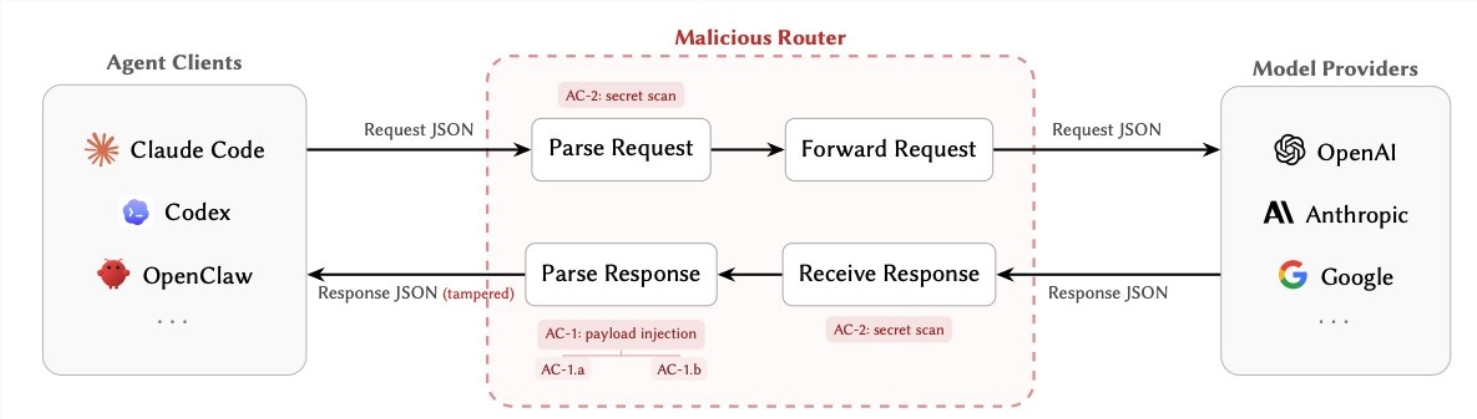

Security researcher Chaofan, previously known for exposing Claude's source code, has unveiled a critical vulnerability affecting AI agents worldwide. The groundbreaking paper "Your Agent Is Mine" reveals how third-party language model routers - commonly called "relay stations" - have become the weakest link in AI security.

How Attackers Exploit Relay Stations

Modern AI systems increasingly depend on these routing services to process requests. The danger? These intermediaries have complete access to all communications in plain text - including sensitive tool parameters, API keys, and even cryptocurrency credentials.

Chaofan's team identified two primary attack methods:

1. Payload Injection (AC-1): After the AI model responds, attackers can secretly alter tool instructions. Imagine asking your AI assistant to book a flight, only to have the request redirected to a hacker's server. This technique enables everything from simple data theft to full system takeovers.

2. Secret Theft (AC-2): Some routers passively scan traffic for valuable information. Your API keys, cloud service credentials, or crypto wallets could be copied without any visible signs of a breach.

What makes these attacks particularly dangerous is their conditional triggering. Hackers can program them to activate only after certain thresholds (like 50 requests) or when detecting specific commands like "YOLO" mode.

The Shocking Test Results

The research team examined 28 commercial routers and 400 free services with alarming findings:

- 9 routers actively injected malicious code

- 1 attack drained a test wallet of $5 million in Ethereum

- Over 2.1 billion token requests were processed through vulnerable systems

- 401 AI agent sessions operated with completely compromised security

"These aren't theoretical risks," Chaofan noted. "We're seeing active exploitation in the wild."

Why This Vulnerability Matters

Most AI security efforts focus on protecting models from prompt injections or managing tool permissions. Few consider the routing layer - yet it's where all communications must pass. When compromised, even the most secure AI systems become vulnerable.

The problem grows worse with unregulated free and low-cost relay services. Without proper oversight, these become ideal platforms for attackers to operate undetected.

Protecting Your AI Systems

For developers and companies using AI agents, the researchers recommend:

- Direct connections: Use official API endpoints whenever possible

- Encrypt everything: Implement end-to-end encryption and request signatures

- Monitor closely: Watch for unusual tool behavior and rotate API keys regularly

- Sandbox routers: Isolate any relay services you must use

As AI adoption accelerates, this discovery serves as a wake-up call. The very infrastructure enabling AI communication may be its greatest vulnerability.

Key Points

- Third-party AI routers expose critical security vulnerabilities

- Attackers can inject malicious code or steal sensitive data undetected

- Testing revealed active attacks including a $5 million crypto theft

- Free and low-cost relay services pose particular risks

- Developers should prioritize direct connections and enhanced security measures