Google's NotebookLM Now Understands Your Scribbles and Snapshots

Google's NotebookLM Gets Eyes: Image Understanding Arrives

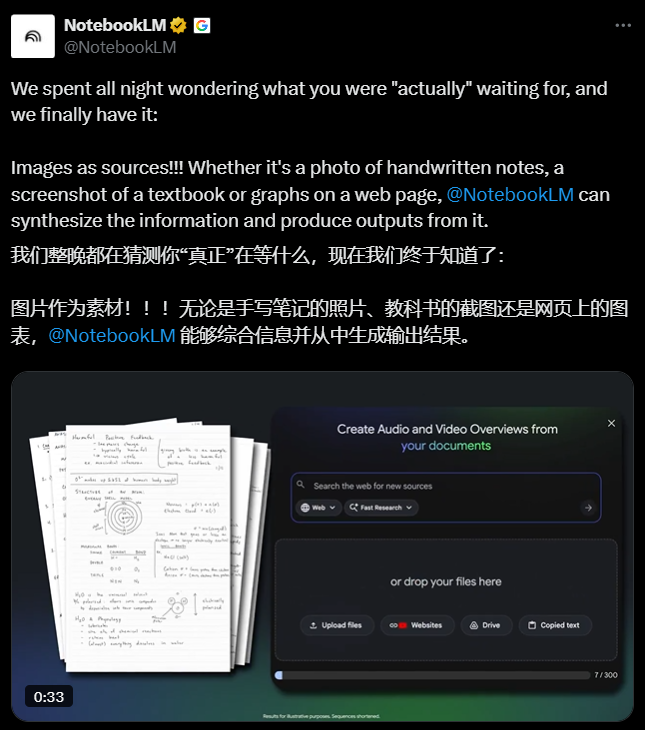

Ever snapped a photo of a whiteboard crammed with formulas, only to forget later what they meant? Google's NotebookLM aims to solve that problem. The AI-powered note-taking tool now understands images, from hastily scribbled lecture notes to textbook pages and even coffee shop menus.

How It Works

The upgraded system uses multimodal AI to perform optical character recognition (OCR) and semantic analysis on uploaded images. What sets it apart:

- Handwriting recognition that distinguishes between professors' scrawls and printed text

- Table extraction that preserves complex data structures

- Contextual linking that connects visual content with your existing notes
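Google has not published how contextual linking works internally, so the following is only a minimal sketch of the general idea: once text has been extracted from an image, it can be compared against existing notes and the closest matches surfaced. It assumes the OCR step has already produced a plain string, and it swaps in simple bag-of-words cosine similarity as a stand-in for whatever the real system uses. The function names and sample notes are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def link_image_text_to_notes(image_text, notes, top_k=2):
    """Rank existing notes by similarity to text extracted from an image.

    `notes` maps a note title to its body text (hypothetical structure).
    Returns the top_k (title, score) pairs, best match first.
    """
    image_vec = tokenize(image_text)
    scored = [
        (title, cosine_similarity(image_vec, tokenize(body)))
        for title, body in notes.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical data: pretend an OCR step already produced this string.
extracted = "Derivation of the quadratic formula by completing the square"
notes = {
    "Algebra lecture 4": "Completing the square leads to the quadratic formula",
    "Biology lab": "Stages of cell mitosis observed under the microscope",
    "Travel plans": "Restaurants to try in Bangkok",
}

for title, score in link_image_text_to_notes(extracted, notes):
    print(f"{title}: {score:.2f}")
```

Word overlap is a crude signal (common words such as "the" inflate scores), and a production system would more plausibly rely on learned embeddings, but the ranking step would look broadly similar.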

"Ask how the formula in the lower left corner was derived," suggests Google's demo, "and NotebookLM will not only find it but generate step-by-step explanations."

Real-World Magic

The implications are staggering:

- Students can photograph textbook pages and instantly query specific diagrams or values ("What does Figure 3.2 show about cell mitosis?")

- Researchers can capture conference whiteboards and later search by concept rather than trying to decipher handwriting

- Foodies can snap a restaurant menu abroad and ask "How spicy is the tom yum soup?"

The feature launched to heavy demand: educational accounts alone uploaded more than 500,000 images in the first 48 hours, a 340% increase over previous usage.

Privacy-First Approach

Image processing currently happens in the cloud, but Google promises local processing options "in coming weeks" for sensitive materials. The company hasn't announced pricing plans yet; for now, all image analysis counts against existing free quotas.

Looking ahead, an AR glasses integration slated for 2026 could enable real-time "ask anything you see" functionality, potentially revolutionizing fieldwork across industries.

Key Points:

- 📸 NotebookLM now processes images through advanced OCR and AI analysis

- ✍️ Understands both printed materials and handwritten notes with context awareness

- 🔍 Enables natural language queries about visual content ("Explain this formula derivation")

- 🚀 Education sector adoption skyrocketing with 500K+ uploads in two days

- 🕶️ AR glasses integration slated for 2026 for real-time visual queries