GitHub's New AI 'Rubber Duck' Helps Developers Spot Coding Mistakes

GitHub's AI 'Rubber Duck' Revolutionizes Code Review

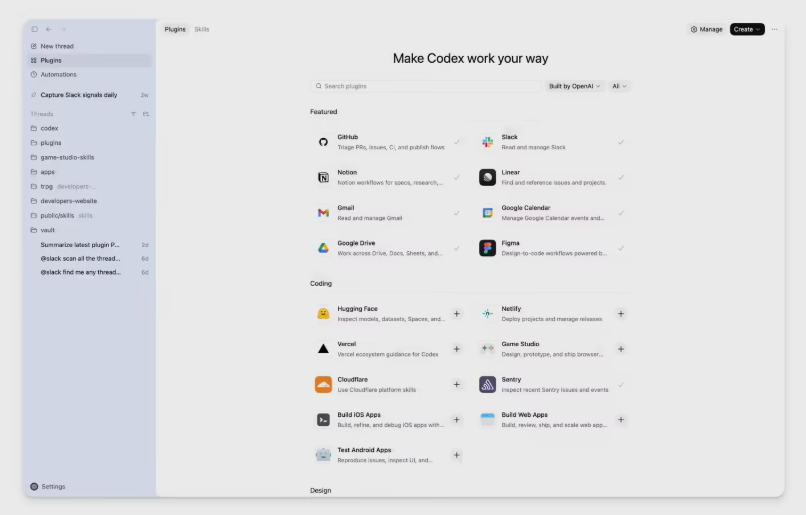

Microsoft-owned GitHub this week launched Rubber Duck, an experimental feature for its Copilot CLI that gives developers what every programmer needs: a fresh pair of eyes on their code. In this case, the eyes belong to a second AI model.

Why Programmers Need a Rubber Duck

The name comes from a common programming practice in which developers explain their code line by line to an inanimate object (traditionally a rubber duck) to spot errors. GitHub's digital version replaces the silent duck with a second AI model that reviews the work of the first.

"Early mistakes in software development often snowball into bigger problems," explains GitHub's engineering team. "Traditional self-review doesn't always catch these because we're limited by our own perspectives and training biases."

How It Works: AI Tag-Team for Better Code

The system lets developers use a Claude-series model as the primary coder, then brings in GPT-5.4 for quality control. Because the reviewer comes from a different model family, it offers a second perspective that can catch:

- Architectural logic flaws

- Loop coverage errors

- Cross-file conflicts
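The draft, review, and revise loop described above can be sketched as follows. This is a minimal illustration, not GitHub's implementation: the `Model` type, the `cross_model_review` function, the prompt wording, and the two stub models are all hypothetical, and no real model API is called.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in: a "model" is any function from prompt text to reply text.
Model = Callable[[str], str]


@dataclass
class ReviewedDraft:
    draft: str      # first attempt from the primary model
    critique: str   # feedback from the reviewing model
    final: str      # primary model's revision after reading the feedback


def cross_model_review(task: str, primary: Model, reviewer: Model) -> ReviewedDraft:
    """Let one model draft, a different model critique, and the first revise."""
    draft = primary(f"Solve this task:\n{task}")
    critique = reviewer(f"Review this solution for logic flaws:\n{draft}")
    final = primary(
        f"Task:\n{task}\n\nDraft:\n{draft}\n\n"
        f"Reviewer feedback:\n{critique}\n\nRevise the draft."
    )
    return ReviewedDraft(draft=draft, critique=critique, final=final)


# Stub models so the sketch runs without any network access.
def stub_primary(prompt: str) -> str:
    return "revised draft" if "Reviewer feedback" in prompt else "first draft"


def stub_reviewer(prompt: str) -> str:
    return "The loop skips the last element."


result = cross_model_review("Sum a list", stub_primary, stub_reviewer)
print(result.critique)
```

The point of the design is that the reviewer is a separate callable, so it can be backed by a model from a different family than the one that wrote the draft.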

In benchmark tests on SWE-Bench Pro, Claude Sonnet 4.6 paired with Rubber Duck closed 74.7% of the performance gap that existed when the model worked alone. On complex tasks, the pairing scored 3.8% above the baseline. The two figures measure different things: the first is the share of a gap recovered, the second is a gain over the baseline score.
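A "share of the gap closed" figure is a ratio rather than an accuracy score. The article does not spell out what the gap is measured against, so the sketch below assumes three scores: a baseline, a stronger reference, and the paired setup. The function name and all three numbers are made up for illustration and are not benchmark results.

```python
def gap_closed(baseline: float, reference: float, paired: float) -> float:
    """Fraction of the baseline-to-reference gap recovered by the paired setup."""
    gap = reference - baseline
    if gap <= 0:
        raise ValueError("reference must score higher than baseline")
    return (paired - baseline) / gap


# Made-up scores, NOT taken from SWE-Bench Pro.
example = gap_closed(baseline=40.0, reference=50.0, paired=47.0)
print(f"{example:.1%} of the gap closed")  # prints "70.0% of the gap closed"
```

Under this reading, a large share of the gap closed can coexist with a small absolute gain when the gap itself is narrow.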

Flexible Review Options Fit Any Workflow

Developers can choose from three review styles:

- Active mode: The system automatically requests reviews at critical moments like planning stages or complex implementations

- Passive mode: Triggers reviews when the system detects potential issues

- On-demand: Developers can request a review anytime they feel uncertain

Each review comes with clear feedback and explanations for suggested changes, making it easy to understand and implement improvements.
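One way to picture the three review styles is as a mapping from a chosen mode to the event that triggers a review. The sketch below is an assumption-laden illustration: the `ReviewMode` and `Event` names and the strict one-to-one mapping are hypothetical and do not reflect Copilot CLI's actual configuration or internals.

```python
from enum import Enum


class ReviewMode(Enum):
    ACTIVE = "active"        # review at critical moments
    PASSIVE = "passive"      # review when a potential issue is detected
    ON_DEMAND = "on_demand"  # review only when the developer asks


class Event(Enum):
    CHECKPOINT = "checkpoint"          # e.g. a plan or complex implementation is ready
    ISSUE_DETECTED = "issue_detected"  # the system flags a potential problem
    USER_REQUEST = "user_request"      # the developer explicitly asks for a review


# Assumed mapping: each mode responds to exactly one kind of event.
TRIGGERS = {
    ReviewMode.ACTIVE: Event.CHECKPOINT,
    ReviewMode.PASSIVE: Event.ISSUE_DETECTED,
    ReviewMode.ON_DEMAND: Event.USER_REQUEST,
}


def should_review(mode: ReviewMode, event: Event) -> bool:
    """Return True when the given event triggers a review in the given mode."""
    return TRIGGERS[mode] is event


print(should_review(ReviewMode.ACTIVE, Event.CHECKPOINT))     # True
print(should_review(ReviewMode.PASSIVE, Event.USER_REQUEST))  # False
```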

Getting Started with Your AI Coding Partner

The experimental feature is available now through GitHub Copilot CLI. Developers can enable it by running the /experimental command.

Key Points:

- 🤖 AI tag-team: Combines Claude and GPT models for comprehensive code reviews

- 🎯 74.7% of the gap closed: On SWE-Bench Pro, pairing Claude Sonnet 4.6 with Rubber Duck recovered most of the performance gap seen when the model worked alone

- ⚡ Flexible integration: Works automatically or on-demand to fit any development style

- 🔧 Easy activation: Available now in GitHub Copilot CLI's experimental features