Fish Audio S2 Brings Emotional Depth to AI Voices

Fish Audio S2: The Emotional Revolution in Text-to-Speech

The world of synthetic voices just got more expressive. Fish Audio's newly released S2 model represents a quantum leap in text-to-speech technology, putting nuanced emotional control directly into users' hands.

Fine-Tuned Feelings

What sets S2 apart is its granular approach to vocal emotion. Want your AI narrator to chuckle mid-sentence? Simply insert [laugh]. Need whispered urgency? Try [whispers]. The system even understands descriptive prompts like [professional broadcast tone] or [pitch up], adjusting delivery word by word.

"We're not just synthesizing speech—we're crafting personality," explains the development team behind this open-source breakthrough.

Technical Triumphs

The numbers behind S2 impress:

- 4.4 billion parameters in its flagship Pro version

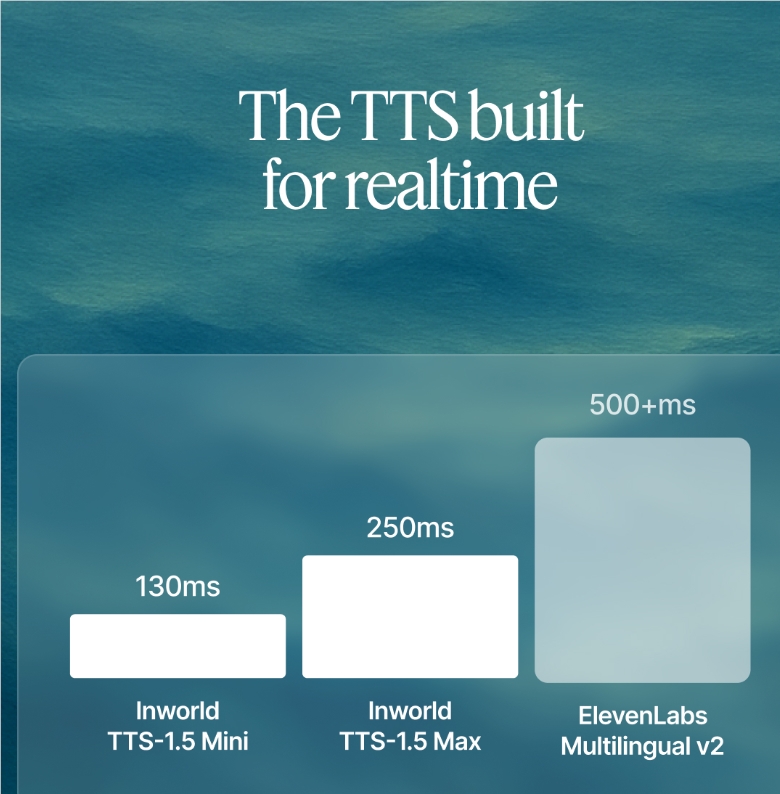

- Under 150ms latency enables real-time conversations

- Multi-speaker handling maintains voice consistency during dialogues

- 10 million training hours across 50 languages

Unlike previous models requiring post-processing for emotional effects, S2 bakes expressiveness directly into its architecture through reinforcement learning and dual autoregressive design.

Open Access Philosophy

In a refreshing move, Fish Audio has released everything:

- Model weights on GitHub

- Fine-tuning code

- Streaming inference engine via SGLang

- Hosted versions on Hugging Face

This transparency allows developers worldwide to build upon their work rather than treating advanced TTS as proprietary magic.

Practical Applications Await

The implications stretch far beyond novelty:

- Virtual assistants that actually sound engaged

- Audiobook narration with dramatic range

- Gaming characters whose emotions evolve naturally

- Accessibility tools conveying tone alongside words

The era of flat, robotic voices may finally be ending—one emotionally charged syllable at a time.