Douyin Assistant Denies Security Flaws Amid Online Controversy

Douyin Assistant Faces Security Allegations

The popular mobile assistant from ByteDance finds itself at the center of a cybersecurity storm. Recent online discussions have raised concerns about potential vulnerabilities in the Douyin Assistant platform, prompting an official response from the company.

Official Statement Addresses Concerns

On February 27, 2026, the Douyin team broke their silence regarding what they describe as "malicious hype" surrounding their product. In a carefully worded statement, company representatives dismissed the allegations as "a typical case of black PR activities."

"We've established proper channels for security reporting," a spokesperson explained, "but to date we haven't received any credible vulnerability reports concerning Douyin Assistant." The company says it strictly complies with China's "Regulations on the Management of Security Vulnerabilities in Network Products" and warns that unauthorized public disclosure of alleged flaws may violate these rules.

Examining the Viral Demonstrations

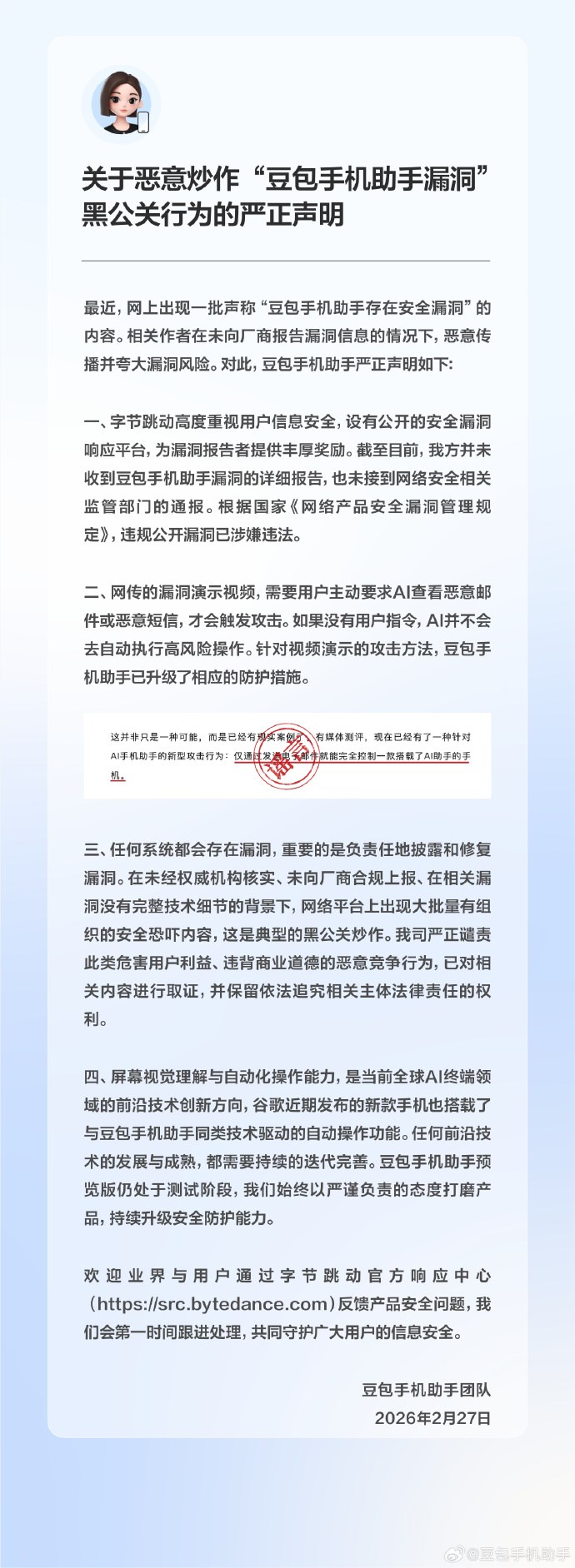

The controversy stems from videos circulating online that appear to show potential security issues. However, Douyin's technical team offers important context:

- User initiation required: All demonstrated actions require explicit user commands

- No autonomous risk-taking: The AI won't perform sensitive operations without direct instruction

- Protections already upgraded: The company claims to have addressed the specific methods shown

Legal Action Considered Against "Safety Panic"

The statement takes a particularly strong stance against what it calls "organized safety panic content" spread while alleged vulnerabilities remain unverified. ByteDance alleges this constitutes improper black PR tactics and confirms it has preserved evidence for potential legal action.

Cutting-Edge Technology Comes With Challenges

The controversy highlights broader questions about AI assistants' evolving capabilities.

Screen understanding and automated operations represent frontier technologies being adopted by smartphone manufacturers worldwide. As these features become more sophisticated, they inevitably attract both legitimate scrutiny and potential misuse.

The Douyin team emphasizes that its product remains in a testing phase, with continuous improvements planned based on user feedback and security assessments.

Key Points:

- Douyin Assistant denies existence of unaddressed security flaws

- Company calls online reports "black PR" and threatens legal action

- Viral demonstrations require active user participation

- Protective measures allegedly upgraded against shown methods

- Technology represents emerging standard in smartphone assistants