DeepSeek-V4 Arrives: A Game-Changer in AI with a Million-Token Context Window

DeepSeek-V4 Breaks New Ground in AI Capabilities

The AI landscape just got more interesting with DeepSeek's latest release. Their V4 model isn't just another incremental update: it brings capabilities previously seen only in premium, closed systems to the open-source community.

Two Models, One Mission

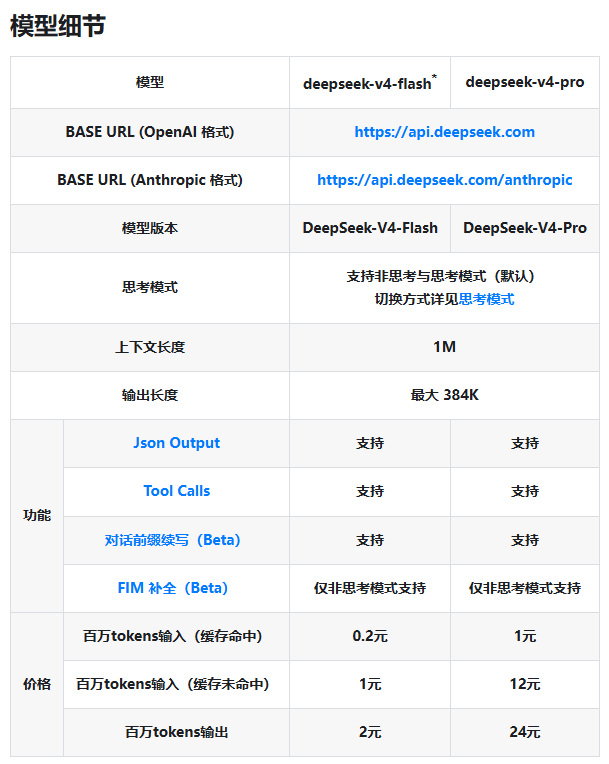

DeepSeek-V4 arrives in two distinct versions tailored for different needs:

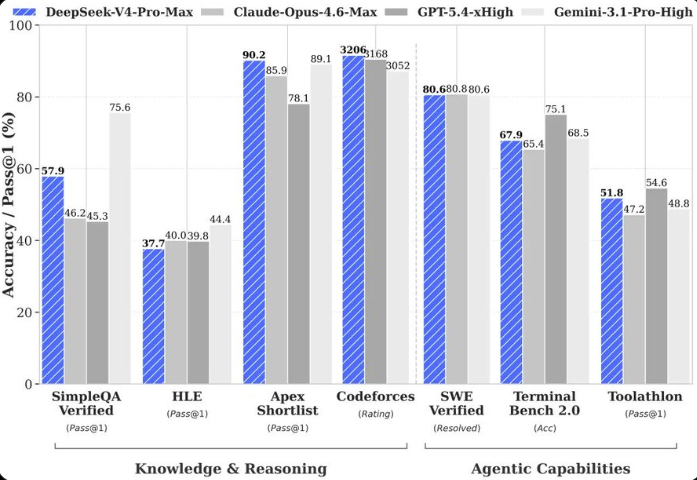

- The Brainiac (Pro Version): Packing 1.6T parameters (49B active), this powerhouse matches top closed-source models. It is particularly strong in coding tasks, where it comes close to Opus 4.6, and it outperforms all open-source competitors in math and STEM evaluations.

- The Speedster (Flash Version): With 284B parameters (13B active), this leaner model packs a surprising punch. Its knowledge base isn't as broad as the Pro's, but it keeps pace on reasoning for simpler tasks and on Agent workloads, all at a lower cost.
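Total-versus-active parameter counts are how mixture-of-experts (MoE) models are usually described: only a subset of the weights is used for each token. Assuming V4 follows that pattern (the article does not spell out the architecture), this quick calculation shows what share of each model's parameters works on any given token. The dictionary keys are just labels chosen for this sketch.

```python
# Parameter counts as reported in the article, in billions.
MODELS = {
    "V4-Pro": {"total_b": 1600, "active_b": 49},
    "V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of all parameters engaged per token.

    Assumes an MoE-style model in which 'active' means the parameters
    actually used for each token.
    """
    return active_b / total_b


if __name__ == "__main__":
    for name, p in MODELS.items():
        frac = active_fraction(p["total_b"], p["active_b"])
        print(f"{name}: {frac:.1%} of parameters active per token")
```

Running it prints about 3.1% for Pro and 4.6% for Flash, which is why a model with a very large headline size can still be comparatively cheap to run per token.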

The Secret Sauce: DSA Mechanism

The real magic lies in DeepSeek's DSA (DeepSeek Sparse Attention) mechanism. It tackles one of AI's toughest challenges: making long-context processing practical rather than prohibitively expensive. By compressing at the token level, the system dramatically cuts computational and memory demands.
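The article does not describe DSA's internals. As a rough illustration of what token-level sparsity can mean, the sketch below lets a single query attend only to its k highest-scoring keys instead of the whole context. This is a generic top-k sparse attention scheme, not DeepSeek's implementation; the function names and the value of k are hypothetical choices for this example.

```python
import math
from typing import List, Sequence, Tuple


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def topk_sparse_attention(
    query: Sequence[float],
    keys: Sequence[Sequence[float]],
    values: Sequence[Sequence[float]],
    k: int,
) -> List[float]:
    """Generic top-k sparse attention for one query (illustrative only,
    not DeepSeek's DSA)."""
    d = len(query)
    # Score every key; practical systems use a cheaper scorer for this step.
    scores = [dot(query, key) / math.sqrt(d) for key in keys]
    # Keep the indices of the k best-scoring tokens.
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    # Numerically stable softmax over the selected scores only.
    m = max(scores[i] for i in top)
    weights = [math.exp(scores[i] - m) for i in top]
    total = sum(weights)
    weights = [w / total for w in weights]
    # Weighted sum of the selected value vectors.
    out = [0.0] * len(values[0])
    for w, i in zip(weights, top):
        for j, v in enumerate(values[i]):
            out[j] += w * v
    return out


def score_counts(n_tokens: int, k: int) -> Tuple[int, int]:
    """Query-key pairs that reach softmax and value mixing, ignoring
    causal masking: dense (n * n) versus top-k (n * k)."""
    return n_tokens * n_tokens, n_tokens * k


if __name__ == "__main__":
    q = [1.0, 0.0]
    ks = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
    vs = [[10.0], [20.0], [30.0]]
    print(topk_sparse_attention(q, ks, vs, k=2))
    print(score_counts(1_000_000, 2048))
```

With a hypothetical k of 2,048 over a 1M-token context, `score_counts` gives 10^12 pairs for dense attention versus about 2.05 × 10^9 for the top-k path, roughly 488 times fewer. This sketch still scores every key to choose the top k, so the savings it shows apply only to the softmax and value-mixing stage.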

"This isn't just about technical specs," explains an industry analyst familiar with the release. "Making 1M context standard across their services removes a major barrier for developers working with large documents or complex multi-step processes."

Built for Today's AI Ecosystem

Recognizing how AI is actually being used, DeepSeek has fine-tuned V4 specifically for Agent applications like Claude Code and CodeBuddy. The model offers flexible thinking modes - from quick responses to deep analysis - controllable via an API parameter called reasoning_effort. This granular control could be a game-changer for coding and document-heavy workflows.
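A minimal sketch of how such a request might be assembled, assuming DeepSeek's OpenAI-compatible chat-completions endpoint accepts reasoning_effort as a top-level field. The model identifier and the effort levels ("low", "medium", "high", borrowed from OpenAI's naming) are hypothetical placeholders; DeepSeek's API documentation is the authority on the real values. The code only builds the request and does not send it.

```python
import json
import urllib.request

# Assumption: OpenAI-compatible endpoint; verify against DeepSeek's API docs.
API_URL = "https://api.deepseek.com/chat/completions"
# Hypothetical placeholder; the real V4 model name may differ.
MODEL = "deepseek-v4-placeholder"
# Hypothetical effort levels, borrowed from OpenAI's naming convention.
EFFORT_LEVELS = ("low", "medium", "high")


def build_request(prompt: str, effort: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat request carrying a reasoning_effort field."""
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown effort level: {effort!r}")
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,  # the control the article describes
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_request("Summarize this repository's README.", "high", "YOUR_API_KEY")
    print(req.get_method(), req.full_url)
    print(json.loads(req.data)["reasoning_effort"])
    # To actually send it: urllib.request.urlopen(req)
```

Keeping the effort level as a single request field means a client can switch between quick answers and deeper analysis per call without changing models.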

Open For Business (And Tinkering)

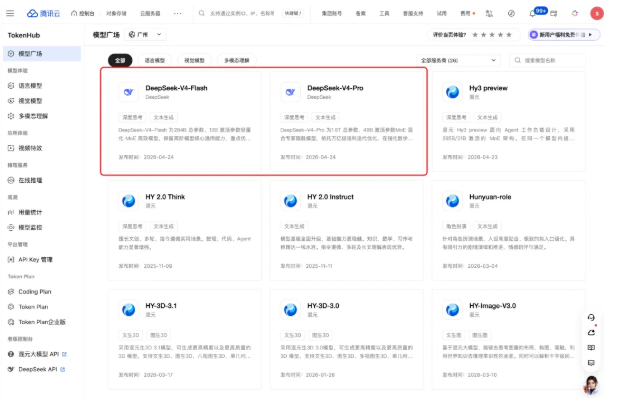

The preview is already live on DeepSeek's official platforms, with APIs updated to support the new capabilities. Notably, older model names will be phased out by July 2026.

For developers eager to dive deeper:

- Model weights are available on Hugging Face and ModelScope

- Technical documentation has been published alongside the release

This launch does more than advance DeepSeek's position: it demonstrates that open-source models can compete with the best proprietary systems in critical areas like long-context understanding and Agent functionality.

Key Points:

- Dual offering balances top-tier performance with cost efficiency

- DSA mechanism makes million-token context practical for everyday use

- Agent optimization includes adjustable thinking intensity via API controls

- Full open-source availability accelerates community innovation