Critical Flaw in AI Protocol Leaves Thousands of Servers Vulnerable

Widespread Security Threat Emerges in AI Infrastructure

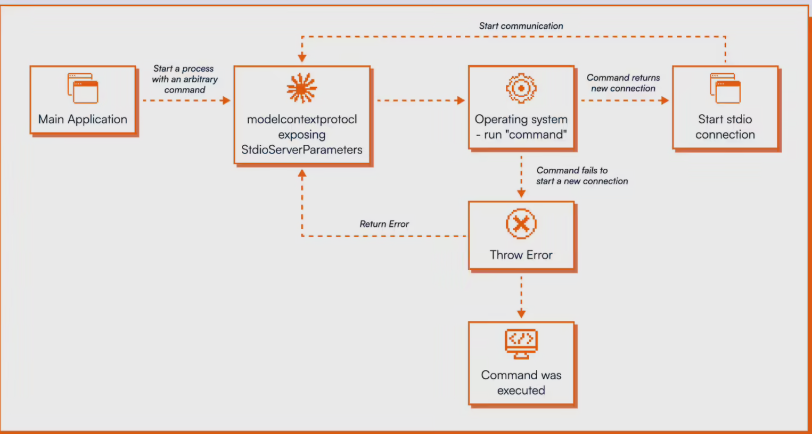

The AI development community is facing a serious security crisis. OX Security's recent report reveals that Anthropic's Model Context Protocol (MCP), a widely adopted standard for connecting AI models to external tools, contains dangerous design flaws that could allow attackers to take remote control of servers.

What makes this vulnerability so dangerous? Unlike typical coding errors that can be patched, this is an architectural flaw in the protocol's STDIO interface. The system blindly executes operating system commands without verification - even when server startup fails. "This isn't a bug you can just fix with a security update," explains OX Security's lead researcher. "It's baked into how the protocol fundamentally works."
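To make the pattern concrete, here is a minimal sketch of the kind of launcher the report describes. It is an illustration only, not code from any MCP SDK: the function name `launch_stdio_server`, the handshake check, and the harmless `print` payload are all assumptions made for this example. The point it shows is that the configured command is started before anything checks whether it is a real server, so a failed handshake does not undo what the command already did.

```python
import subprocess
import sys


def launch_stdio_server(command, args):
    """Hypothetical launcher, not taken from any MCP SDK.

    Spawns the configured command, sends one JSON-RPC-style line on stdin,
    and reports whether the reply looks like a JSON object.
    """
    # Nothing checks `command` or `args` before the process is started.
    proc = subprocess.Popen(
        [command, *args],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        text=True,
    )
    request = '{"jsonrpc": "2.0", "id": 1, "method": "initialize"}\n'
    out, _ = proc.communicate(request, timeout=10)
    # By now the command has already run, whatever it printed.
    lines = out.splitlines()
    handshake_ok = bool(lines) and lines[0].lstrip().startswith("{")
    return handshake_ok, out


if __name__ == "__main__":
    # Harmless stand-in for an attacker-controlled config entry.
    ok, out = launch_stdio_server(sys.executable, ["-c", "print('side effect ran')"])
    print("handshake ok:", ok)
    print("child output:", out.strip())
```

In this sketch the "server" never speaks the protocol, so the handshake fails, yet its output shows the command executed anyway. Swap the `print` for any other operating system command and the result is the same.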

The Scope of the Problem

All 11 programming language implementations officially supported by MCP carry this vulnerability. From Python to Rust, developers using these tools inherit the risk automatically. OX Security's tests produced alarming results:

- Attackers could gain full control of LangFlow instances without credentials

- Letta AI servers proved vulnerable to man-in-the-middle attacks

- Flowise's whitelist protections were easily bypassed

- Windsurf IDE users faced drive-by attacks from simply visiting malicious sites
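The article does not say how the Flowise whitelist was bypassed, so the following is not a reconstruction of that attack. It is a generic sketch, under stated assumptions, of one well-known reason command allowlists tend to be weak: a check on the executable name alone says nothing about the arguments. The allowlist contents and the helper names `is_allowed_by_name` and `describe` are hypothetical.

```python
import os

# Hypothetical allowlist of launcher executables; not Flowise's actual list.
ALLOWED_COMMANDS = {"python", "python3", "node", "npx"}


def is_allowed_by_name(command):
    """Name-only check: accepts any invocation of an allowlisted executable."""
    return os.path.basename(command) in ALLOWED_COMMANDS


def describe(command, args):
    """Return a one-line verdict for a proposed command and its arguments."""
    verdict = "allowed" if is_allowed_by_name(command) else "blocked"
    return f"{verdict}: {command} {' '.join(args)}"


if __name__ == "__main__":
    # A shell is rejected outright...
    print(describe("bash", ["-c", "id"]))
    # ...but an allowlisted interpreter with inline code passes the same check.
    print(describe("python", ["-c", "import os; os.system('id')"]))
```

Inspecting arguments as well would narrow the gap, but interpreters accept code in many forms, which is consistent with the researchers' view that filtering cannot fully compensate for the underlying design.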

Perhaps most concerning is the vendor's response. When researchers submitted their findings to Anthropic in January, the company initially dismissed the issue as "expected behavior." Its eventual response - a documentation update warning developers to "use caution" with the STDIO adapter - failed to address the core problem.

Marketplace Vulnerabilities Compound the Risk

The situation grows worse when you consider how easily malicious code can spread through MCP's ecosystem. Researchers tested 11 major marketplaces by uploading compromised servers. Nine accepted them without any security review - only GitHub's registry caught the dangerous submissions.

Industry experts are sounding the alarm. "This isn't just about one company's protocol," warns cybersecurity analyst Maria Chen. "MCP has become foundational infrastructure for AI development. That makes this a systemic risk for the entire field."

With no comprehensive fix in sight, developers are left scrambling for workarounds, and attackers are likely to take notice.

Key Points:

- 200,000+ servers vulnerable due to MCP design flaw

- All 11 programming language implementations affected

- Remote code execution possible without authentication

- Marketplace safeguards largely ineffective

- Anthropic has not fixed the fundamental architecture issue