Baidu Qianfan Rolls Out AI Coding Subscription Service with Multi-Model Support

Baidu's cloud AI platform Qianfan yesterday launched Coding Plan, a subscription service that gives developers access to several AI coding models under a single plan. Baidu positions it as more than another standalone tool: a bundled offering meant to support programmers through every stage of development.

Powerhouse Model Integration

The headline feature: Coding Plan brings several leading AI coding models together in one service. Developers can access GLM-4.7, DeepSeek-V3.2, and other models without managing a separate API connection or environment configuration for each one.

"We've eliminated the switching costs," explains a Baidu spokesperson. "One console gives you instant access to different models' strengths—whether you need creative solutions or bulletproof syntax."

Plug-and-Play Compatibility

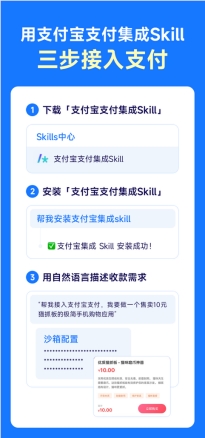

The service shines in its seamless integration with existing workflows:

- Direct compatibility with Claude Code and Cursor

- Support for OpenAI and Anthropic protocols

- Standardized interfaces requiring minimal setup

Developers can keep their current toolsets and connect them to the service rather than rebuilding their setup.
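To illustrate what OpenAI-protocol compatibility usually means in practice, here is a minimal sketch that builds (but does not send) an OpenAI-style chat completions request. The article does not publish the endpoint, key format, or model identifiers, so `BASE_URL`, `API_KEY`, and the model names `glm-4.7` and `deepseek-v3.2` below are placeholders and assumptions, not values confirmed by Baidu.

```python
import json
import urllib.request

# Hypothetical values: the article gives no real endpoint, key, or model IDs.
BASE_URL = "https://api.example.invalid/v1"  # placeholder, not a real endpoint
API_KEY = "YOUR_API_KEY"                     # placeholder credential


def build_chat_request(model, prompt, base_url=BASE_URL, api_key=API_KEY):
    """Build (without sending) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Switching models changes only the "model" field; the request shape is the same.
requests_by_model = {
    name: build_chat_request(name, "Write a function that reverses a string.")
    for name in ("glm-4.7", "deepseek-v3.2")  # assumed identifiers
}
```

If the service follows the protocol as described, a tool that already speaks this request shape would in principle need only a different base URL, key, and model name.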

Flexible Pricing That Scales With You

The subscription options cater to everyone from solo coders to enterprise teams:

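| Plan | Requests/Month | Best For |
|---|---|---|
| Lite | 18,000 | Hobbyists and solo coders |
| Pro | 90,000 | Professionals and enterprise teams |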

An introductory offer gives new subscribers their first month of the Lite plan for ¥9.9, which lowers the barrier to entry.

Why This Matters Now

The timing couldn't be better as:

- Demand for AI-assisted development continues to soar

- Developers increasingly prefer consolidated solutions over fragmented tools

- Businesses seek predictable pricing models for budgeting purposes

The large call quotas address a common pain point, running out of credits mid-project, which makes the service particularly appealing for large-scale coding tasks.
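As a rough way to judge whether a quota fits a project, the 18K and 90K monthly request figures cited for the plans can be turned into a daily budget. This is a back-of-the-envelope sketch: the 30-day month and the steady daily usage rate are simplifying assumptions, and the function names are illustrative only.

```python
def daily_budget(monthly_quota, days_in_month=30):
    """Average requests per day that stay within the monthly quota."""
    return monthly_quota // days_in_month


def full_days_covered(monthly_quota, requests_per_day):
    """Whole days of usage the quota covers at a steady daily rate."""
    return monthly_quota // requests_per_day


print(daily_budget(18_000))              # 600
print(daily_budget(90_000))              # 3000
print(full_days_covered(18_000, 1_000))  # 18
```

At a steady 1,000 requests per day, the 18K quota would run out before the end of the month, while the 90K quota would not.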

Key Points:

- Multi-model access: Switch between GLM-4.7, DeepSeek-V3.2, and other models

- Tool compatibility: Works with Claude Code and Cursor

- Scalable plans: From hobbyist (18K requests per month) to pro (90K requests per month)

- Special offer: First month trial at ¥9.9