Apple's Secret Sauce: How a Tuned Open-Source Model Outperformed GPT-5 in UI Design

Apple's UI Breakthrough: When Small Models Outsmart Giants

In a development that challenges conventional wisdom about AI scalability, Apple's research team has demonstrated how carefully tuned open-source models can outperform even the most advanced large language models in specialized tasks. Their latest focus? The notoriously subjective world of user interface design.

The UI Design Challenge

Ask any developer about their biggest headaches, and UI design consistently ranks near the top. While AI-generated code has made impressive strides, it often stumbles when creating visually appealing interfaces. The reason lies in the limitations of traditional reinforcement learning from human feedback (RLHF).

"Current methods are like trying to teach art by only saying 'I don't like this' without explaining why," explains one researcher involved in the project. "AI needs more nuanced guidance to develop what we might call 'on-point aesthetics.'"

Bringing in the Experts

Apple's solution was both simple and revolutionary: instead of relying on massive datasets of generic feedback, they engaged 21 seasoned design professionals who didn't just rate designs but actively participated in improving them. These experts:

- Provided detailed written critiques

- Created modification sketches

- Directly edited code examples

The team collected 1,460 of these expert annotations, each containing deep logical reasoning about design choices, then built a specialized reward model based on this curated feedback.
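The article does not say how the reward model was trained. As a rough illustration only, the sketch below assumes a common pairwise-preference (Bradley-Terry style) setup, in which an expert-improved design is treated as preferred over the original. The feature names, the linear scorer, and the toy data are all hypothetical and are not taken from Apple's work.

```python
import math

def score(weights, features):
    """Linear reward: a weighted sum of hypothetical design features."""
    return sum(w * x for w, x in zip(weights, features))

def pairwise_loss(r_preferred, r_rejected):
    """Bradley-Terry style loss: -log(sigmoid(r_preferred - r_rejected))."""
    diff = r_preferred - r_rejected
    # Numerically stable form of log(1 + exp(-diff)).
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))

def train(pairs, n_features, lr=0.1, epochs=200):
    """Fit the weights with plain stochastic gradient descent."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for preferred, rejected in pairs:
            diff = score(weights, preferred) - score(weights, rejected)
            # d(loss)/d(diff) = -sigmoid(-diff)
            grad = -1.0 / (1.0 + math.exp(diff))
            for i in range(n_features):
                weights[i] -= lr * grad * (preferred[i] - rejected[i])
    return weights

# Hypothetical features: [has_good_contrast, is_well_aligned].
# Each pair is (expert-improved design, original design); an assumption.
pairs = [
    ([1.0, 1.0], [0.0, 1.0]),
    ([1.0, 0.0], [0.0, 0.0]),
    ([1.0, 1.0], [1.0, 0.0]),
]
weights = train(pairs, n_features=2)
print(weights)
```

In a setup like this, the learned scorer can then rank candidate interfaces during fine-tuning. A real reward model would score rendered UIs or code rather than two hand-picked features.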

Surprising Results with Limited Data

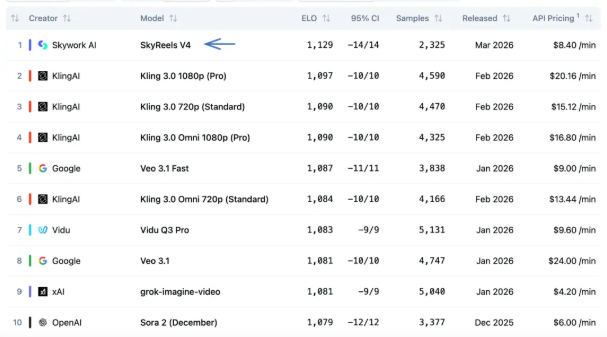

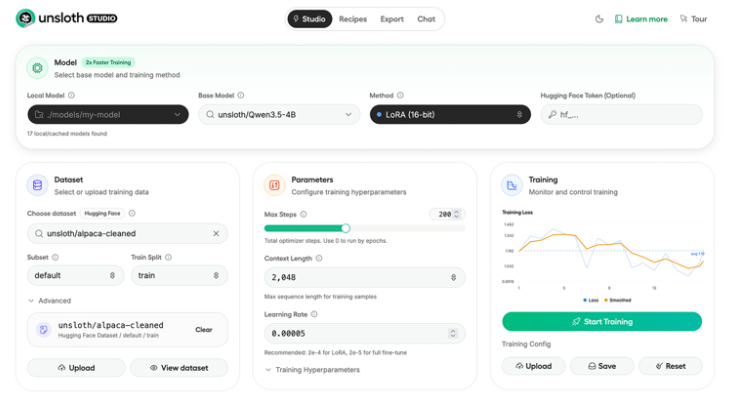

The outcome defied expectations. By fine-tuning their model with just 181 high-quality "sketch feedbacks," Apple's researchers got a result few would have predicted: their optimized Qwen3-Coder surpassed GPT-5's performance in generating app interfaces.

"This isn't about having more data," notes the research paper. "It's about having the right data. Expert-level feedback proved exponentially more valuable than mountains of generic input."

The study also revealed fascinating insights about design perception:

- Agreement between professionals and non-designers on UI quality: just 49.2% (roughly what a coin flip would produce)

- Consistency when designers provided sketch-based feedback: jumped to 76.1%
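To put the two figures side by side, the short sketch below computes a simple percent-agreement rate and the gap between the reported numbers. The `agreement_rate` helper and the sample choices are hypothetical. The article does not describe how agreement was measured, and the two percentages come from different conditions, so the comparison is only indicative.

```python
def agreement_rate(choices_a, choices_b):
    """Fraction of items on which two raters made the same choice."""
    if len(choices_a) != len(choices_b) or not choices_a:
        raise ValueError("need two non-empty lists of equal length")
    matches = sum(a == b for a, b in zip(choices_a, choices_b))
    return matches / len(choices_a)

# Hypothetical A/B picks from a designer and a non-designer.
designer = ["A", "B", "A", "A"]
non_designer = ["A", "A", "B", "A"]
print(agreement_rate(designer, non_designer))  # 0.5

# Figures reported in the article.
baseline = 0.492       # professionals vs. non-designers
with_sketches = 0.761  # designers giving sketch-based feedback

point_gain = (with_sketches - baseline) * 100
relative_gain = (with_sketches / baseline - 1) * 100
print(f"{point_gain:.1f} percentage points")  # 26.9 percentage points
print(f"{relative_gain:.1f}% relative")       # 54.7% relative
```

The difference is about 27 percentage points, or roughly 55% in relative terms.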

What This Means for Developers

The implications are profound for both AI development and practical application:

- Specialization beats scale: Carefully tuned smaller models can outperform general-purpose giants in specific domains

- Human expertise matters: Even in the AI era, professional insight provides irreplaceable value

- The future of design tools: Instead of guessing preferences, AI could soon understand visual language through sketch-based interaction

With Apple potentially integrating this technology into Xcode, we might be closer than ever to truly intuitive app development where "describe what you want" becomes enough to generate polished interfaces.

Key Points:

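- Quality over quantity: fine-tuning on just 181 expert sketch feedbacks was enough for Qwen3-Coder to surpass GPT-5 at generating app interfaces

- Sketch-based feedback brought designer consistency to 76.1%, compared with 49.2% agreement between professionals and non-designers on UI quality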

- Smaller models can excel when properly tuned for specific tasks

- UI design subjectivity quantified: professionals and non-designers agreed on UI quality only 49.2% of the time

- Future tools may use visual language understanding rather than trial-and-error