Anthropic's New AI Tool Cleans Up After 'Vibe Coding' Spree

The Double-Edged Sword of AI Coding

Developers riding the 'vibe coding' wave, generating reams of code from natural-language instructions, are discovering an inconvenient truth: speed comes at a cost. The same AI tools that accelerate development also create new quality-control headaches, as logic errors and security vulnerabilities slip past overloaded reviewers.

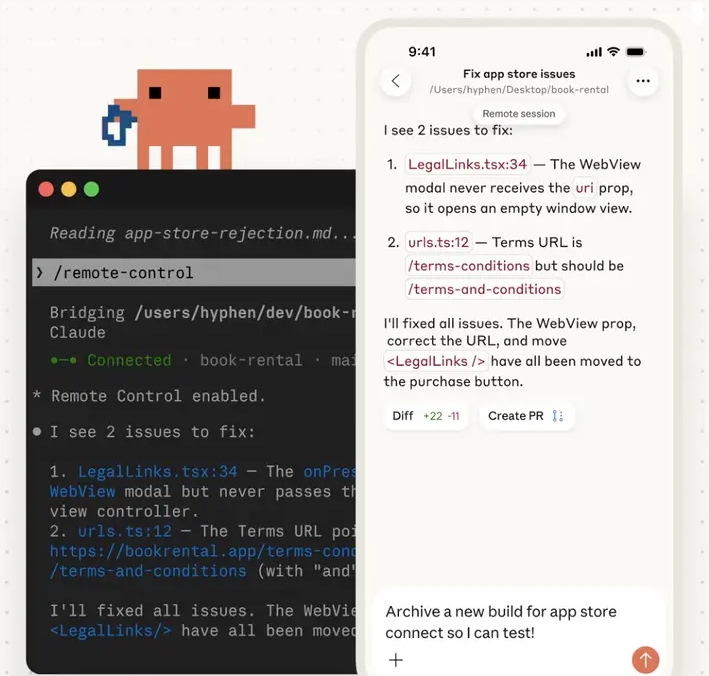

Enter Anthropic's new Code Review tool, launching today as part of the Claude for Teams and Enterprise packages. Think of it as a code detective that goes beyond style checks to examine what your code is trying to accomplish, and where it might fail spectacularly.

Why Manual Reviews Can't Keep Up

"We're seeing pull request volumes explode," explains Cat Wu, Anthropic's product lead. "Human reviewers are drowning in AI-generated code, often missing subtle but dangerous flaws because they're overwhelmed by volume."

The solution? An AI system that works like an entire engineering team distilled into software:

- Logic X-Ray: Goes beyond surface-level syntax to catch flawed algorithms before they cause midnight outages

- Risk Radar: Color-coded alerts (red for 'drop everything', yellow for 'watch this', purple for 'legacy code ghosts')

- Swarm Intelligence: Multiple specialized AI agents debate your code's merits before presenting consolidated findings

- GitHub Integration: Leaves detailed comments explaining not just what's wrong, but why it matters and how to fix it
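For readers wondering how findings from several agents might be merged into one color-coded report, here is a minimal sketch. It is not Anthropic's implementation: the `Finding` and `Severity` types, the agent names, and the rule of keeping the highest-severity finding per file and line are all assumptions made for illustration. Only the three colors come from the feature list above, and ranking purple lowest is a guess.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Colors follow the article's legend; the numeric ranking is an assumption.
    RED = 3
    YELLOW = 2
    PURPLE = 1


@dataclass(frozen=True)
class Finding:
    # Hypothetical record of one issue reported by one review agent.
    file: str
    line: int
    severity: Severity
    message: str
    agent: str


def consolidate(findings):
    """Keep the highest-severity finding per (file, line), most severe first."""
    merged = {}
    for f in findings:
        key = (f.file, f.line)
        if key not in merged or f.severity > merged[key].severity:
            merged[key] = f
    return sorted(merged.values(), key=lambda f: (-f.severity, f.file, f.line))


# Invented sample data: two agents flag the same line, a third flags old code.
findings = [
    Finding("billing.py", 42, Severity.YELLOW, "Possible off-by-one in loop bound", "logic"),
    Finding("billing.py", 42, Severity.RED, "Loop skips the final invoice", "correctness"),
    Finding("legacy/util.py", 7, Severity.PURPLE, "Pre-existing unchecked None", "history"),
]

report = consolidate(findings)
for f in report:
    print(f"[{f.severity.name}] {f.file}:{f.line} {f.message}")
```

In this sketch the two reports on `billing.py` line 42 collapse into the single red finding, so the reviewer sees one comment per location instead of one per agent.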

Who's Buying This Digital Insurance?

Early adopters read like a who's who of tech: Uber guarding against ride-hailing glitches, Salesforce securing customer data pipelines, Accenture auditing client projects. At $15 to $25 per review (cheaper than most developer hourly rates), the math becomes compelling for any team shipping critical code.

The real question isn't whether companies will adopt these tools; it's how long before AI code review becomes as standard as spellcheck. In the arms race between AI-assisted creation and AI-powered quality control, Anthropic just fired a significant shot.

Key Points:

- Anthropic launches Code Review to address quality issues in AI-generated code

- Tool focuses on deep logic flaws rather than superficial style issues

- Uses multi-agent architecture to analyze code from multiple perspectives

- Already deployed at major firms including Uber and Salesforce

- Priced at $15-$25 per review, positioned as essential QA for AI-driven development