Ant Group's Ling-mini-2.0 Boosts AI Speed and Performance

Ant Group's Ling-mini-2.0 Revolutionizes AI Efficiency

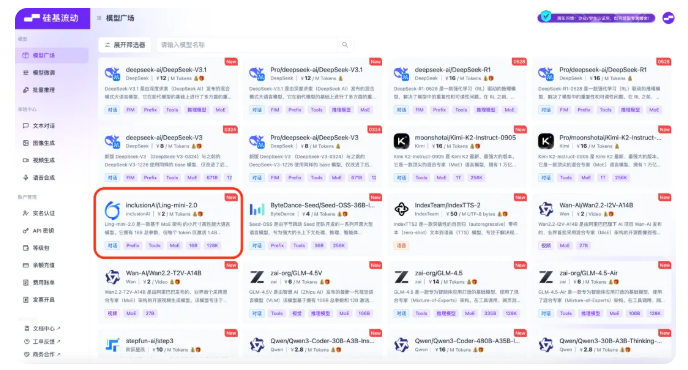

Silicon-Based Flow's large model service platform has unveiled Ling-mini-2.0, the latest open-source AI model developed by Ant Group's BaiLing team. This breakthrough demonstrates exceptional generation speed without compromising performance, representing a significant advancement in efficient AI architecture.

Technical Innovations

The model employs a sophisticated Mixture of Experts (MoE) architecture comprising 16 billion total parameters. However, its revolutionary design activates merely 1.4 billion parameters per token during generation - a technical achievement that dramatically improves processing speed while maintaining competitive performance against both:

- Traditional dense language models (300 tokens/second for sub-2000 token queries (2x faster than traditional 8B dense models)

- Achieves up to 7x acceleration with longer outputs

The Silicon Flow platform complements this technological innovation with comprehensive developer resources:

- Multiple access schemes

- Detailed API documentation

- Model comparison tools ## Key Points:

- 🧠 Efficient Architecture: 16B total parameters with only 1.4B activated per token

- 🚀 Extended Context: Supports up to 128K tokens for complex applications

- 💻 Developer Friendly: Platform offers free APIs and comparison tools