Alibaba's New AI Model Packs Big Brain Power in a Compact Package

Alibaba's Programming Powerhouse Goes Open-Source

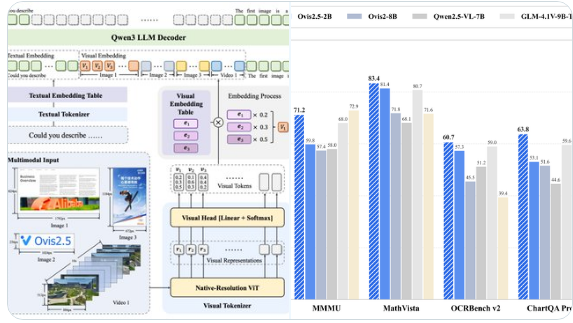

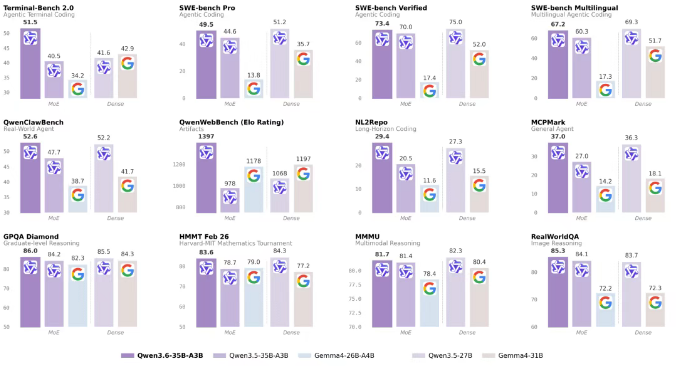

In a move that could democratize advanced AI development, Alibaba's Qwen team has released Qwen3.6-35B-A3B, its most capable open-source model yet. What makes this April 16 release special isn't just its raw power but how efficiently it delivers that power.

Small Footprint, Giant Leaps

The secret sauce? A sparse mixture-of-experts (MoE) architecture that activates just 3 billion of its 35 billion parameters for each token it processes. Think of it as a team of specialists where a router calls on only the most relevant experts for each piece of input while the rest stay idle. This approach lets the model punch above its weight: it outperforms its predecessor (Qwen3.5-27B) and even matches larger models such as Gemma4-31B in logical reasoning.
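To make the routing idea concrete, here is a minimal top-k gating sketch in plain Python. It is a toy, not Qwen's implementation: the eight experts, `k=2`, and the gate logits are made-up illustrative values, and real MoE layers use learned gate weights and route every token independently at every layer. The key point is that only the selected experts are ever called, which is where the compute savings come from.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    A softmax over just the selected logits equals taking a softmax over all
    experts and then renormalizing the top-k probabilities.
    """
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_layer(token, experts, gate_logits, k=2):
    """Weighted sum of the routed experts' outputs; unrouted experts never run."""
    out = [0.0] * len(token)
    for idx, weight in top_k_route(gate_logits, k):
        expert_out = experts[idx](token)
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out

# Hypothetical toy setup: 8 experts, each just scales its input differently.
calls = []  # records which experts actually executed

def make_expert(i):
    def expert(x):
        calls.append(i)
        return [v * (i + 1) for v in x]
    return expert

experts = [make_expert(i) for i in range(8)]
token = [1.0, 2.0]
gate_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]  # made-up router scores

y = moe_layer(token, experts, gate_logits, k=2)
print(sorted(calls))               # [1, 4]: only 2 of 8 experts ran
print([round(v, 3) for v in y])    # [4.193, 8.386]
```

Scaled up, the same principle is why a 35B-parameter model can run with roughly 3B parameters' worth of compute per token (3/35, or about 8.6%).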

"What's exciting here isn't just the benchmark numbers," observes AI researcher Dr. Li Ming (not affiliated with Alibaba). "It's that we're seeing this level of performance without the computational hunger of traditional dense models."

Beyond Coding: A Multimodal Marvel

While programming prowess grabs the headlines, Qwen3.6-35B-A3B flexes impressive visual muscles too. It scores 92.0 on RefCOCO, a visual-grounding benchmark that asks a model to locate the object a text phrase describes, approaching premium models such as Claude Sonnet 4.5. In other words, the model doesn't just write code: it can connect language to specific regions of an image, a skill that could reshape design tools and multimedia applications.
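For readers unfamiliar with RefCOCO: each example pairs an image with a phrase such as "the man on the left," and the model must output a bounding box for the referred object. Results are commonly reported as accuracy at an intersection-over-union (IoU) threshold of 0.5. The article doesn't say which RefCOCO split or exact protocol produced the 92.0, so this sketch simply assumes that standard Acc@0.5 metric; boxes are `(x1, y1, x2, y2)` tuples and the sample data is invented.

```python
def iou(box_a, box_b):
    """Intersection-over-union of two axis-aligned boxes (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def grounding_accuracy(predictions, ground_truths, threshold=0.5):
    """Percentage of predicted boxes whose IoU with the ground truth meets the threshold."""
    hits = sum(1 for p, g in zip(predictions, ground_truths) if iou(p, g) >= threshold)
    return 100.0 * hits / len(ground_truths)

# Invented example: two hits (IoU 1.0 and 0.81) and two misses (IoU 0.33 and 0.0).
preds = [(0, 0, 10, 10), (1, 1, 10, 10), (0, 0, 10, 10), (20, 20, 30, 30)]
truth = [(0, 0, 10, 10), (0, 0, 10, 10), (5, 0, 15, 10), (0, 0, 5, 5)]
print(grounding_accuracy(preds, truth))  # 50.0
```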

Developers can already access the model through Qwen Studio or Alibaba Cloud's BaiLian platform (under the name `qwen3.6-flash`). Notably, it works well with popular programming assistants, including OpenClaw and Claude Code, thanks to a `preserve_thinking` feature that maintains context across complex, multi-step tasks.
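As a rough illustration of API access, the sketch below builds (but does not send) an OpenAI-style chat-completions request using only the Python standard library. Several details are assumptions rather than facts from the article: the compatible-mode endpoint URL, the bearer-token header, and passing `preserve_thinking` as a top-level boolean in the request body. Check Alibaba Cloud's BaiLian documentation for the exact endpoint and parameter placement before relying on it.

```python
import json
import urllib.request

# Assumption: BaiLian's OpenAI-compatible endpoint; verify region and path in the docs.
ENDPOINT = "https://dashscope.aliyuncs.com/compatible-mode/v1/chat/completions"

def build_request(api_key, messages, preserve_thinking=True):
    """Build a POST request for the qwen3.6-flash model without sending it."""
    body = {
        "model": "qwen3.6-flash",  # model name as given in the article
        "messages": messages,
        # Assumption: flag name from the article; its placement here is a guess.
        "preserve_thinking": preserve_thinking,
    }
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

req = build_request(
    "YOUR_API_KEY",  # placeholder, not a real key
    [{"role": "user", "content": "Write a unit test for a binary search function."}],
)
print(req.get_method(), req.full_url)
# Sending it would be urllib.request.urlopen(req), which needs a valid key and network access.
```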

Why This Matters Now

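As AI moves toward edge computing and smarter automation, efficiency becomes paramount. Because only about 3B parameters are active per token, Qwen3.6-35B-A3B needs roughly the per-token compute of a small dense model, although all 35B weights still have to fit in memory. That makes it a practical option for developers who need capable yet lightweight tools, and its open-source release could accelerate innovation in areas from automated testing to AI pair programming.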
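The trade-off behind that efficiency claim can be checked with back-of-envelope arithmetic. This sketch assumes the rounded figures in the model's name (35B total, 3B active), counts weights only (no KV cache or activations), and uses the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass.

```python
TOTAL_PARAMS = 35e9   # total parameters (rounded, from the model name)
ACTIVE_PARAMS = 3e9   # parameters active per token (rounded)

def weight_memory_gb(num_params, bits_per_param):
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

def forward_gflops_per_token(params_used):
    """Approximate forward-pass compute: ~2 FLOPs (multiply + add) per parameter used."""
    return 2 * params_used / 1e9

for label, bits in [("bf16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB of weights")

moe = forward_gflops_per_token(ACTIVE_PARAMS)   # ~6 GFLOPs per token
dense = forward_gflops_per_token(TOTAL_PARAMS)  # ~70 GFLOPs if all 35B were used
print(f"MoE: ~{moe:.0f} GFLOPs/token vs dense-equivalent: ~{dense:.0f} GFLOPs/token")
print(f"Active fraction: {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")  # 8.6%
```

The takeaway: memory requirements scale with all 35B parameters, while per-token compute scales with the roughly 3B that are active.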

Key Points:

- 35B-parameter model that activates just 3B parameters per token

- Outperforms dense models with far more active parameters in programming tasks

- Scores 92.0 on the RefCOCO visual-grounding benchmark

- Now available via Alibaba Cloud with API support

- Open-source release could spur AI development

This isn't just another model release; it's evidence that in AI, sometimes less (activated parameters) really can be more (capability). As developers get their hands on Qwen3.6-35B-A3B, we're likely to see creative applications that push the boundaries of what "lite" AI can do.