Alibaba's New AI Model Packs a Punch with Smarter, Leaner Programming

Alibaba Takes AI Efficiency to New Heights with Qwen3.6 Release

In a move that could reshape how developers work with artificial intelligence, Alibaba's Qwen team unveiled its latest open-source model on April 16, 2026. The Qwen3.6-35B-A3B isn't just another large language model: it's built to deliver top-tier performance without the usual computational bulk.

Small Footprint, Big Results

What makes this release stand out? The numbers tell the story. The model has 35 billion parameters in total, but its sparse mixture-of-experts (MoE) architecture activates only about 3 billion of them for each token it processes. Think of a large team of specialists where only the few relevant ones are called in for any given task.
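To make the idea of sparse activation concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Qwen's actual router: the expert count, the value of `K`, and the toy expert functions are hypothetical, chosen only to show that a few experts run per token while the rest stay idle. The last lines work out the active share implied by the article's 3B and 35B figures, which comes to roughly 8.6%.

```python
import math
import random


def top_k_route(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, experts, gate_logits, k):
    """Combine the outputs of only the k selected experts."""
    routes = top_k_route(gate_logits, k)
    output = sum(weight * experts[i](x) for i, weight in routes)
    return output, [i for i, _ in routes]


# Hypothetical toy setup: 8 experts, 2 active per token.
NUM_EXPERTS, K = 8, 2
experts = [lambda x, scale=s + 1: x * scale for s in range(NUM_EXPERTS)]
rng = random.Random(0)
gate_logits = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]

y, used = moe_forward(1.0, experts, gate_logits, K)
print(f"experts used for this token: {used} (of {NUM_EXPERTS})")

# Headline figures from the article, in billions of parameters.
TOTAL_PARAMS_B = 35
ACTIVE_PARAMS_B = 3
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
print(f"active share of parameters: {active_fraction:.1%}")
```

In a real MoE model the gate scores come from a learned layer and the experts are feed-forward blocks, but the cost argument is the same: compute per token scales with the active parameters, not the total.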

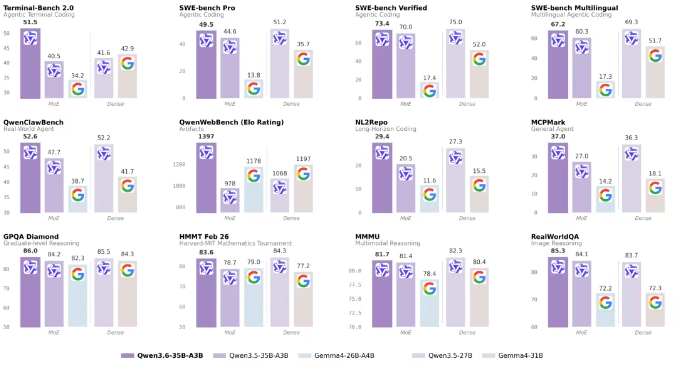

Performance-wise, it outshines its 27-billion-parameter sibling Qwen3.5-27B while using far less computing power. It also clearly beats its predecessor, Qwen3.5-35B-A3B, and matches models that activate far more parameters, such as Gemma4-31B, on complex reasoning and multi-agent collaboration.

Beyond Text: A Multimodal Marvel

The model isn't just about processing words. As a fully multimodal system, Qwen3.6-35B-A3B demonstrates strong spatial intelligence and visual perception skills. It scores 92.0 on RefCOCO, a benchmark for locating objects in images from text descriptions, and some of its capabilities rival Anthropic's Claude Sonnet 4.5.

Developers can already access the model through Qwen Studio and Alibaba Cloud's BaiLian platform via the qwen3.6-flash API. The service includes handy features like preserve_thinking for maintaining thought chains, and works with popular coding assistants including OpenClaw, Claude Code, and Qwen Code.
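For readers who want a feel for what a call might look like, the sketch below builds (but does not send) a chat request for the qwen3.6-flash model using only the Python standard library. Several details are assumptions rather than anything confirmed here: that the model is reachable through Alibaba Cloud's OpenAI-compatible endpoint at the URL shown, that preserve_thinking is passed as a top-level boolean in the request body, and the helper name `build_chat_request`, which is purely illustrative. Check the platform's documentation before relying on any of it.

```python
import json
import urllib.request

# Assumption: Alibaba Cloud's OpenAI-compatible endpoint serves this model.
BASE_URL = "https://dashscope.aliyuncs.com/compatible-mode/v1"


def build_chat_request(api_key, prompt, preserve_thinking=True):
    """Build an HTTP request for a single-turn chat completion (hypothetical helper)."""
    payload = {
        "model": "qwen3.6-flash",
        "messages": [{"role": "user", "content": prompt}],
        # Assumption: the flag's placement and type are not confirmed;
        # it is shown here as a top-level boolean for illustration only.
        "preserve_thinking": preserve_thinking,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("YOUR_API_KEY", "Rewrite this recursive function as a loop.")
print(req.get_method(), req.full_url)
print(json.dumps(json.loads(req.data), indent=2))

# To actually send it (requires a valid key and network access):
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp))
```

Building the request separately from sending it keeps the example runnable offline and makes the payload easy to inspect before any credentials are involved.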

Why This Matters Now

As demand grows for AI that can run efficiently on edge devices and power automated agents, Alibaba's release hits a sweet spot. It offers the performance developers need without the power hunger that often comes with large models. This could be the beginning of a shift toward more practical, sustainable AI solutions that don't sacrifice capability for efficiency.

Key Points:

- Efficient architecture: Activates only about 3B of its 35B parameters during use

- Strong performance: Outperforms larger models at lower computational cost

- Visual intelligence: Scores 92.0 on RefCOCO, rivaling top competitors

- Ready to use: Available now through Alibaba Cloud services

- Future potential: Could redefine expectations for lightweight AI models