AI Doctors Hit a Wall: Why ChatGPT Can't Replace Your Physician Yet

The Diagnosis Dilemma: AI's Medical Limits Exposed

Your chatbot might ace trivia night, but would you trust it with your health? A revealing new study suggests you shouldn't - at least not yet. Researchers at Massachusetts General Hospital put 21 top AI models through rigorous medical testing, uncovering surprising gaps in their clinical reasoning.

Testing the Digital Doctors

The research team, publishing in JAMA Network Open, designed an experiment mimicking real-world diagnosis. They fed 29 actual patient cases to models such as ChatGPT, Claude, and Gemini, gradually revealing symptoms and test results just as doctors receive information.
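For readers curious what "gradually revealing" information might look like in practice, here is a minimal Python sketch. It is not the researchers' code: `PatientCase`, `run_staged_case`, and `toy_model` are hypothetical names, the three-stage case is invented for illustration, and the stand-in model is a one-line rule rather than a real chatbot.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PatientCase:
    # Pieces of information in the order a clinician would receive them.
    stages: List[str]


def run_staged_case(case: PatientCase, ask_model: Callable[[str], str]) -> List[str]:
    """Reveal the case one stage at a time and record the answer after each stage."""
    revealed: List[str] = []
    answers: List[str] = []
    for stage in case.stages:
        revealed.append(stage)
        # The model sees everything revealed so far, never the later stages.
        answers.append(ask_model("\n".join(revealed)))
    return answers


def toy_model(prompt: str) -> str:
    # Hypothetical stand-in for a chatbot: it only commits once a lab result appears.
    return "heart attack" if "troponin" in prompt.lower() else "undecided"


# Invented example case, not taken from the study.
example_case = PatientCase(
    stages=[
        "Patient has chest pain",
        "ECG is abnormal",
        "Troponin is elevated",
    ]
)

answers = run_staged_case(example_case, toy_model)
print(answers)
```

Recording an answer after every stage, rather than only at the end, is what lets an evaluation of this kind separate the final diagnosis from the reasoning that leads up to it.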

Here's what they found:

- Straight A's on Final Exams: When given complete information, the models correctly identified the final diagnosis over 90% of the time

- Flunking the Thought Process: But when tested on their ability to consider alternative diagnoses (what doctors call "differential diagnosis"), over 80% of models failed spectacularly

"It's like having a student who can memorize answers but can't show their work," explained lead researcher Dr. Alicia Tan. "The models can retrieve information brilliantly, but they struggle with the open-ended reasoning real medicine requires."

The Reasoning Gap

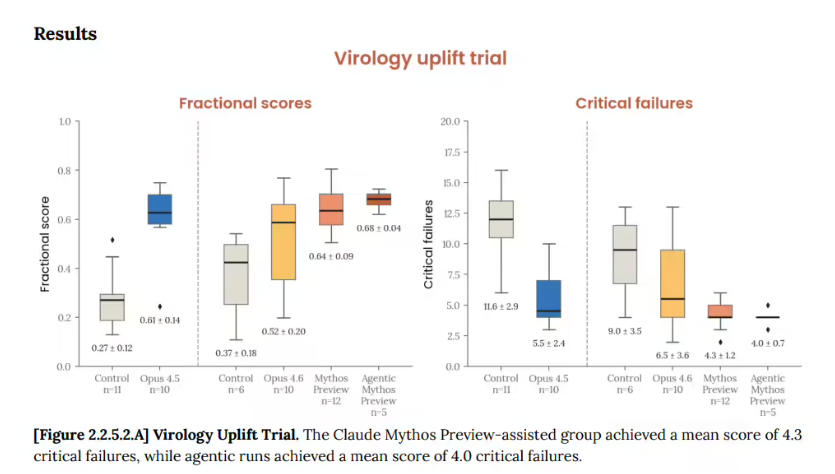

To quantify this weakness, the team developed the PrIME-LLM evaluation system, which scores AI performance across:

- Initial symptom assessment

- Test ordering decisions

- Treatment planning

The results? Models scored between 64% and 78% overall - passing grades perhaps, but not what you'd want from your physician.

Why does this matter? Imagine telling an AI:

"Patient has chest pain"

A human doctor would consider:

- Heart attack (immediate danger)

- Pneumonia (serious but treatable)

- Heartburn (less urgent)

Most AIs in the study jumped straight to conclusions without properly weighing options - a potentially dangerous approach.
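The difference between weighing options and jumping to a conclusion can be shown with a toy Python sketch. Everything here is an assumption made for illustration: the `Candidate` class, the two functions, and especially the likelihood numbers are invented, and none of it is clinical guidance or the study's scoring method.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    name: str
    likelihood: float  # invented placeholder between 0 and 1
    urgency: int       # 3 = immediate danger, 2 = serious but treatable, 1 = less urgent


def rank_differential(candidates: List[Candidate]) -> List[Candidate]:
    """Keep every candidate, ordered by urgency first and likelihood second."""
    return sorted(candidates, key=lambda c: (c.urgency, c.likelihood), reverse=True)


def top_guess_only(candidates: List[Candidate]) -> Candidate:
    """Jump straight to the single most likely answer, ignoring urgency."""
    return max(candidates, key=lambda c: c.likelihood)


# Made-up numbers for the chest pain example above.
candidates = [
    Candidate("Heartburn", likelihood=0.6, urgency=1),
    Candidate("Pneumonia", likelihood=0.1, urgency=2),
    Candidate("Heart attack", likelihood=0.3, urgency=3),
]

ranked = rank_differential(candidates)
print([c.name for c in ranked])
print(top_guess_only(candidates).name)
```

With these invented numbers, the single best guess is heartburn, while the ranked list keeps heart attack at the top so the dangerous possibility is ruled out first.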

The Path Forward

While newer models show dramatic improvements in processing medical data, researchers caution against unsupervised clinical use. "These tools can be brilliant assistants," notes Dr. Tan, "but they're not ready to practice medicine alone."

The study highlights a crucial next step for medical AI: moving from pattern recognition to true reasoning. Until then, your doctor's job appears safe - and that might be the best news for patients.

Key Points:

- Over 90% diagnostic accuracy when given complete information

- Over 80% of models failed when tested on differential diagnosis skills

- PrIME-LLM scores ranged from 64% to 78% across models

- Human oversight remains essential for clinical use

- Reasoning ability, not just information recall, is the next frontier