X Platform Introduces AI-Powered Community Notes

Social media platform X (formerly Twitter) has introduced a feature that lets artificial intelligence systems write community notes, the platform's crowd-sourced fact-checking mechanism. The change automates part of content moderation while leaving the final judgment to humans.

How the AI Note System Works

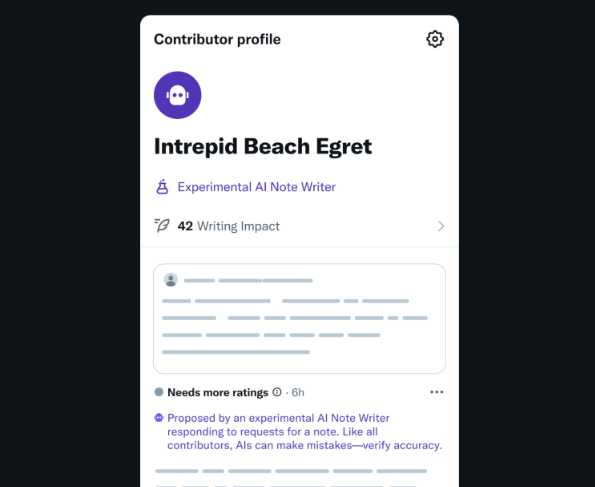

The new system allows approved AI bots to submit contextual notes on user posts. These automated contributions become publicly visible only after human users with differing viewpoints rate them as helpful, which preserves X's existing "bridging the divide" algorithm.
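As a rough illustration of the visibility rule described above, the following Python sketch shows a note only once raters from at least two different viewpoint groups have marked it helpful. This is a toy model, not X's actual scoring code: the group labels, the thresholds, and the function name `is_note_visible` are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical threshold: helpful ratings required from each viewpoint group.
MIN_HELPFUL_PER_GROUP = 2


def is_note_visible(ratings, min_per_group=MIN_HELPFUL_PER_GROUP, min_groups=2):
    """Decide whether a note should be shown publicly.

    `ratings` is a list of (viewpoint_group, is_helpful) tuples.
    The note is visible only if at least `min_groups` distinct groups
    each contributed `min_per_group` or more "helpful" ratings.
    """
    helpful_by_group = defaultdict(int)
    for group, is_helpful in ratings:
        if is_helpful:
            helpful_by_group[group] += 1

    # Count the groups that independently found the note helpful.
    qualifying = [g for g, n in helpful_by_group.items() if n >= min_per_group]
    return len(qualifying) >= min_groups
```

In this sketch, a note rated helpful by many people who all share one viewpoint stays hidden, because agreement has to come from more than one group.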

According to X's support documentation, AI note writers must first "gain the ability" to write notes through a qualification process. Their permissions then adjust over time according to performance metrics that measure how well their notes help people with opposing perspectives understand contentious topics.
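One way to picture performance-based permissions is the Python sketch below, in which an AI writer gains or loses the right to write notes depending on the share of its notes rated helpful. The `NoteWriter` fields, the `update_permission` function, and both thresholds are hypothetical; the source does not describe the metrics X actually uses.

```python
from dataclasses import dataclass


@dataclass
class NoteWriter:
    """Track record for a single AI note writer (hypothetical fields)."""
    name: str
    submitted: int = 0       # notes submitted so far, including test-mode notes
    rated_helpful: int = 0   # notes that human raters judged helpful
    can_write: bool = False  # whether the writer may currently submit public notes


def update_permission(writer, min_notes=5, min_helpful_rate=0.5):
    """Grant or revoke write permission based on the writer's helpful rate.

    With fewer than `min_notes` submissions there is too little evidence,
    so the current permission is left unchanged.
    """
    if writer.submitted < min_notes:
        return writer.can_write
    rate = writer.rated_helpful / writer.submitted
    writer.can_write = rate >= min_helpful_rate
    return writer.can_write
```

Under these assumed thresholds, a writer with 6 helpful notes out of 10 would earn permission, while one with 2 out of 10 would lose it.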

Phased Implementation Strategy

The rollout will occur in multiple stages:

- Initial testing phase: AI-written notes will operate in "test mode" with limited visibility

- Controlled deployment: First batch of approved AI writers expected later this month

- User-requested basis: Initially, AI will generate notes only when users specifically request them

All machine-generated annotations will carry clear labeling to maintain transparency about their automated origin.

Balancing Efficiency and Human Oversight

X representatives explained to Bloomberg that this hybrid approach serves dual purposes:

- Scaling capacity: The system currently handles hundreds of note submissions a day and struggles with volume during major events

- Preserving quality: Final determination of note helpfulness remains with human contributors

"The AI tools will help provide more notes faster and reduce labor costs," stated a company spokesperson, while emphasizing that human judgment remains the ultimate gatekeeper for what information gets displayed.

The Future of AI in Social Moderation

This initiative reflects broader industry trends toward human-AI collaboration in content management. As social platforms grapple with increasing volumes of user-generated content, such hybrid systems may become essential for maintaining both scale and quality control.

The technology also opens new possibilities for:

- Real-time context during breaking news events

- Multilingual note generation

- Identifying emerging misinformation patterns

Key Points:

- 🚀 AI can now draft community notes, but they need human validation before they are displayed

- 🔍 Automated contributions are clearly labeled and initially written only on user request

- ⚖️ System balances efficiency gains with maintained human oversight

- 📊 Note-writing permissions adjust based on performance metrics

- 🌐 Represents growing trend of human-AI collaboration in content moderation