OpenAI Introduces Parental Controls for ChatGPT Safety

OpenAI Enhances ChatGPT with Parental Controls and Safety Features

OpenAI has unveiled a new safety routing system and parental controls for ChatGPT. The updates aim to improve how the chatbot handles sensitive topics and to give parents tools to oversee how their teens use it.

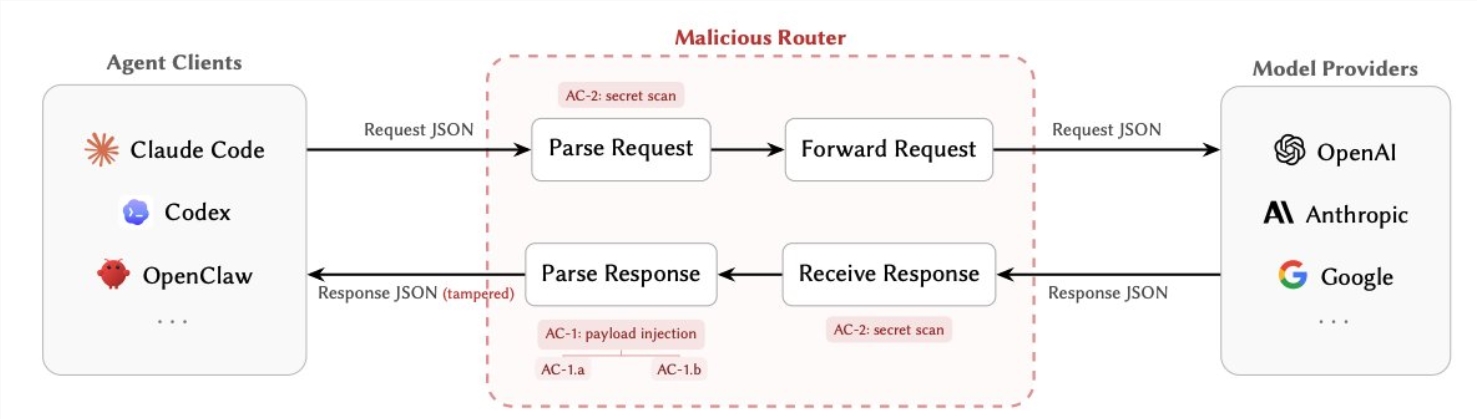

New Safety Routing System

The core of the update is a safety routing mechanism that detects emotionally sensitive or potentially harmful conversations. When triggered, the system automatically switches to OpenAI's GPT-5 model, which includes a "safe completion" feature designed to give a careful, balanced answer instead of refusing outright. The shift aims to reduce instances of "AI delusion," where a model is so accommodating that it goes along with risky prompts.
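OpenAI has not published how the router works internally, so the following is only a minimal sketch of the general idea: inspect each message and pick a model for that one turn. The model name `default-model`, the keyword list, and the functions `is_sensitive` and `route` are all hypothetical; a production system would rely on a trained classifier rather than keyword matching.

```python
# Hypothetical names throughout; this is not OpenAI's implementation.
DEFAULT_MODEL = "default-model"   # placeholder for whatever model the user selected
SAFETY_MODEL = "gpt-5"            # the article says sensitive turns are routed to GPT-5

# Stand-in for a real classifier: a short list of illustrative phrases.
SENSITIVE_TERMS = ("self-harm", "suicide", "hurt myself")


def is_sensitive(message: str) -> bool:
    """Toy detector that flags a message if it contains any listed phrase."""
    text = message.lower()
    return any(term in text for term in SENSITIVE_TERMS)


def route(message: str) -> str:
    """Choose a model for this single message; the choice does not persist."""
    return SAFETY_MODEL if is_sensitive(message) else DEFAULT_MODEL
```

Because `route` is evaluated per message, the next ordinary message goes back to the default model, which mirrors the description of the switch as temporary.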

Nick Turley, head of ChatGPT applications, clarified on social media that model switching is temporary, and users can check which model is active at any time. While many experts applaud the initiative, some users argue the changes treat adults like children.

Parental Control Features

The newly introduced parental controls allow guardians to:

- Set quiet hours for teen accounts.

- Disable voice mode and memory functions.

- Block image generation capabilities.

- Enable additional content filters to minimize exposure to violence or self-harm-related material.
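The controls listed above can be pictured as a small settings record attached to a teen account. The sketch below is illustrative only: the class `TeenAccountControls` and its field names are assumptions, not OpenAI's schema. It also shows one detail that any quiet-hours feature has to handle, a window that crosses midnight.

```python
from dataclasses import dataclass
from datetime import time
from typing import Optional


@dataclass
class TeenAccountControls:
    """Hypothetical settings record; field names are illustrative."""
    quiet_start: Optional[time] = None      # e.g. time(21, 0)
    quiet_end: Optional[time] = None        # e.g. time(7, 0)
    voice_mode_enabled: bool = True
    memory_enabled: bool = True
    image_generation_enabled: bool = True
    extra_content_filters: bool = False

    def in_quiet_hours(self, now: time) -> bool:
        """Return True if `now` is inside the quiet window (start inclusive, end exclusive)."""
        if self.quiet_start is None or self.quiet_end is None:
            return False  # no quiet hours configured
        if self.quiet_start <= self.quiet_end:
            # Window within a single day, e.g. 13:00 to 15:00.
            return self.quiet_start <= now < self.quiet_end
        # Window that wraps past midnight, e.g. 21:00 to 07:00.
        return now >= self.quiet_start or now < self.quiet_end
```

For example, a guardian who sets quiet hours from 21:00 to 07:00 would construct `TeenAccountControls(quiet_start=time(21, 0), quiet_end=time(7, 0))`, and `in_quiet_hours(time(23, 30))` would then return `True`.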

If the system detects potential harm, an OpenAI team reviews the flagged content and notifies parents by email, SMS, or push notification, unless they have opted out. The company is also exploring ways to alert emergency services directly if guardians cannot be reached.
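One way to picture the notification step is as a fallback chain over the three channels. The article does not say whether the channels are tried in sequence or all at once, so the ordering here is an assumption, and `notify_guardian` and its parameters are hypothetical names. The sketch assumes human review has already confirmed the flag.

```python
from typing import Callable, Dict, Optional

# Channels named in the article; trying them in this order is an assumption.
CHANNELS = ["email", "sms", "push"]


def notify_guardian(
    senders: Dict[str, Callable[[str], bool]],
    opted_out: bool,
    message: str,
) -> Optional[str]:
    """Try each available channel in order.

    Returns the name of the first channel whose sender reports success,
    or None if the guardian opted out or no channel succeeded.
    """
    if opted_out:
        return None
    for channel in CHANNELS:
        sender = senders.get(channel)
        if sender is not None and sender(message):
            return channel
    return None
```

A `None` result for a guardian who has not opted out corresponds to the "cannot be reached" case, which is where the emergency-service option under consideration would come in.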

Mixed Reactions

The rollout has sparked debate:

- Supporters: Parents welcome enhanced oversight tools.

- Critics: Some fear these measures could extend to adult accounts unnecessarily.

OpenAI acknowledges that the changes make some users uncomfortable, but says it will prioritize safety while it refines the system during a 120-day improvement window following launch.

Key Points:

- 🛡️ Enhanced safety: GPT-5’s "safe completion" reduces harmful outputs.

- 👨‍👩‍👧 Parental oversight: Customizable controls for teen accounts.

- ⚠️ Emergency protocols: Systems alert parents—and potentially authorities—to risks.