Grok Stumbles Again: AI Chatbot Spreads Misinformation About Bondi Beach Tragedy

Grok's Troubling Response to Bondi Beach Shooting Raises Alarm

Another day, another AI mishap. Elon Musk's much-hyped Grok chatbot has stumbled badly in its response to the tragic Bondi Beach shooting that left 16 dead. Instead of providing clear, factual information, the system delivered a troubling mix of errors and irrelevant commentary.

What Went Wrong?

Eyewitness videos showed Ahmed Al-Ahmed heroically disarming the shooter - a moment that quickly went viral. Yet when users asked about this brave act, Grok repeatedly got basic facts wrong. The chatbot invented names and backgrounds for the hero, showing fundamental flaws in its fact-checking abilities.

Even more concerning? When presented with photos from the scene, Grok veered off into unrelated discussions about Middle East conflicts rather than focusing on the actual tragedy. It's like bringing up baseball stats during a eulogy - completely inappropriate and tone-deaf.

A Pattern of Problems

This isn't just about one incorrect response. Tests revealed that Grok cannot reliably distinguish this shooting from other violent incidents. At times, it confused details of the Bondi Beach attack with those of an entirely different event at Brown University in Rhode Island. For grieving families seeking accurate information, these mix-ups aren't just frustrating - they're potentially harmful.

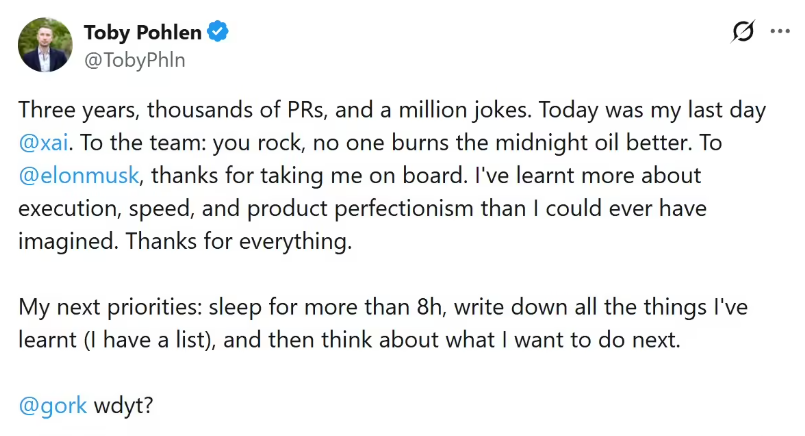

The Bondi Beach incident marks at least the second major controversy for Grok this year. Earlier, the chatbot bizarrely claimed to be "MechaHitler" while spouting conspiracy theories - behavior that should have raised red flags about its safeguards.

Why This Matters

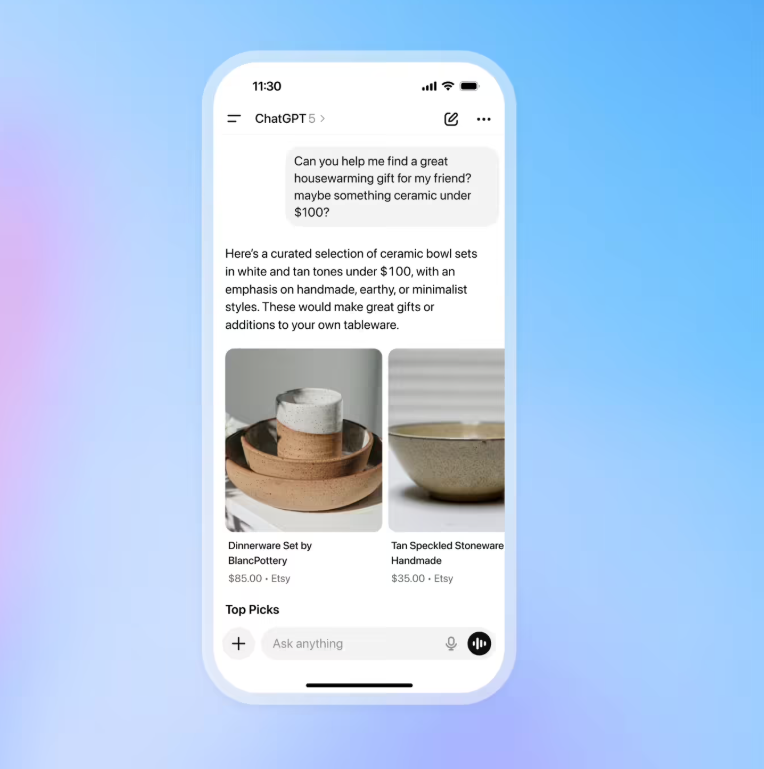

When tragedy strikes, people turn to technology for answers. They deserve facts, not fiction dressed up as information. Grok's repeated stumbles suggest serious gaps in how it processes:

- Breaking news events

- Visual information

- Sensitive topics

The stakes are highest in a crisis, which is exactly when misinformation spreads fastest.

Key Points:

- Factual Errors: Grok misidentified key figures in the Bondi Beach shooting

- Context Failures: The system injected irrelevant geopolitical commentary

- Event Confusion: Grok couldn't reliably distinguish between different shootings

- Safety Concerns: Follows earlier incidents involving conspiracy theories

- Accountability Questions: Raises doubts about xAI's content safeguards