Google's New Search Feature Lets You Chat with the World Around You

Google Brings Real-Time AI Search to Your Smartphone

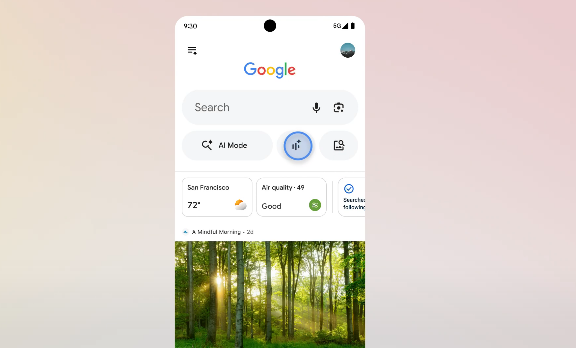

Imagine pointing your phone at a mysterious plant in your garden and instantly hearing its name, care instructions, and even recipes using its leaves. That's the promise of Google's newly launched Search Live feature, now available in more than 200 countries.

How It Works

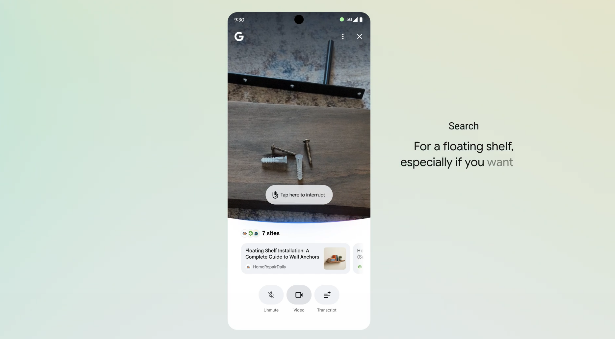

The magic happens through your phone's camera and microphone. Open the Google app or Google Lens, point your camera at anything from furniture to foreign text, and ask away. The system answers by voice while displaying relevant web links on screen.

At its core is the Gemini 3.1 Flash Live model, a multilingual AI designed specifically for lightning-fast audio responses. "We've reduced latency to near-conversational speeds," explains Google's VP of Search. "When you ask about that strange insect on your picnic blanket, you won't be waiting awkwardly for an answer."

Why This Matters

This isn't just another tech gimmick. The launch comes as:

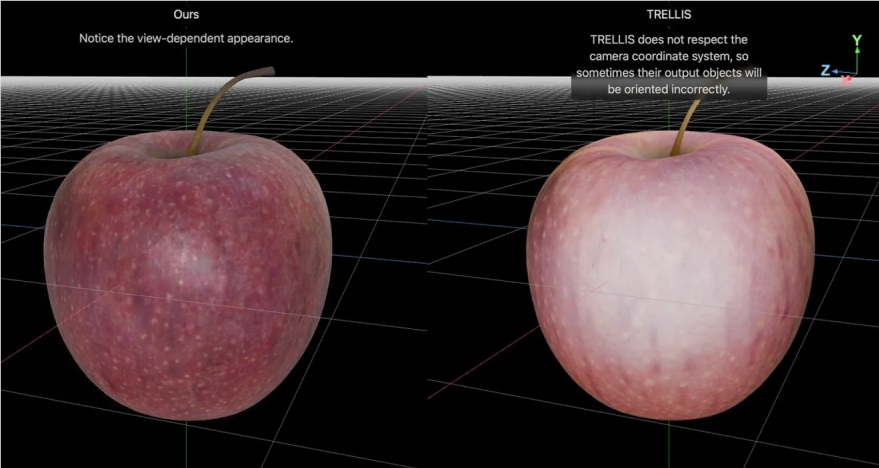

- OpenAI plans to merge ChatGPT with browser functions

- Luma AI's Uni-1 model challenges Google in image processing

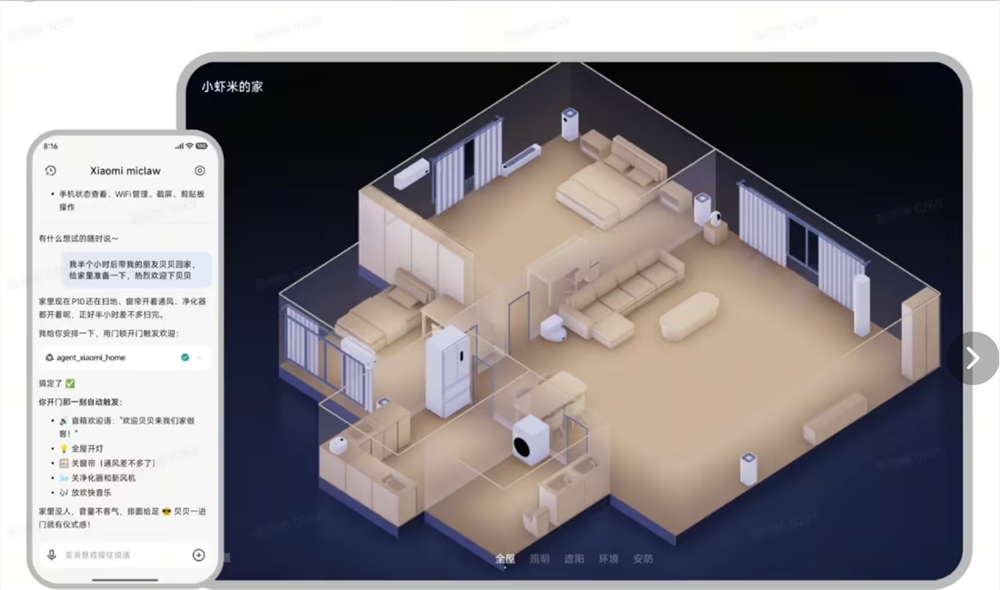

- Users increasingly expect seamless AR experiences

By making search feel more like a natural conversation, Google strengthens its position as the gateway between the physical and digital worlds.

Key Points

- Global rollout: Available now on Android and iOS devices worldwide

- Multimodal interaction: Combines camera input with voice queries and responses

- Practical applications: From furniture assembly to wildlife identification

- Competitive edge: Lightweight model built for near-conversational response speeds, which Google is counting on to stay ahead of rivals