Google's FACTS Benchmark Reveals AI Models Struggle with Accuracy

Google's New Benchmark Exposes AI Accuracy Limits

In a move that could reshape how we measure AI capabilities, Google's FACTS team has partnered with data science platform Kaggle to launch a comprehensive benchmark suite. This new tool aims to address a critical gap in AI evaluation: standardized testing for factual accuracy.

What FACTS Measures

The FACTS benchmark breaks down "factuality" into two practical scenarios:

- Contextual factuality: How accurately models answer when they must rely on data supplied in the prompt

- World knowledge factuality: How accurately models recall information from their own memory or retrieve it through web search

The results so far? Even the most advanced models, including Gemini 3 Pro, GPT-5, and Claude Opus 4.5, score below 70% overall accuracy.

Beyond Simple Q&A

Unlike traditional benchmarks, FACTS simulates real-world challenges developers face through four distinct tests:

- Parameter benchmark (internal knowledge)

- Search benchmark (tool usage)

- Multimodal benchmark (visual understanding)

- Context benchmark (answers grounded in provided data)

Google has made 3,513 test examples publicly available. A further portion of the data stays private on Kaggle to prevent artificial score inflation, for example from models that have seen the test questions during training.

Surprising Performance Gaps

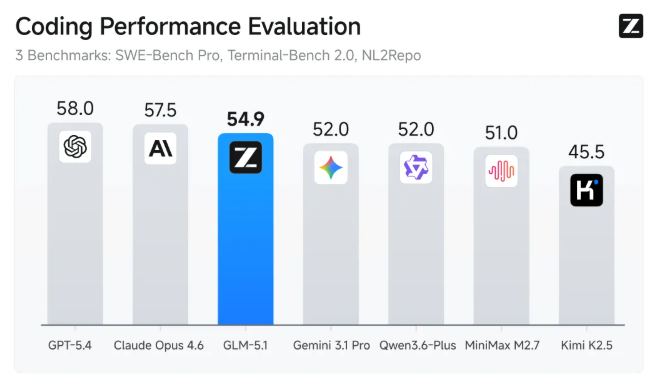

The preliminary rankings reveal interesting patterns:

- Gemini 3 Pro leads with 68.8% overall accuracy

- Followed by Gemini 2.5 Pro (62.1%) and GPT-5 (61.8%)

The standout? Gemini 3 Pro scored 83.8% on search tasks, but only 76.4% when relying on internal parameters alone, a gap of 7.4 percentage points.

The takeaway? Companies building knowledge retrieval systems should consider combining models with search tools or vector databases for better results.
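To make the "model plus retrieval" idea concrete, here is a minimal sketch in Python. It is not taken from the FACTS benchmark or from any vendor's API. The documents, the function names (`retrieve`, `build_grounded_prompt`), and the simple word-overlap scoring are all illustrative assumptions. A production system would typically swap the scoring for an embedding model and a vector database, and would send the resulting prompt to an actual model.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (toy retriever)."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Build a prompt that asks the model to answer from retrieved context only."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical knowledge base, invented for this example.
DOCS = [
    "The warranty period for the X100 router is 24 months.",
    "The X100 router supports Wi-Fi 6 and has four Ethernet ports.",
    "Our office is closed on public holidays.",
]

prompt = build_grounded_prompt("How long is the X100 warranty?", DOCS, k=1)
print(prompt)
```

The design point is that the model is asked to answer from retrieved text rather than from memory alone, which mirrors the search-versus-parameters gap reported above.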

The most concerning finding involves multimodal tasks, where even the best performer managed only 46.9% accuracy. "These numbers suggest we're still years away from reliable unsupervised data extraction," says one industry analyst who reviewed the findings. Companies building products on these models should keep human review in the loop wherever data is extracted from images.

Key Points:

- 🔍 Accuracy ceiling: No model surpassed 70% overall accuracy

- 🏆 Top performer: Gemini 3 Pro leads but shows significant variation across test types

- ⚠️ Multimodal warning: The best multimodal score was only 46.9%, so visual understanding remains unreliable