DeepSeek-V4 Arrives: A Game-Changer in AI with Million-Word Memory

DeepSeek-V4 Breaks New Ground in AI Capabilities

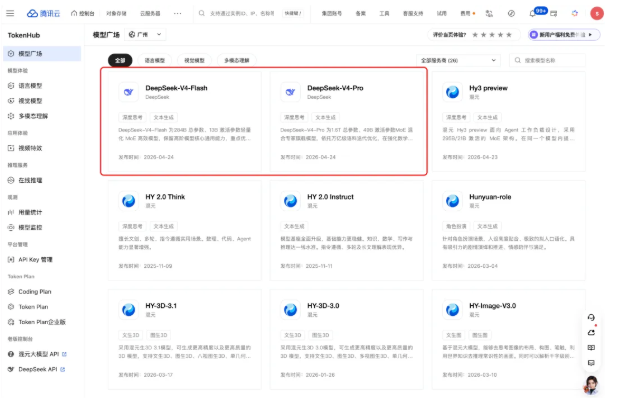

In a move that could democratize advanced AI, DeepSeek has launched the preview version of its V4 series models. The standout feature? A revolutionary ability to handle contexts up to one million words - roughly equivalent to seven full-length novels - while maintaining industry-leading performance.

Two Models, One Mission

The V4 lineup addresses different needs through two distinct versions:

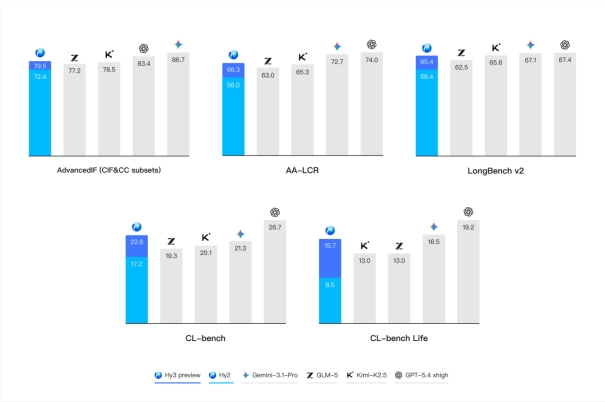

- DeepSeek-V4-Pro: This heavyweight (1.6T parameters) delivers performance rivaling top closed-source models. It particularly shines in technical domains, outperforming all open-source competitors in math, STEM, and coding evaluations.

- DeepSeek-V4-Flash: Don't let the smaller size (284B parameters) fool you. This efficiency-focused model matches its big brother on simpler tasks while offering faster, more economical API services.

The Secret Sauce: DSA Technology

The magic behind this leap forward lies in DeepSeek's own DeepSeek Sparse Attention (DSA) mechanism. By compressing at the token level, so that each query attends to a reduced set of tokens rather than the full context, the system dramatically cuts computational costs - solving what's been a major roadblock for widespread long-context adoption.

"This isn't just an incremental improvement," explains one industry analyst. "Making million-word context processing affordable could open doors we haven't even imagined yet."
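To make the cost argument concrete, here is a minimal sketch of generic top-k sparse attention for a single query, plus a count of how many query-key pairs dense and sparse attention would score. This is not DeepSeek's DSA implementation, whose details the article does not give. The function names and the budget of 2,048 selected tokens are assumptions chosen for illustration. The toy also scores every key before selecting, whereas a practical system would need a cheaper selection pass to realize the savings.

```python
import math

def topk_sparse_attention(query, keys, values, k):
    """Toy illustration: attend only to the k keys with the highest dot-product score."""
    scores = [sum(q * x for q, x in zip(query, key)) for key in keys]
    # Indices of the k best-scoring keys; every other token is skipped.
    top = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the selected scores only (shifted for numerical stability).
    peak = max(scores[i] for i in top)
    weights = [math.exp(scores[i] - peak) for i in top]
    total = sum(weights)
    dim = len(values[0])
    output = [
        sum(w * values[i][d] for w, i in zip(weights, top)) / total
        for d in range(dim)
    ]
    return output, sorted(top)

def attention_pairs(n_tokens, k=None):
    """Query-key pairs scored: n * n when dense, n * min(k, n) with a top-k budget."""
    per_query = n_tokens if k is None else min(k, n_tokens)
    return n_tokens * per_query

# Hypothetical budget of 2,048 selected tokens per query (an assumption).
dense = attention_pairs(1_000_000)
sparse = attention_pairs(1_000_000, k=2048)
print(f"dense: {dense:,} pairs, sparse: {sparse:,} pairs")
```

Under these assumed numbers, the dense case scores a trillion pairs for a million-token context while the sparse case scores about two billion, which is the kind of gap that makes very long contexts affordable.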

Built for Real-World Use

Recognizing how professionals actually work with AI, DeepSeek has fine-tuned V4 for seamless Agent integration. Users can toggle between:

- Non-thinking mode for quick responses

- Thinking mode (with adjustable intensity) for complex problem-solving

The API even includes a reasoning_effort parameter - letting developers balance speed against depth of analysis depending on task requirements.
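As a rough sketch of how a developer might use this, the snippet below builds a chat-style JSON request body with an optional reasoning_effort field. Only the parameter name comes from the announcement. The model identifiers, the accepted effort levels, and the overall body shape are assumptions for illustration, and no network call is made.

```python
import json

# Assumed values: the article names the parameter but not its accepted settings.
ASSUMED_EFFORT_LEVELS = ("low", "medium", "high")

def build_chat_request(prompt, model, reasoning_effort=None):
    """Build a chat-completions style request body (hypothetical shape, nothing is sent)."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if reasoning_effort is not None:
        if reasoning_effort not in ASSUMED_EFFORT_LEVELS:
            raise ValueError(f"unknown reasoning_effort: {reasoning_effort!r}")
        body["reasoning_effort"] = reasoning_effort
    return body

# Hypothetical model names; check the official API documentation for the real ones.
quick = build_chat_request("Summarize this changelog.", model="deepseek-v4-flash")
deep = build_chat_request(
    "Find the race condition in this scheduler.",
    model="deepseek-v4-pro",
    reasoning_effort="high",
)
print(json.dumps(deep, indent=2))
```

Leaving the field out for quick requests and setting it only for harder ones mirrors the speed-versus-depth trade-off described above.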

Open Access Philosophy

In keeping with its commitment to transparency, DeepSeek has made both models available through:

- Official website and app interfaces

- Open-source platforms like Hugging Face and ModelScope

The company has also published detailed technical documentation for developers wanting to dig deeper into how it all works.

The sunsetting of older model names (deepseek-chat and deepseek-reasoner) signals a clean break as the company focuses on this new generation of technology.

Key Points:

- Million-word memory becomes practical through DSA innovation

- Pro version sets new benchmarks for open-source performance

- Flash version delivers remarkable efficiency without major sacrifices

- Agent optimization includes adjustable thinking modes

- Full open-source release promotes transparency and community development