China's Qwen3.6 AI Model Goes Open-Source, Packing Big Power in Small Package

A New Contender in Open-Source AI

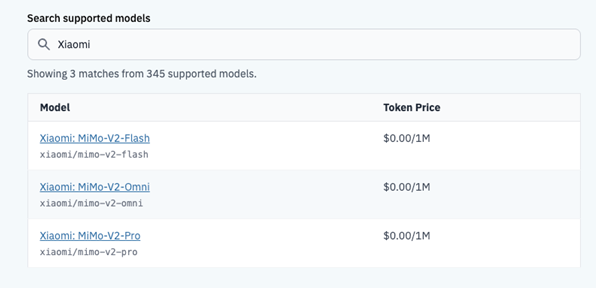

China's artificial intelligence landscape just got more interesting with the open-source release of Qwen3.6-35B-A3B on April 19th. This medium-sized model punches well above its weight class, combining impressive capabilities with remarkable efficiency.

Small Size, Big Brain

What makes this release special? The model activates only about 3 billion of its 35 billion parameters for each token it processes, thanks to a Mixture of Experts (MoE) architecture. Imagine having a team of specialists where you only pay for the ones you need - that's essentially how this architecture works. For developers, this translates to getting premium performance without the premium computing bill.
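
For readers who want to see the "team of specialists" idea in code, here is a toy sketch of top-k expert routing, the general technique behind MoE layers. It is not Qwen's actual implementation: the eight scalar "experts", the random router scores, and the function names `top_k_route` and `moe_forward` are all made up for illustration, and the choice of k=2 is an assumption rather than a published setting.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_scores[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    routes = top_k_route(router_scores, k)
    output = sum(w * experts[i](x) for i, w in routes)
    used = [i for i, _ in routes]
    return output, used


# Toy setup (hypothetical): 8 experts, each just scales its input.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
rng = random.Random(0)
router_scores = [rng.gauss(0, 1) for _ in experts]

output, used = moe_forward(3.0, experts, router_scores, k=2)
print(f"experts run for this input: {used} (2 of {len(experts)})")

# The article's headline figures: roughly 3B active out of 35B total.
print(f"share of parameters active per token: {3 / 35:.1%}")
```

The unselected experts are never called, which is where the compute savings come from. Note that in a real deployment all of the experts still have to be stored, so the savings apply to computation per token rather than to the total size of the model.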

"The efficiency gains here are substantial," explains Dr. Li Wei, an AI researcher at Tsinghua University. "You're getting results comparable to much larger models while using far fewer resources."

Benchmark Buster

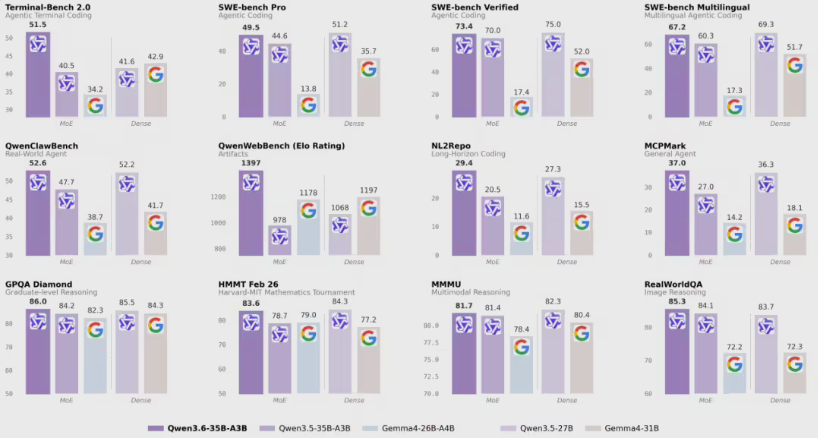

The benchmark results are strong. In Terminal-Bench 2.0 (which measures how well an AI agent completes programming tasks in a terminal) and real-world agent tests, Qwen3.6-35B-A3B doesn't just beat its predecessors - it challenges models twice its size. Its secret weapon? A "multimodal thinking" mode that processes visual information with human-like spatial understanding.

Want proof? Check its scores on RefCOCO, a tough benchmark that asks a model to locate the object a phrase describes within an image, where it demonstrates scary-good comprehension of physical objects and their relationships.

Built for Real Work

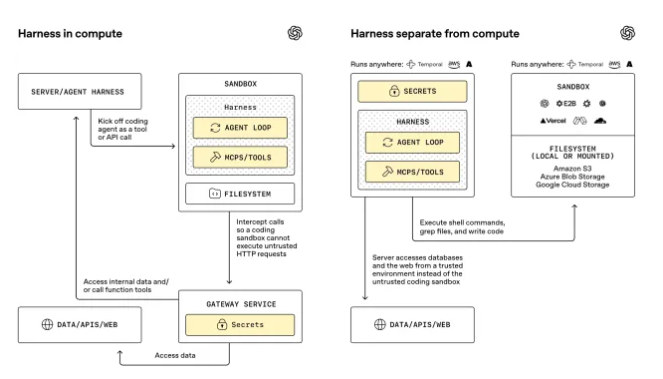

This isn't just academic bragging rights. The developers have ensured deep compatibility with popular agent tools like OpenClaw and Claude Code. Translation: businesses can plug this into their existing systems relatively painlessly.

"We're seeing strong interest from manufacturing and logistics companies," notes Chen Yuan from Alibaba's DAMO Academy (the team behind Qwen). "The combination of programming smarts and visual understanding makes it ideal for automating complex workflows."

Get Your Hands Dirty

The best part? You don't need special access or deep pockets to try it out. The model is available now through:

- ModelScope community

- Hugging Face

- Qwen Studio

For developers tired of choosing between performance and practicality, this might be the Goldilocks solution they've been waiting for.

Key Points:

- Efficient MoE architecture activates only 3B of 35B parameters per token

- Outperforms larger models in programming and real-world agent tests

- Multimodal capabilities handle both text and visual information

- Ready for business with framework compatibility

- Available now through major open-source platforms