ByteDance's Seeduplex Lets AI Listen and Talk Like Humans

ByteDance Breaks New Ground with Human-Like Voice AI

Imagine chatting with someone who listens in silence and replies only after you've completely stopped talking. That's how most voice assistants operate today. ByteDance's Seed team aims to change this with Seeduplex, launched on April 9, which brings a more natural conversational flow to AI voice interactions.

The End of Robotic Turn-Taking

Seeduplex represents a fundamental shift from the "walkie-talkie" style of current voice assistants. Traditional systems are half-duplex: they either listen or speak, never both. That is what produces the awkward pauses we've all experienced. Seeduplex, as its name suggests, is full-duplex, able to keep listening while it talks.
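The half-duplex limitation can be made concrete with a toy simulation. This is not Seeduplex's design, which the article does not detail; it is a hypothetical per-frame loop in which `user_frames` marks whether the user is talking in each audio frame and `reply_len` is how many frames the agent's reply lasts. In full-duplex mode the loop keeps processing input while the agent speaks, so an interruption cuts the reply short; in half-duplex mode that speech is simply lost.

```python
def run_turns(user_frames: list[bool], reply_len: int, full_duplex: bool) -> list[str]:
    """Return the agent's action ("listen", "speak" or "idle") for each frame.

    Hypothetical toy model: each frame is True if the user is talking.
    """
    actions = []
    remaining = 0          # frames of the current reply still to be spoken
    heard_speech = False   # has the user said anything since the agent's last turn?
    for user_talking in user_frames:
        if remaining > 0:
            if full_duplex and user_talking:
                # Full duplex: input is still processed while speaking,
                # so a user interruption cancels the rest of the reply.
                remaining = 0
                heard_speech = True
                actions.append("listen")
            else:
                # Half duplex: the microphone is effectively muted while speaking.
                remaining -= 1
                actions.append("speak")
        elif user_talking:
            heard_speech = True
            actions.append("listen")
        elif heard_speech:
            # Deliberately naive end-of-turn rule: the first silent frame
            # after user speech hands the turn to the agent.
            heard_speech = False
            remaining = reply_len - 1
            actions.append("speak")
        else:
            actions.append("idle")
    return actions


frames = [True, True, False, False, True, True, False]
print(run_turns(frames, reply_len=4, full_duplex=False))
print(run_turns(frames, reply_len=4, full_duplex=True))
```

In the half-duplex run the user's second utterance (frames 4 and 5) is never heard, so the agent ends up idle; the full-duplex run stops talking, listens, and then responds to the new input.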

"We wanted to recreate the natural rhythm of human conversation," explains a ByteDance engineer familiar with the project. "When people talk, we process speech continuously, catching nuances even while formulating responses."

The model achieves this through a novel synchronous processing framework that:

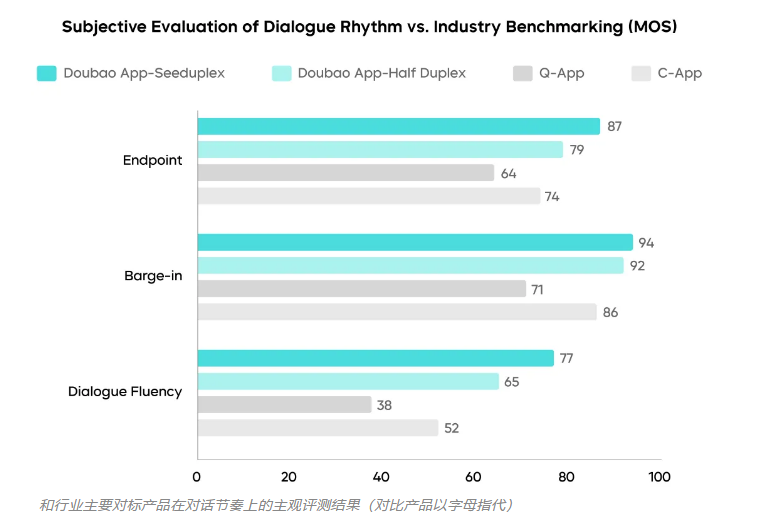

- Reduces misresponses by 50% compared to current solutions

- Handles overlapping speech in group settings

- Filters out background noise like traffic or TV audio

Smarter Than Your Average Assistant

What makes Seeduplex stand out isn't just its technical specs, but how it understands conversational context:

Dynamic stopping technology cuts response delays by 250 milliseconds, roughly the duration of an eye blink, while reducing accidental interruptions by 40%. The AI can now tell a thoughtful pause apart from the moment you've actually finished speaking.
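The article does not explain how Seeduplex makes this call. As a rough, hypothetical illustration of adaptive end-of-turn detection in general, the sketch below replaces a single fixed silence timeout with one that depends on whether the partial transcript sounds finished. The word lists, the 300/800/1500 ms thresholds, and the function names are all assumptions made for illustration; a production system could just as well use a learned model over audio and text.

```python
# Hypothetical cue lists; the article does not describe Seeduplex's actual signals.
HESITATIONS = {"um", "uh", "er", "hmm"}
CONTINUATIONS = {"and", "but", "so", "because", "or", "the", "a", "to"}

SHORT_MS, DEFAULT_MS, LONG_MS = 300, 800, 1500  # illustrative thresholds only


def silence_timeout_ms(partial_transcript: str) -> int:
    """Pick how long to wait in silence before treating the turn as finished."""
    text = partial_transcript.strip()
    words = text.lower().rstrip(".,?!").split()
    if not words:
        return LONG_MS  # nothing said yet: be patient
    if words[-1] in HESITATIONS or words[-1] in CONTINUATIONS or text.endswith(","):
        return LONG_MS  # sounds unfinished, so this is probably a thinking pause
    if text.endswith((".", "?", "!")):
        return SHORT_MS  # sounds complete, so reply quickly
    return DEFAULT_MS  # ambiguous: fall back to a middle value


def should_respond(partial_transcript: str, silence_ms: int) -> bool:
    """End the user's turn once the observed silence reaches the adaptive timeout."""
    return silence_ms >= silence_timeout_ms(partial_transcript)


print(should_respond("What's the weather today?", 400))
print(should_respond("Book a table for two and", 400))
```

After the same 400 ms of silence, the first utterance is treated as finished and the second as a pause. A fixed-timeout system would have to choose one value for both cases, trading slow replies against cutting people off.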

During testing, the team noticed something interesting: users unconsciously adapted their speech patterns as interactions felt more natural. "People started interrupting the AI less because they felt heard," the engineer noted.

Behind the scenes, optimizations like speculative sampling ensure the system remains responsive even during peak usage on Douyin, where it's already deployed to millions of users.
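The article only names speculative sampling; it does not say how Seeduplex applies it. For readers unfamiliar with the technique, here is a minimal sketch of the generic algorithm as used for language-model decoding: a cheap draft model proposes several tokens, a larger target model verifies them, and an accept/reject rule keeps the output faithful to the target model. The toy token distributions, and the names `speculative_step`, `draft` and `target`, are hypothetical stand-ins for real models.

```python
import random

Dist = dict[str, float]  # token -> probability


def sample(dist: Dist, rng: random.Random) -> str:
    tokens = list(dist)
    return rng.choices(tokens, weights=[dist[t] for t in tokens], k=1)[0]


def speculative_step(prefix, draft, target, k, rng):
    """One round of speculative sampling; returns between 1 and k + 1 new tokens.

    `draft` and `target` map a token list (the context) to a Dist.
    """
    # 1. The cheap draft model proposes k tokens autoregressively.
    ctx, proposed, draft_dists = list(prefix), [], []
    for _ in range(k):
        q = draft(ctx)
        tok = sample(q, rng)
        proposed.append(tok)
        draft_dists.append(q)
        ctx.append(tok)

    # 2. The target model verifies them. Real systems score all k positions in
    #    a single batched forward pass, which is where the speed-up comes from.
    ctx, out = list(prefix), []
    for tok, q in zip(proposed, draft_dists):
        p = target(ctx)
        if rng.random() < min(1.0, p.get(tok, 0.0) / q[tok]):
            out.append(tok)  # accept the draft token
            ctx.append(tok)
            continue
        # Rejected: resample from the residual max(0, p - q), renormalised,
        # and discard the remaining draft tokens.
        residual = {t: max(0.0, pt - q.get(t, 0.0)) for t, pt in p.items()}
        total = sum(residual.values())
        out.append(sample({t: w / total for t, w in residual.items()}, rng))
        return out

    # 3. Every draft token was accepted: take one bonus token from the target.
    out.append(sample(target(ctx), rng))
    return out


p = {"a": 0.6, "b": 0.3, "c": 0.1}  # toy target model
q = {"a": 0.3, "b": 0.3, "c": 0.4}  # toy draft model
print(speculative_step([], lambda ctx: q, lambda ctx: p, 3, random.Random(0)))
```

Thanks to the accept/reject rule, the emitted tokens follow the target model's distribution exactly; the quality of the draft model only changes how many tokens each round yields, and therefore how much latency is saved.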

The Future of AI Companions

Seeduplex isn't just about better voice tech; it's a stepping stone toward truly intelligent assistants. ByteDance hints at combining it with visual recognition soon, creating AI that doesn't just hear but also sees, and understands context the way humans do.

Could this be the beginning of the multimodal assistants we've seen in sci-fi? The team's ambitions suggest so: "We're moving toward systems that listen, watch, think and respond appropriately - that's when AI stops feeling like a tool and starts feeling like a partner."

Key Points:

- Seeduplex listens and speaks at the same time, as humans do, eliminating awkward pauses

- Already live on Douyin, handling millions of conversations daily

- 50% fewer errors than current voice assistants

- 250ms faster response times with better pause detection

- Paves the way for AI that combines voice, vision and contextual understanding

Project page: https://seed.bytedance.com/seeduplex