Beijing Unveils Game-Changing XR-1 Robot Brain That Learns Like Humans

China's Robotics Leap: XR-1 Blurs the Line Between Code and Motion

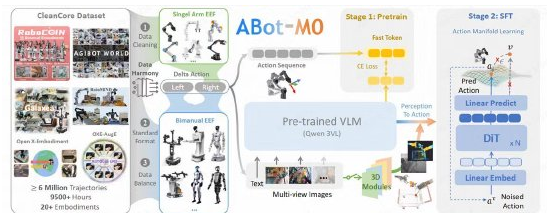

In a quiet laboratory northwest of Beijing, engineers have taught robots a new language - not of words, but of movement. The recently unveiled XR-1 system represents China's most advanced attempt yet to create machines that don't just compute, but physically interact with the world as humans do.

The Robot Cerebellum Comes Alive

Imagine handing a coffee cup to a colleague - your brain effortlessly coordinates vision, grip strength, and arm trajectory. XR-1 replicates this biological magic through what developers call "embodied intelligence." Unlike conventional AI that exists purely in digital space, this system connects cognitive processing directly to mechanical action.

The secret sauce? Two massive training libraries:

- RoboMIND2.0: Over one million data points teaching object manipulation under real-world variables like changing lighting or cluttered environments

- ArtVIP: A treasure trove of high-fidelity digital objects that help robots recognize everything from kitchen utensils to industrial tools

"We're not just building better robots," explains lead engineer Dr. Wei Lin. "We're creating universal translators that let any robotic body understand instructions from any AI brain."

From Lab Bench to Factory Floor

What sets XR-1 apart is its chameleon-like adaptability. During demonstrations:

- A research prototype delicately arranged fragile lab glassware using Franka arms

- An industrial model simultaneously operated three UR robotic arms on an assembly line

The system achieved both feats without hardware-specific reprogramming - a first for Chinese robotics.

The innovation center has strategically positioned XR-1 as the "cerebellum" in their three-part ecosystem:

- Body: Their "Embodied Tiangong" physical platforms provide the mechanical muscles

- Brain: Previously released WoW and Pelican-VL models handle complex reasoning

- Cerebellum: XR-1 translates thoughts into precise motions
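The three-layer split above can be pictured with a toy sketch. Nothing here is XR-1's actual code or API: `EndEffectorTarget`, `brain`, `cerebellum`, `run`, and both arm classes are hypothetical names invented for illustration, and the "models" are simple lookups. It only shows how one hardware-independent motion command might be handed to different robot bodies without changing the layers above them.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class EndEffectorTarget:
    """Hypothetical hardware-independent command: gripper position and closure."""
    x: float
    y: float
    z: float
    grip: float  # 0.0 = open, 1.0 = closed


class Body(Protocol):
    """Hypothetical adapter interface that each robot platform would implement."""
    name: str

    def execute(self, target: EndEffectorTarget) -> str: ...


@dataclass
class SevenJointArm:
    name: str = "seven-joint arm"

    def execute(self, target: EndEffectorTarget) -> str:
        # A real adapter would solve inverse kinematics for this arm here.
        return (f"{self.name}: move to ({target.x:.2f}, {target.y:.2f}, "
                f"{target.z:.2f}), grip={target.grip:.1f}")


@dataclass
class SixJointArm:
    name: str = "six-joint arm"

    def execute(self, target: EndEffectorTarget) -> str:
        return (f"{self.name}: move to ({target.x:.2f}, {target.y:.2f}, "
                f"{target.z:.2f}), grip={target.grip:.1f}")


def brain(instruction: str) -> list[str]:
    """Stand-in for the reasoning layer: split an instruction into subgoals."""
    return [step.strip() for step in instruction.split(" then ")]


def cerebellum(subgoal: str) -> EndEffectorTarget:
    """Stand-in for the motion layer: map a subgoal to a fixed target."""
    targets = {
        "reach": EndEffectorTarget(0.4, 0.0, 0.3, 0.0),
        "grasp": EndEffectorTarget(0.4, 0.0, 0.1, 1.0),
    }
    return targets[subgoal]


def run(instruction: str, body: Body) -> list[str]:
    """Brain -> cerebellum -> body, with no body-specific logic above the adapter."""
    return [body.execute(cerebellum(subgoal)) for subgoal in brain(instruction)]
```

Calling `run("reach then grasp", SevenJointArm())` and `run("reach then grasp", SixJointArm())` sends the same two targets to both bodies; only the adapter at the bottom differs, which is the property the article describes as working without hardware-specific reprogramming.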

Opening Pandora's Toolbox

By open-sourcing this technology, Beijing aims to accelerate global robotics development while establishing China's leadership in embodied AI standards. Early adopters range from automotive manufacturers redesigning production lines to healthcare startups developing assistive devices.

The implications extend beyond industry. During testing phases, XR-1-powered robots:

- Learned new manual tasks 60% faster than previous systems

- Maintained precision even when objects were moved or lighting changed unexpectedly

- Demonstrated unprecedented dual-arm coordination for tasks like opening containers

As robotics researcher Elena Petrov notes: "This isn't incremental improvement - it's redefining how machines learn physical skills."

Key Points:

- 🧠 XR-1 serves as robotic "cerebellum," converting AI decisions into precise movements

- 🔄 Breakthrough cross-platform compatibility works across major robot brands

- 📚 Comes with RoboMIND2.0 (1M+ data points) and ArtVIP digital asset library

- The open-source release invites global collaboration on embodied intelligence standards