Alibaba Unveils Lightweight Qwen3-VL Models with Near-Flagship Performance

Alibaba's New Lightweight AI Models Challenge Larger Predecessors

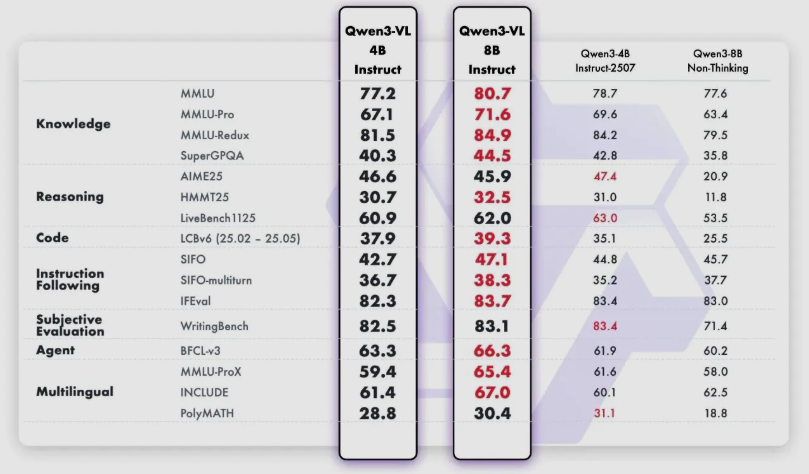

Alibaba Group's Qwen team has introduced two compact yet powerful additions to its Qwen3-VL visual language model series - the 4B and 8B parameter versions. These new models demonstrate that smaller doesn't necessarily mean weaker, with performance metrics that rival much larger AI systems.

Breaking Down the New Offerings

The newly released models come in:

- 4 billion parameter versions (Instruct and Thinking variants)

- 8 billion parameter versions (Instruct and Thinking variants)

This strategic release provides developers with flexible deployment options while maintaining the full capabilities of the original Qwen3-VL series. The 'Instruct' variants specialize in following complex instructions, while the 'Thinking' versions excel at chain-of-thought reasoning tasks.

Technical Advancements

The development team highlights three key advances:

- Reduced hardware requirements: Memory usage drops significantly, enabling deployment on consumer-grade devices

- Capability retention: All core functions including multimodal understanding and complex reasoning remain intact

- Performance optimization: Benchmark results show a competitive edge over similarly sized competitors
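
To put the reduced hardware requirements in rough numbers, the sketch below estimates the memory needed just to hold model weights at different numeric precisions. It is a back-of-the-envelope calculation, not a figure from Alibaba: the parameter counts are the nominal round numbers (4B, 8B, 72B), the list of precisions is an assumption, and the estimate ignores activations, the KV cache, and framework overhead, so real usage will be higher.

```python
# Weights-only memory estimate (illustrative assumptions, not vendor figures).
# Ignores activations, KV cache, and runtime overhead.

# Bytes used to store one parameter at each precision (assumed options).
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}

# Nominal parameter counts; the real models will differ slightly.
MODELS = {"4B": 4e9, "8B": 8e9, "72B": 72e9}


def weight_memory_gib(num_params: float, dtype: str) -> float:
    """Return the memory needed to store the weights, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


def memory_table() -> dict:
    """Build a {model: {dtype: GiB}} table, rounded to one decimal place."""
    return {
        name: {
            dtype: round(weight_memory_gib(count, dtype), 1)
            for dtype in BYTES_PER_PARAM
        }
        for name, count in MODELS.items()
    }


if __name__ == "__main__":
    for name, row in memory_table().items():
        print(name, row)
```

Under these assumptions, the 8B model's weights take roughly 15 GiB in bf16, compared with about 134 GiB for a 72B model, which is the gap that makes consumer-grade deployment plausible for the smaller variants.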

Performance That Surprises

In rigorous testing, these lightweight models have:

- Outperformed comparable offerings from Google (Gemini 2.5 Flash Lite) and OpenAI (GPT-5 Nano)

- Demonstrated particular strength in STEM Q&A, visual question answering (VQA), and OCR tasks

- Approached, in some scenarios, the performance of Alibaba's own 72B parameter flagship model released six months prior

The implications are significant for enterprises needing local deployment or managing inference costs.

The Miniaturization Trend Continues

This release represents another milestone in the industry-wide push toward:

- More efficient model architectures

- Lower computational costs without sacrificing capability

- Expanded applications in mobile and IoT environments

The technical paper suggests sophisticated compression techniques enabled this balance between size and performance.

The models are now available on Hugging Face: Qwen3-VL Collection

Key Points:

- Alibaba releases compact 4B/8B versions of its Qwen3-VL visual language model

- Maintains strong performance despite significantly reduced size

- Outperforms similar-sized competitors from major tech firms

- Enables broader deployment on resource-limited devices

- Represents ongoing industry trend toward efficient AI architectures