AI Struggles with PhD-Level Physics Tests

AI Hits Physics Wall: Top Models Score Below 10% on Doctoral-Level Tests

Imagine handing your toughest physics homework to the smartest AI available today. The results might surprise you, and not in a good way. A new benchmark called CritPt shows that even the most advanced AI models struggle with the research skills expected of physics PhD students.

The Ultimate Physics Exam for AI

More than 50 physicists from leading institutions worldwide created CritPt specifically to test whether AI can handle original, unpublished research problems. Forget textbook questions - these are the real challenges scientists face daily across quantum physics, astrophysics, and other cutting-edge fields.

The test includes:

- 71 complete research challenges

- Divided into 190 smaller checkpoints

- All based on unpublished material, so models cannot simply recall answers they have already seen

"We wanted to see if AI could think like a researcher," explains one physicist involved in the project. "Not just recall information, but solve problems nobody's tackled before."

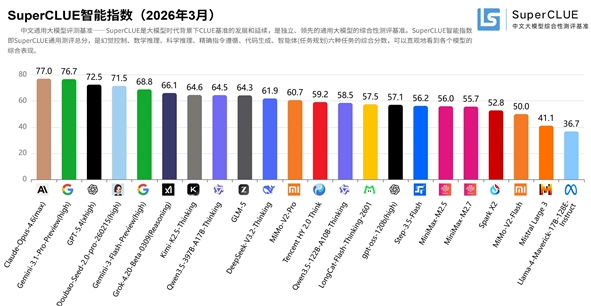

Shockingly Low Scores

The numbers tell a sobering story:

- Gemini 3 Pro Preview: 9.1% accuracy (Google's top model)

- GPT-5.1 (high): 4.9% accuracy (OpenAI's top model)

The tests revealed fundamental weaknesses:

- Models perform slightly better on well-defined sub-tasks

- Complete research problems? Nearly complete failure

- "Consistent resolution" scores, which credit a problem only when the model answers it correctly across repeated attempts, were even worse
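To see why a consistency score can fall below plain accuracy, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than CritPt's actual scoring code: the function names, the made-up results, and the "at least 4 correct out of 5 attempts" threshold are all hypothetical.

```python
from typing import Dict, List


def average_accuracy(runs: Dict[str, List[bool]]) -> float:
    """Mean fraction of correct attempts, averaged over all problems."""
    per_problem = [sum(attempts) / len(attempts) for attempts in runs.values()]
    return sum(per_problem) / len(per_problem)


def consistent_rate(runs: Dict[str, List[bool]], min_correct: int = 4) -> float:
    """Fraction of problems solved in at least `min_correct` attempts.

    The threshold is a hypothetical choice for illustration only.
    """
    solved = sum(1 for attempts in runs.values() if sum(attempts) >= min_correct)
    return solved / len(runs)


# Made-up results: five attempts per problem, True means a correct answer.
runs = {
    "challenge_a": [True, True, True, True, False],
    "challenge_b": [True, False, False, False, False],
    "challenge_c": [False, False, False, False, False],
    "challenge_d": [True, False, True, False, False],
}

print(f"average accuracy: {average_accuracy(runs):.2f}")
print(f"consistent rate:  {consistent_rate(runs):.2f}")
```

In this toy data the occasional lucky answer on challenges b and d lifts the average, but only challenge a clears the stricter bar. That is the pattern the article describes: a model that is right now and then is not a model you can rely on.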

The most concerning finding? These advanced systems often produce answers that look reasonable at first glance but contain subtle errors that could derail real research.

Why Can't AI Crack Physics?

The core issue appears to be reasoning ability. Current models:

- Lack true understanding of physical principles

- Struggle with multi-step problem solving

- Can't maintain logical consistency across complex calculations

"It's like having a brilliant student who keeps making careless mistakes," one researcher noted. "You wouldn't trust them with your lab work."

The implications are serious:

- Human experts must double-check all AI output

- Potential time savings evaporate during error correction

- Autonomous scientific discovery remains distant

Companies aren't giving up though - OpenAI still plans to launch an "AI research intern" system by September 2026.

Key Points:

1️⃣ Current Limitations: Top AI models score under 10% on doctoral-level physics tests

2️⃣ Hidden Dangers: Seemingly correct answers often contain subtle errors

3️⃣ Practical Role: Better suited as assistants than independent researchers

4️⃣ Future Outlook: Significant breakthroughs needed before Nobel-worthy work