AI's Medical Diagnosis Shortcomings Revealed in New Study

AI's Clinical Reasoning Gap Exposed

Modern medicine may be embracing artificial intelligence, but a new study suggests we're far from replacing human doctors. Researchers at Massachusetts General Hospital put 21 leading AI models through rigorous medical testing, with sobering results.

The Diagnosis Dilemma

When given complete patient data (symptoms, lab results, and imaging), AI models like ChatGPT and Gemini performed impressively, achieving over 90% diagnostic accuracy. But here's the catch: medicine rarely offers complete information upfront. In the crucial "differential diagnosis" process, where a doctor must weigh multiple potential illnesses simultaneously on incomplete information, more than 80% of the AI models failed to systematically evaluate competing possibilities.

"This isn't about whether AI can recognize patterns in complete data," explains the research team. "It's about whether artificial intelligence can think like a doctor when pieces are missing - and right now, it can't."

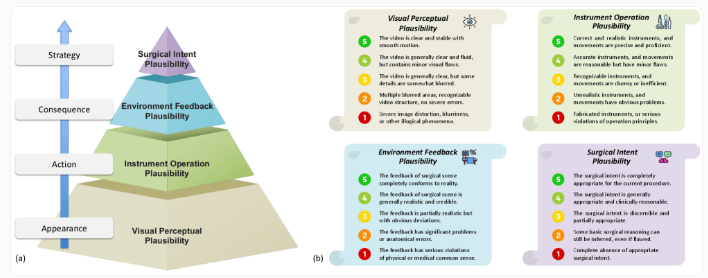

Measuring Medical Thinking

The team developed a comprehensive evaluation called PrIME-LLM that assesses a model's entire clinical reasoning process, from initial examination decisions through treatment planning. Scores ranged from just 64% to 78%, revealing fundamental limitations in how AI approaches medical problems.

Two key weaknesses emerged:

- Information dependency: AI performs well when all data is available but falters with incomplete information

- Logical sequencing: Models struggle to systematically eliminate potential diagnoses the way human doctors do

The Road Ahead for Medical AI

While the newest models show dramatic improvements over their predecessors, researchers stress they remain assistive tools rather than independent practitioners. The study suggests AI's path forward lies in moving beyond pattern recognition to develop genuine reasoning capabilities.

"This isn't about replacing doctors," notes one researcher. "It's about understanding where AI can genuinely help - and where human expertise remains irreplaceable."

Key Points

- 21 AI models tested, including ChatGPT, Claude, and Gemini

- 90%+ accuracy with complete information

- More than 80% of models struggle with differential diagnosis when data is incomplete

- PrIME-LLM scores range from 64% to 78% for comprehensive clinical reasoning

- Current role: Assistant rather than replacement for doctors