Your Phone Just Got Smarter: Gemini AI Now Handles Tasks Like a Personal Assistant

Your Smartphone Just Learned New Tricks

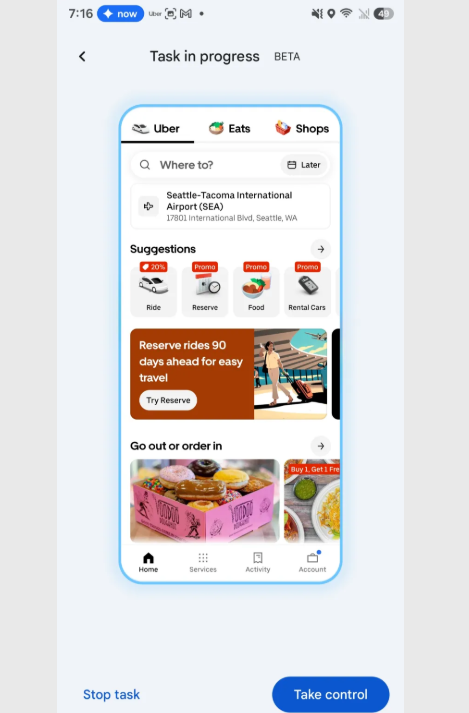

Imagine telling your phone "Order my usual coffee" and watching it navigate the Starbucks app just as you would: scrolling through menus, selecting your favorite drink, and pausing for your final approval before paying. This isn't science fiction anymore. Google's Gemini-powered task automation has entered beta testing, marking a fundamental shift in how we interact with our devices.

Beyond Voice Commands: AI That Actually Does the Work

The key difference? Traditional assistants retrieve information; Gemini performs actions. Instead of simply telling you there's an Uber available to the airport, it:

- Opens the Uber app automatically

- Identifies the correct terminal (asking if there's ambiguity)

- Prepares everything up to the final "Confirm" button

"It's eerie at first," admits early tester Mark Chen. "You give an instruction and suddenly see your phone operating itself - tapping, scrolling - but always stopping exactly where I'd normally double-check."

Safety First: Human Oversight Built In

Google has implemented multiple safeguards:

- Real-time visual feedback: Every action appears in a virtual window so users can monitor progress.

- Mandatory confirmation stops: No payment or order completes without explicit user approval.

- Instant interruption: A prominent pause button appears throughout each automated sequence.
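The confirmation stop and pause button can be pictured as a simple gate around each automated step. The sketch below is purely illustrative and is not Google's implementation: `Step`, `run_steps`, `confirm`, and `is_paused` are hypothetical names, and the assumption is that only steps flagged as sensitive (payments, order submission) need approval.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Step:
    description: str
    sensitive: bool = False  # assumption: True for payments or order submission


def run_steps(
    steps: List[Step],
    confirm: Callable[[Step], bool],               # hypothetical: asks the user, True = approved
    is_paused: Callable[[], bool] = lambda: False,  # hypothetical: True once the user taps pause
) -> Tuple[str, List[str]]:
    """Run steps in order, halting on pause or on a declined sensitive step."""
    log: List[str] = []
    for step in steps:
        # The pause check happens before every step, so the user can interrupt at any point.
        if is_paused():
            log.append("paused before: " + step.description)
            return "paused", log
        # Sensitive steps never run without explicit approval.
        if step.sensitive and not confirm(step):
            log.append("declined: " + step.description)
            return "declined", log
        log.append("did: " + step.description)
    return "completed", log


ride = [
    Step("open ride app"),
    Step("enter airport terminal"),
    Step("tap Confirm", sensitive=True),
]
status, log = run_steps(ride, confirm=lambda step: False)
print(status, log)
```

In this sketch, a declined confirmation leaves the earlier steps done and the final one untouched, which mirrors the "prepares everything up to the final Confirm button" behavior described above.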

The system currently specializes in delivery and transportation apps where procedures are relatively standardized. Complex tasks involving subjective decisions (like choosing between visually similar menu items) still require human judgment.

Why This Changes Everything

Previous automation required deep integration with each app's API, a slow process that depended on developer cooperation. Gemini's breakthrough lies in interacting with the interface directly, the way a person does:

- Scrolling through lists

- Identifying buttons by their visual properties

- Navigating multi-step flows

Because this approach does not depend on app-specific integration, thousands of apps could potentially become automatable without needing special updates.
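To make the contrast with API integration concrete, here is a minimal sketch of choosing the next action from what is visible on screen. It is an illustration under stated assumptions, not a description of how Gemini works internally: the `Element` type, the `choose_action` function, and the idea of matching buttons by their visible label are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Element:
    role: str   # assumption: e.g. "button" or "text"
    label: str  # the text a person would see on screen
    x: int
    y: int


def choose_action(screen: List[Element], wanted: str) -> Tuple[str, Optional[Tuple[int, int]]]:
    """Pick the next action using only what is visible, with no app-specific API."""
    needle = wanted.casefold()
    matches = [e for e in screen if e.role == "button" and needle in e.label.casefold()]
    if len(matches) == 1:
        return ("tap", (matches[0].x, matches[0].y))
    if not matches:
        # The target may be further down the list: scroll and look again.
        return ("scroll", None)
    # Several candidates fit: ask the user rather than guess.
    return ("ask_user", None)


screen = [
    Element("text", "Where to?", 10, 10),
    Element("button", "Terminal 1", 20, 200),
    Element("button", "Terminal 2", 20, 260),
    Element("button", "Confirm", 20, 400),
]
print(choose_action(screen, "confirm"))
print(choose_action(screen, "terminal"))
```

The same function works on any screen that can be described as a list of visible elements, which is the point of the interface-driven approach: nothing in it refers to a particular app.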

The technology isn't perfect yet: testers report occasional hesitation when it encounters unfamiliar app layouts or ambiguous options. But as the underlying models improve, we're moving toward phones that don't just respond to commands but reliably execute entire workflows from start to near-finish.

Key Points:

- Gemini AI, now in beta testing, can carry out multi-step tasks such as ride-hailing and food ordering inside the apps themselves

- No payment or order completes without explicit user approval

- Works by mimicking screen interactions rather than requiring special API access

- Currently limited to standardized processes in transportation/delivery apps

- Represents a major shift from information retrieval to task execution