Why You Should Think Twice Before Uploading Medical Images to AI Chatbots

As artificial intelligence becomes part of everyday life, more people are turning to AI chatbots such as ChatGPT, Google Gemini, and Grok to help interpret medical data. Some are even uploading sensitive medical images such as X-rays, MRIs, and PET scans to these platforms in search of health guidance. While this may seem convenient, experts warn that the practice can expose users to significant privacy and security risks.

Data Training Risks

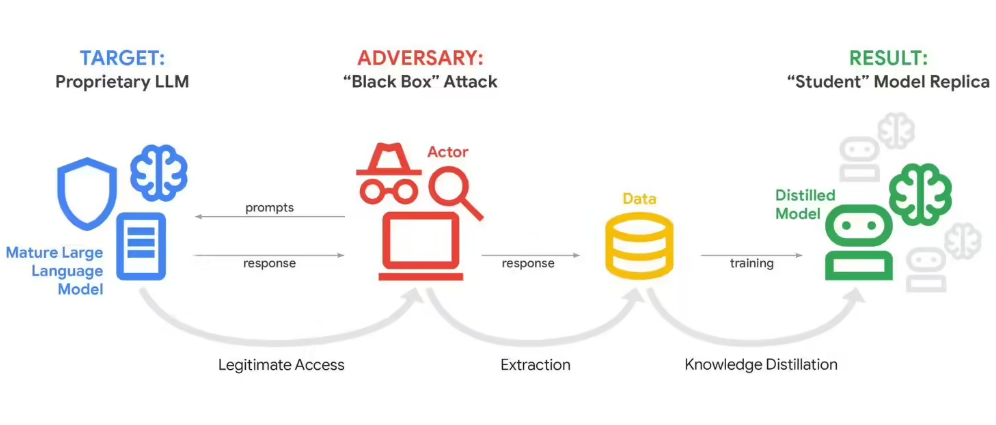

Generative AI models, like those behind popular chatbots, often rely on the data they receive to improve their algorithms and the accuracy of their outputs. However, there is limited transparency about how this data is used, whether it is stored, and to what extent it is shared with other parties. For example, uploaded medical images could be used to train the AI without the user fully consenting to, or understanding, how widely the data will be used. This lack of clarity raises concerns about privacy and the ethical handling of sensitive medical information.

Privacy Breach Concerns

In addition to the training risks, there is the question of legal protection. In the United States, medical data held by health care providers, insurers, and their business associates is protected by the Health Insurance Portability and Accountability Act (HIPAA), which restricts the disclosure of personal health information without consent. However, most AI platforms are not subject to HIPAA, meaning that medical data users upload themselves may not be protected. In some cases, individuals have discovered that their private medical information was included in datasets used to train AI models, potentially making it accessible to organizations such as healthcare providers, employers, or even government agencies.

This is especially concerning because many popular AI platforms do not have robust safeguards in place. By uploading sensitive data, users may unknowingly expose their personal medical information to unwanted sharing.

Lack of Policy Transparency

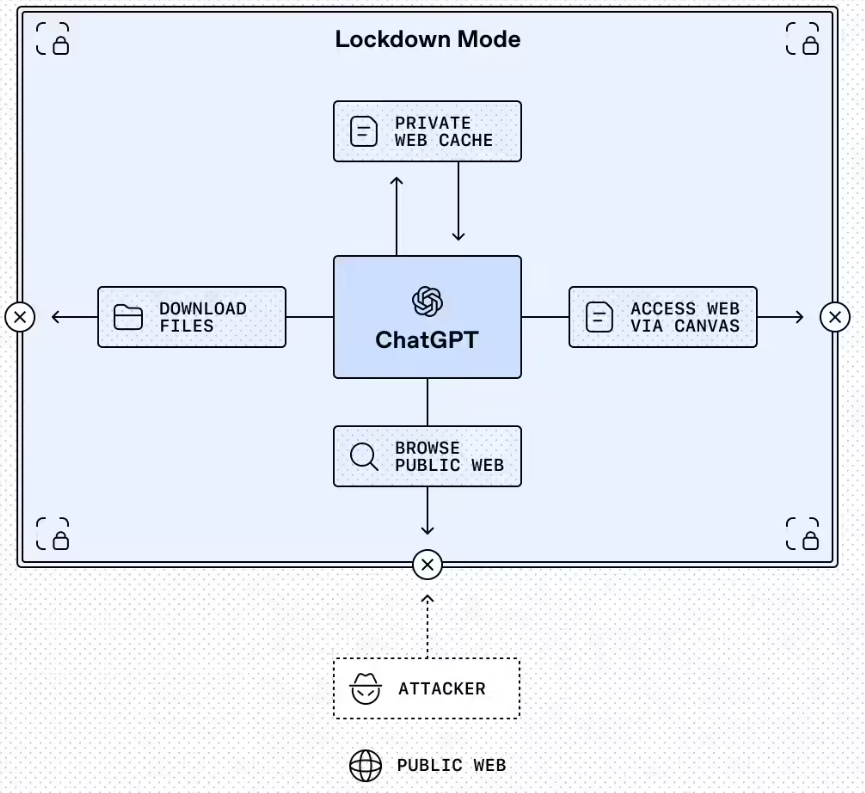

Take, for instance, the X platform, where users have been encouraged to upload their medical images to Grok, its AI assistant, in order to improve the chatbot’s interpretive capabilities. Despite this promotion, X’s privacy policy states that it shares user information with an unspecified number of “related” companies. Without clear, transparent data-sharing policies, users cannot tell how their information is being used or with whom it might be shared.

This lack of transparency in how user data is handled is a significant red flag for anyone considering uploading their private medical information to an AI platform. The potential for data to be misused or exposed is a serious concern that should not be taken lightly.

Expert Advice: Think Before You Upload

Experts caution that information uploaded to the internet rarely disappears, and they urge users to think carefully before sharing private medical data with AI platforms. While the convenience of AI technology is undeniable, the security and privacy of personal medical information should come first.

Instead of relying on AI platforms, users are encouraged to use formal medical channels that are covered by HIPAA, where their data is subject to legal protections.

How to Protect Your Privacy

To protect their privacy and minimize risks, users should:

- Use formal medical channels that are protected by HIPAA.

- Thoroughly review the privacy policies of any AI platform before using it.

- Avoid uploading sensitive medical images or personal health information to platforms that do not clearly state how that data is stored, used, and shared.

- Stay informed about changes to the data usage policies of the platforms they engage with.

By taking these precautions, individuals can safeguard their personal health data and make more informed decisions about when and how to use AI technologies.

Key Points

- Uploading medical images to AI chatbots can expose users to privacy and security risks.

- AI platforms may use personal medical data for training, with little transparency about how it is used.

- Most AI platforms are not subject to HIPAA regulations, leaving user data vulnerable.

- Users should prioritize HIPAA-protected medical channels and carefully read privacy policies before using AI platforms.

- Staying informed about data usage policies can help mitigate potential risks associated with AI platforms.