Kuaishou's New AI Model Sees and Thinks Like Never Before

Kuaishou Breaks New Ground With Multimodal AI

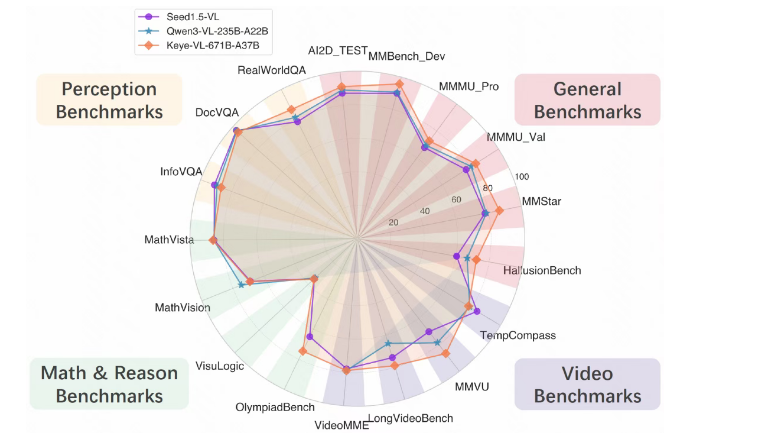

Imagine an artificial intelligence that doesn't just recognize objects in photos but actually understands their context and relationships. That's precisely what Kuaishou has achieved with its newly launched Keye-VL-671B-A37B model, representing one of the most significant advances in multimodal AI technology this year.

Seeing Beyond Pixels

The Beijing-based tech company describes its creation as "good at seeing and thinking" - a modest description for technology that outperforms previous models across multiple benchmarks. Where earlier systems might identify a cat playing with yarn, this new model could infer the playful mood or even predict the next movement.

"We're moving beyond simple recognition," explains Dr. Li Wei, Kuaishou's lead researcher on the project. "Our model doesn't just see images - it comprehends them in ways that allow for meaningful interaction and problem-solving."

Technical Breakthroughs Under the Hood

The magic happens through an innovative architecture combining:

- DeepSeek-V3-Terminus as the language model foundation

- KeyeViT as the vision encoder

- MLP layers that connect the vision encoder to the language model
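To make the role of the connecting layers concrete, here is a minimal sketch of how such a three-part design typically passes data along. It is not Kuaishou's implementation: the `MLPProjector` class, the `build_llm_input` helper, the two-layer shape, the ReLU activation, and all dimensions are assumptions chosen for illustration, following the common pattern in which a small MLP maps vision-encoder features into the language model's embedding width.

```python
import random


def init_linear(in_dim, out_dim, rng):
    """Return (weights, bias) for a toy linear layer with small random weights."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    bias = [0.0] * out_dim
    return weights, bias


def linear(x, weights, bias):
    """Compute W x + b for a single feature vector stored as a plain list."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    """ReLU stands in here for whatever activation the real connector uses."""
    return [max(0.0, v) for v in x]


class MLPProjector:
    """Hypothetical two-layer MLP mapping vision features to the LLM embedding width."""

    def __init__(self, vision_dim, hidden_dim, llm_dim, seed=0):
        rng = random.Random(seed)
        self.w1, self.b1 = init_linear(vision_dim, hidden_dim, rng)
        self.w2, self.b2 = init_linear(hidden_dim, llm_dim, rng)

    def __call__(self, patch_features):
        # Project every image-patch feature vector independently.
        return [
            linear(relu(linear(p, self.w1, self.b1)), self.w2, self.b2)
            for p in patch_features
        ]


def build_llm_input(patch_features, text_embeddings, projector):
    """Prepend projected visual tokens to the text token embeddings."""
    return projector(patch_features) + text_embeddings


# Toy sizes, chosen only for illustration (assumed, not from the article).
VISION_DIM, HIDDEN_DIM, LLM_DIM = 8, 16, 12
projector = MLPProjector(VISION_DIM, HIDDEN_DIM, LLM_DIM)
patches = [[0.5] * VISION_DIM for _ in range(4)]  # stand-in for vision encoder output
text = [[0.1] * LLM_DIM for _ in range(3)]        # stand-in for text token embeddings
sequence = build_llm_input(patches, text, projector)
print(len(sequence), len(sequence[0]))  # prints: 7 12
```

The point of the sketch is the shape change: four image patches and three text tokens end up as one sequence of seven vectors that all share the language model's width, which is what lets a text model attend over visual input.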

The training regimen reads like an elite athlete's preparation schedule:

- Initial alignment with frozen parameters

- Full pre-training across all systems

- Precision annealing with ultra-high-quality data

The team fed the system 300 billion carefully curated data points - by a rough comparison, on the scale of every photo uploaded to Instagram over nearly three years.
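The three-stage regimen can be pictured as a schedule that says which components are updated at each step. The sketch below is an assumption-laden illustration, not Kuaishou's training code: the article does not say which parameters are frozen during alignment, so the choice here (only the MLP connector trains in stage one, everything trains afterward) follows a common convention, and the `Stage` class, component names, and data labels are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical component names matching the three parts listed in the article.
COMPONENTS = ("vision_encoder", "mlp_projector", "language_model")


@dataclass(frozen=True)
class Stage:
    name: str
    trainable: frozenset  # components whose weights are updated in this stage
    data: str             # informal label for the data used


SCHEDULE = [
    # Assumption: alignment trains only the connector, keeping the rest frozen.
    Stage("alignment", frozenset({"mlp_projector"}), "alignment data"),
    # The article describes this stage as pre-training across all systems.
    Stage("full_pretraining", frozenset(COMPONENTS), "full pre-training mix"),
    # Assumption: annealing keeps everything trainable on a smaller, cleaner set.
    Stage("annealing", frozenset(COMPONENTS), "ultra-high-quality subset"),
]


def frozen_components(stage):
    """List the components that stay fixed during the given stage."""
    return [c for c in COMPONENTS if c not in stage.trainable]


for stage in SCHEDULE:
    print(stage.name, "frozen:", frozen_components(stage))
```

In a real training loop, the `trainable` set would decide which parameter groups receive gradient updates; here it only documents the order of the stages and what changes between them.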

Practical Applications Coming Soon

The implications stretch far beyond technical benchmarks:

- Education: Imagine math tutors that can explain problems by drawing diagrams in real time

- Accessibility: Systems that don't just describe images but interpret their emotional content

- Business Intelligence: Automated analysis of complex charts and financial reports

The roadmap gets even more ambitious, with plans to develop "multimodal Agent capabilities" - essentially creating AI assistants that can use digital tools autonomously while reasoning through visual information.

What This Means For AI's Future

While competitors focus on making their models bigger, Kuaishou appears committed to making theirs smarter. The emphasis on reasoning over raw processing power suggests a shift toward more human-like artificial intelligence - systems that don't just calculate but comprehend.

The open-sourcing of this technology could accelerate innovation across the entire field, though some experts caution about potential misuse risks as these capabilities become more widely available.

Key Points:

- Visual Comprehension: Goes beyond recognition to understanding the context and relationships in images and videos

- Training Innovation: Three-stage process yields unprecedented reasoning abilities

- Practical Focus: Designed for real-world applications from education to business

- Open Approach: Released code could spur broader industry advancements