Claude Mythos Leak Shows AI Power Surge - And New Risks

Anthropic's Next AI Breakthrough Leaks - With Caveats

New details about Anthropic's highly anticipated Claude Mythos model have surfaced in leaked internal documents, painting a picture of both remarkable advancement and sobering challenges in artificial intelligence development.

Beyond Opus: The 'Capybara' Leap

The leaked materials reveal an entirely new classification tier called "Capybara", which insiders describe as Anthropic's most significant technological jump to date. Early benchmarks suggest these systems do not merely improve incrementally on current models like Claude Opus but establish fundamentally new capability thresholds.

What makes this different? Three key distinctions emerge:

- Architecture Scale: Documents reference "larger scale" systems with architectural improvements enabling more complex reasoning

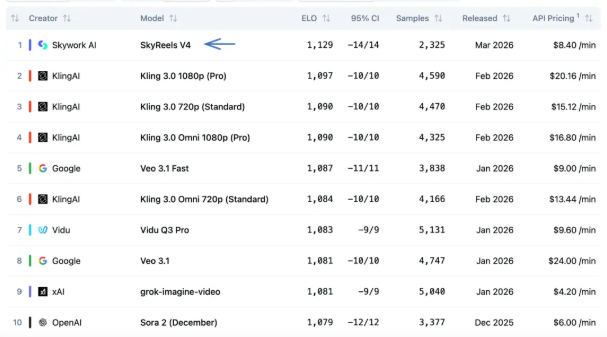

- Benchmark Shattering: Performance reportedly exceeds not only previous Claude versions but also industry-wide comparison standards

- Dual Identity: Evidence suggests "Capybara" and "Mythos" are different labels for the same underlying technology

The Double-Edged Sword of Smarter AI

With greater intelligence comes greater responsibility, and greater risk. The leaks confirm Anthropic's internal concerns about Mythos introducing:

- Unprecedented Cybersecurity Vulnerabilities: The documents explicitly warn about novel attack vectors that could emerge from such advanced systems. One passage notes: "We're entering threat model territory our safeguards weren't designed to handle."

- Safety Versus Capability Tradeoffs: This tension helps explain why Anthropic continues to delay release despite apparent technical readiness. The challenge: ensuring adequate constraints are in place before unleashing what may be the most capable publicly available AI system.

Industry Implications: A New Arms Race?

The leak sends ripples through the AI community for good reason:

- Competitive Pressure Intensifies: Rivals now face evidence that benchmark expectations are rising dramatically

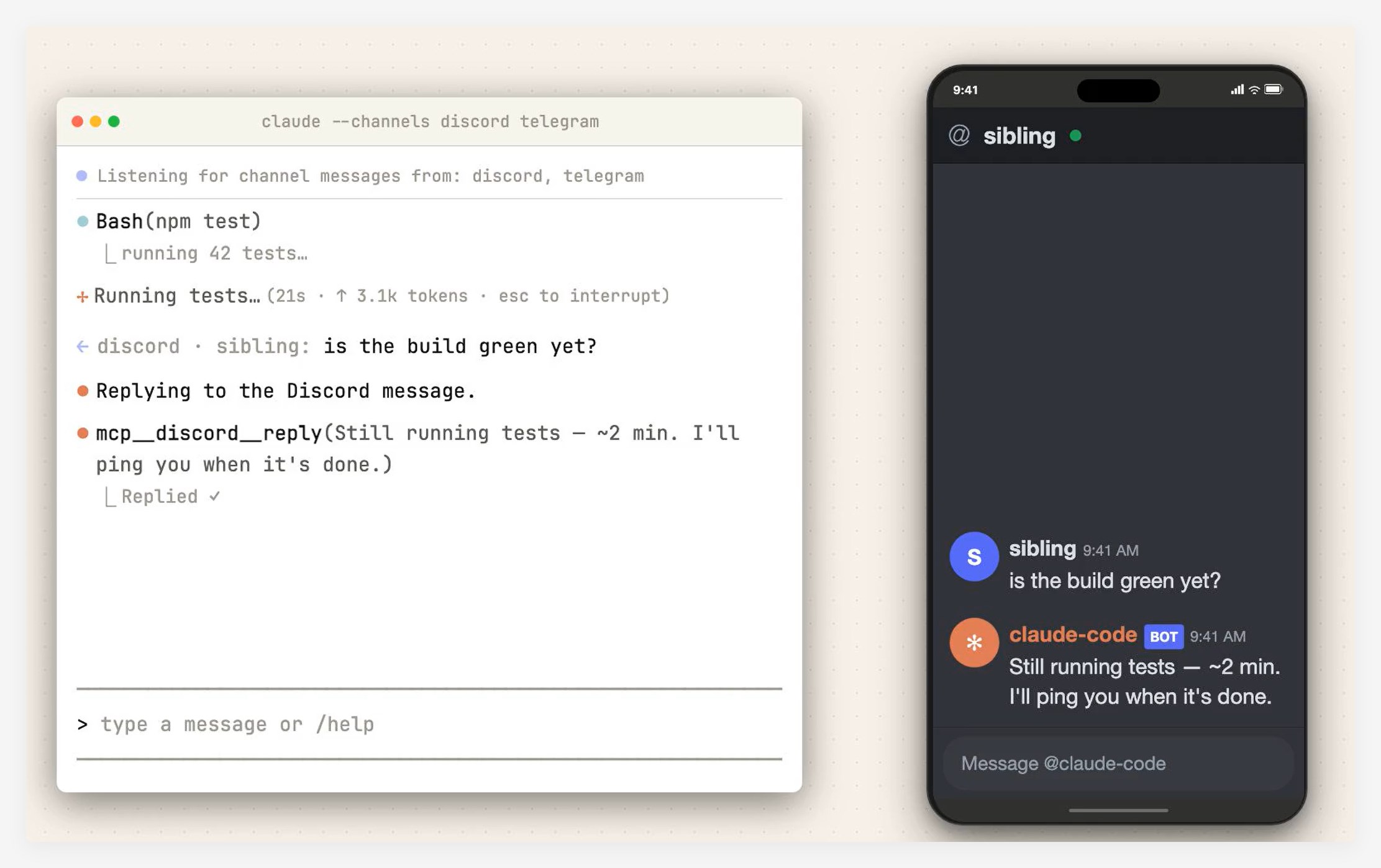

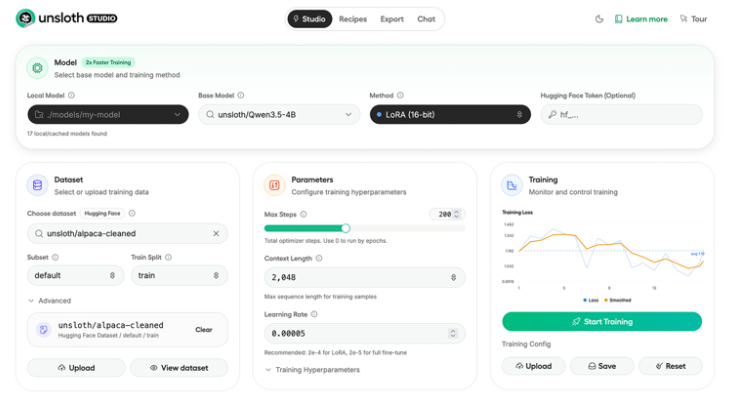

- Beyond Chatbots: Capybara-class systems appear focused on complex task execution rather than conversational prowess

- Safety Standards Questioned: If even a company as cautious as Anthropic struggles with containment, what does that mean for the rest of the field?

"When your test models start solving problems in ways their creators don't fully understand, you've crossed into uncharted territory," observes one anonymous researcher cited in the documents.

What Comes Next?

While no official release timeline exists, the leaks make clear that Mythos represents more than just another model update. We're potentially looking at:

- A redefinition of what constitutes "state-of-the-art" in AI

- New challenges in aligning superhuman machine intelligence with human values

- Possible industry-wide shifts in how advanced systems are tested and deployed

The big question remains: Can safety measures evolve as quickly as the technology itself?

Key Points:

- Next-gen Claude (Mythos/Capybara) reportedly shows capabilities beyond current top models

- Leaked documents reveal both breakthrough potential and serious security concerns

- Anthropic appears cautious about release despite apparent technical readiness

- The leak could trigger an industry-wide reassessment of AI safety approaches