Adobe and MIT Unveil CausVid, a Revolutionary Real-Time Video Generation Model

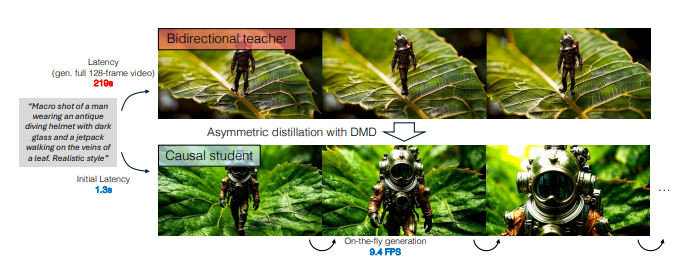

Adobe and MIT have partnered to launch CausVid, a video generation model built for real-time use. With a first-frame delay of just 1.3 seconds and a generation speed of 9.4 frames per second after that, CausVid represents a significant leap forward in real-time video generation.
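To put the two figures together, the short sketch below estimates when a given frame becomes available. It assumes the first frame appears after the 1.3-second delay and every later frame arrives at a steady 9.4 frames per second. That constant rate is a simplification for illustration, and the function name is hypothetical.

```python
FIRST_FRAME_DELAY_S = 1.3   # reported first-frame delay, in seconds
FRAMES_PER_SECOND = 9.4     # reported generation speed


def seconds_until_frame(n: int) -> float:
    """Estimate the wait until frame n (1-indexed) is available.

    Assumes a constant frame rate after the first frame, which is a
    simplification and not a measured property of the model.
    """
    if n < 1:
        raise ValueError("frame index must be at least 1")
    return FIRST_FRAME_DELAY_S + (n - 1) / FRAMES_PER_SECOND


wait_for_frame_95 = seconds_until_frame(95)
```

Under these assumptions, the 95th frame would be ready after roughly 11.3 seconds: 1.3 seconds for the first frame, plus 94 further frames at 9.4 frames per second.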

Overcoming Traditional Limitations in Video Generation

Traditional video generation models are often slow. They analyze the entire video sequence before rendering each frame, so producing a clip can take minutes or even hours. This is especially problematic for industries that need quick feedback and real-time interaction, such as gaming and virtual reality.

CausVid addresses this with a causal generation method. Instead of processing the entire sequence, it predicts the next frame by analyzing only the frames already generated. This approach reduces computational overhead, and because no frame depends on later ones, each frame can be shown as soon as it is produced.
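The idea of causal generation can be sketched in a few lines. The example below is a minimal illustration, not CausVid's implementation: `generate_causal` and `toy_predictor` are hypothetical names, frames are plain lists of numbers, the first frame is assumed to be given, and the predictor is a trivial stand-in for a real model.

```python
from typing import Callable, Iterator, List

Frame = List[float]  # toy stand-in for an image or latent frame


def generate_causal(
    first_frame: Frame,
    num_frames: int,
    predict_next: Callable[[List[Frame]], Frame],
) -> Iterator[Frame]:
    """Yield frames one at a time, each predicted from earlier frames only."""
    if num_frames < 1:
        return
    history = [first_frame]
    yield first_frame
    while len(history) < num_frames:
        frame = predict_next(history)  # sees the past, never the future
        history.append(frame)
        yield frame                    # can be displayed immediately


def toy_predictor(history: List[Frame]) -> Frame:
    """Hypothetical predictor: average the last two frames, add a small drift."""
    recent = history[-2:]
    return [sum(vals) / len(recent) + 0.1 for vals in zip(*recent)]


short_clip = list(generate_causal([0.0, 1.0], 3, toy_predictor))
long_clip = list(generate_causal([0.0, 1.0], 5, toy_predictor))
```

Because each frame depends only on its predecessors, the first three frames of `long_clip` are identical to `short_clip`. Extending the clip never changes frames that have already been shown, and this is what makes streaming possible.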

The Science Behind CausVid's Lightning Speed

So, how did CausVid achieve this breakthrough? The answer lies in asymmetric distillation. Researchers first trained a bidirectional diffusion model that generates high-quality videos, but slowly. They then transferred the knowledge from this teacher model to CausVid, a causal student, allowing it to predict the next frame with remarkable speed. The distillation is asymmetric because the teacher sees the whole sequence while the student sees only past frames.
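The teacher-student setup can be illustrated with a toy example. The article does not say which training loss CausVid uses, so the sketch below swaps in plain regression: a causal student with two weights learns to imitate a bidirectional teacher that smooths a sequence using past and future values. All names and the data are hypothetical.

```python
import random


def teacher(seq):
    """Bidirectional stand-in: averages the previous, current, and next values."""
    n = len(seq)
    return [(seq[max(t - 1, 0)] + seq[t] + seq[min(t + 1, n - 1)]) / 3
            for t in range(n)]


def student(seq, w):
    """Causal stand-in: uses only the current and previous values."""
    return [w[0] * seq[t] + w[1] * seq[max(t - 1, 0)] for t in range(len(seq))]


def mse(seqs, w):
    """Mean squared gap between student and teacher outputs."""
    errs = [(s - y) ** 2
            for seq in seqs
            for s, y in zip(student(seq, w), teacher(seq))]
    return sum(errs) / len(errs)


def distill(seqs, steps=200, lr=0.1):
    """Fit the student to the teacher with full-batch gradient descent."""
    w = [0.0, 0.0]
    n = sum(len(seq) for seq in seqs)
    for _ in range(steps):
        grad = [0.0, 0.0]
        for seq in seqs:
            preds, targets = student(seq, w), teacher(seq)
            for t, (p, y) in enumerate(zip(preds, targets)):
                err = p - y
                grad[0] += 2 * err * seq[t] / n
                grad[1] += 2 * err * seq[max(t - 1, 0)] / n
        w = [w[0] - lr * grad[0], w[1] - lr * grad[1]]
    return w


random.seed(0)
data = [[random.random() for _ in range(16)] for _ in range(32)]
weights = distill(data)
loss_before = mse(data, [0.0, 0.0])
loss_after = mse(data, weights)
```

The student cannot match the teacher exactly, since it never sees the next value, but its error drops substantially after training. This is the trade the article describes: a cheaper causal model that approximates a slower bidirectional one.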

Additionally, techniques such as ODE initialization and KV caching were implemented to further optimize the model’s performance during both training and inference. These innovations ensure that CausVid not only runs faster but also maintains stability during operation.
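KV caching is a general technique for autoregressive attention models, and a small sketch shows why it helps. The keys and values computed for past frames are stored and reused instead of being recomputed at every step. The example below is a toy illustration with hypothetical names and fixed scaling in place of learned projections. It is not CausVid's code.

```python
import math


def project(frame, scale):
    """Hypothetical stand-in for a learned key or value projection."""
    return [scale * x for x in frame]


def attend(query, keys, values):
    """Softmax attention of one query over all stored keys and values."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(len(values[0]))]


def step_without_cache(frames):
    """Re-project every past frame each time a new frame arrives."""
    keys = [project(f, 0.5) for f in frames]
    values = [project(f, 2.0) for f in frames]
    return attend(frames[-1], keys, values)


class CachedAttention:
    """Project each frame once and keep its key and value for later steps."""

    def __init__(self):
        self.keys, self.values = [], []

    def step(self, frame):
        self.keys.append(project(frame, 0.5))
        self.values.append(project(frame, 2.0))
        return attend(frame, self.keys, self.values)


frames = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
cached = CachedAttention()
cached_outputs = [cached.step(f) for f in frames]
naive_outputs = [step_without_cache(frames[:t + 1]) for t in range(len(frames))]
```

Both paths produce the same outputs, but the cached version projects each frame once while the uncached version re-projects the whole history at every step. The saving grows with the length of the video. Caching of this kind only works because generation is causal: past keys and values never change once a frame is produced.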

A Powerful and Versatile Tool for Video Generation

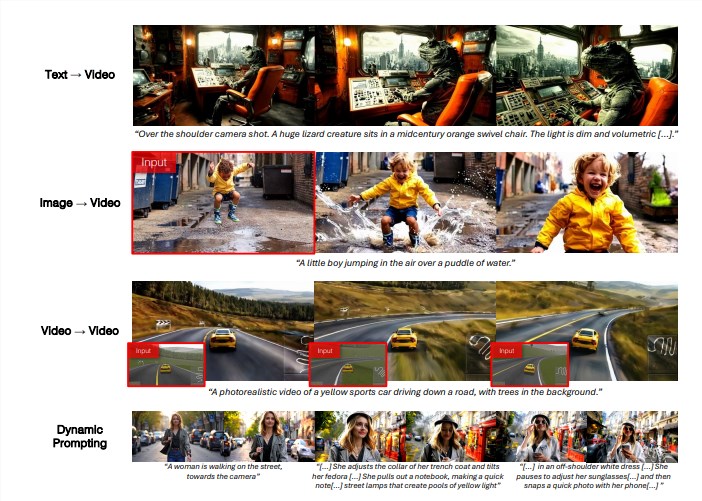

CausVid is not only fast but also versatile. It supports a variety of video generation tasks, including text-to-video, image-to-video, video-to-video conversion, and dynamic prompting. Each of these tasks can be completed with extremely low latency, offering tremendous potential for real-time applications.

The model’s ability to generate videos quickly and efficiently opens up exciting possibilities in fields ranging from gaming to virtual reality and streaming. Imagine generating a dynamic game scene in real time, or creating custom video content using voice commands and actions. The potential applications of CausVid are vast, with the model poised to redefine how video content is created and consumed.

The development of CausVid marks a major breakthrough in video generation, promising to bring about real-time interaction and a host of new capabilities for industries and creators alike.

For more information about CausVid, visit the official project page: https://causvid.github.io/

Key Points

- CausVid achieves a first-frame delay of just 1.3 seconds and generates video at 9.4 frames per second.

- The model uses a causal generation method to predict the next frame, reducing computational overhead.

- Asymmetric distillation, ODE initialization, and KV caching are key technologies enabling CausVid’s speed and stability.

- CausVid supports text-to-video, image-to-video, video-to-video conversion, and dynamic prompts with low latency.

- The model promises to revolutionize industries like gaming, virtual reality, and streaming by enabling real-time video creation.